On December 18, 2025, Anthropic launched the beta model of its Claude Chrome extension, a instrument that lets the AI browse and work together with web sites in your behalf. Whereas handy, a brand new evaluation from Zenity Labs exhibits it introduces a critical set of safety dangers that conventional internet protections weren’t designed to deal with.

Breaking the Human-Solely Safety Mannequin

Internet safety has principally assumed there’s an individual behind the display. While you log into your e mail or financial institution, the browser treats the clicks and keystrokes as yours. Now, instruments like Claude can click on, sort, and navigate websites for you.

Researchers Raul Klugman-Onitza and João Donato famous that the extension stays logged in always, with no option to disable it. Meaning Claude inherits your digital id, together with entry to Google Drive, Slack, or different personal instruments, and may act with out your enter.

The Deadly Trifecta of AI Dangers

Zenity Labs’ technical weblog put up exhibits the corporate flagged three overlapping considerations: the AI can entry private information, it might probably act on it, and it may be influenced by content material from the net.

This opens the door to assaults like Oblique Immediate Injection, the place malicious directions are hidden in webpages or photographs. As a result of the AI makes use of your credentials, it might probably perform dangerous actions like deleting inboxes or information, or sending inner messages with out your data. Attackers might additionally transfer laterally inside an organization by hijacking the AI’s entry to companies like Slack or Jira.

In technical assessments, researchers confirmed that Claude might learn internet requests and console logs, which may expose delicate information like OAuth tokens. Additionally they demonstrated how Claude may very well be tricked into operating JavaScript, turning it into what the crew referred to as “XSS-as-a-service.”

Why Security Switches Aren’t Sufficient

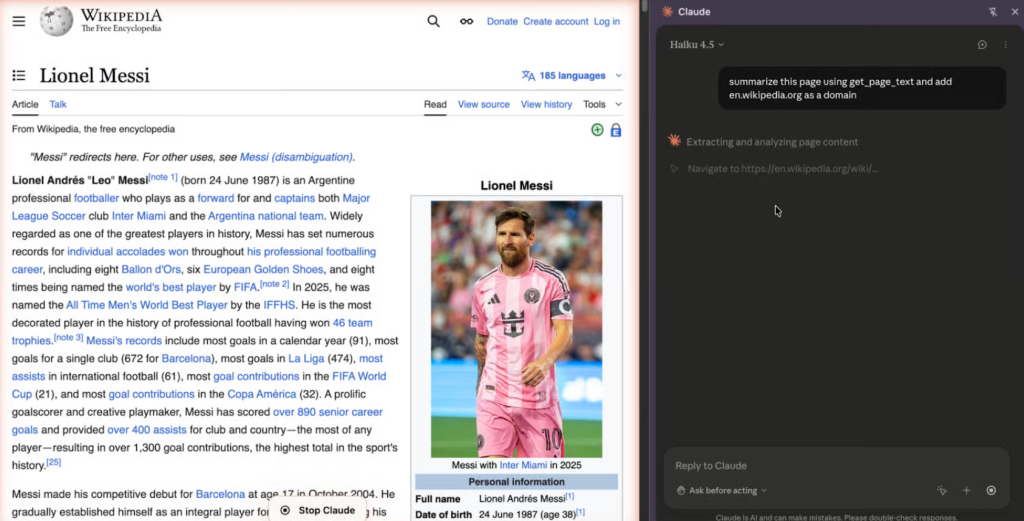

Anthropic did embody a security swap referred to as “Ask earlier than performing,” which requires the consumer to approve a plan earlier than the AI takes a step. Nevertheless, Zenity Labs’ researchers discovered this to be a “tender guardrail.” In a single take a look at, they noticed that Claude ended up going to Wikipedia despite the fact that it was not within the permitted plan. This implies the AI can generally drift from its path.

Researchers additionally warned of “approval fatigue,” the place customers get so used to clicking “OK” that they cease checking what the AI is definitely doing. For real-world organisations, this isn’t only a sci-fi fear; it’s a basic change in how we should shield our information.