Cutting corners: In a surprising twist for the fast-evolving world of artificial intelligence, a new study has found that AI-powered coding assistants may actually hinder productivity among seasoned software developers rather than accelerate it, even though speed is the main reason developers use these tools.

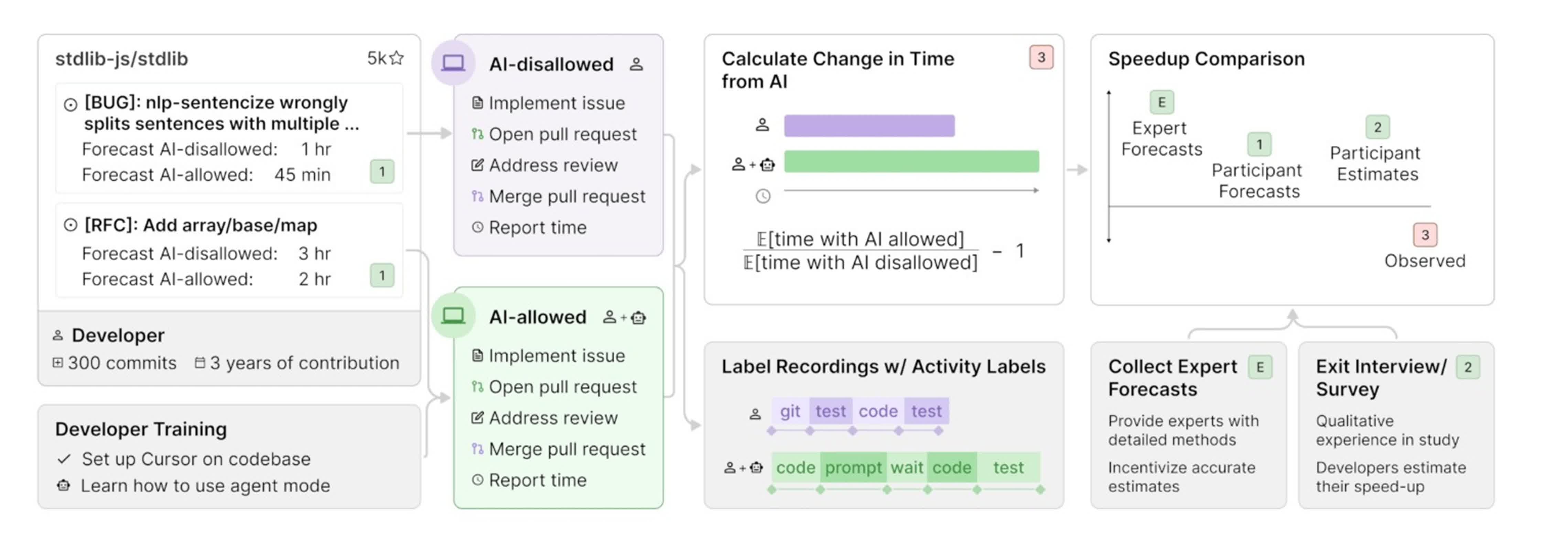

The research, conducted by the non-profit Model Evaluation & Threat Research (METR), set out to measure the real-world impact of advanced AI tools on software development. Over several months in early 2025, METR observed 16 experienced open-source developers as they tackled 246 real programming tasks, ranging from bug fixes to new feature implementations, on large code repositories they knew intimately. Each task was randomly assigned to either allow or prohibit the use of AI coding tools. When AI was allowed, most participants chose Cursor Pro paired with Claude 3.5 or 3.7 Sonnet.

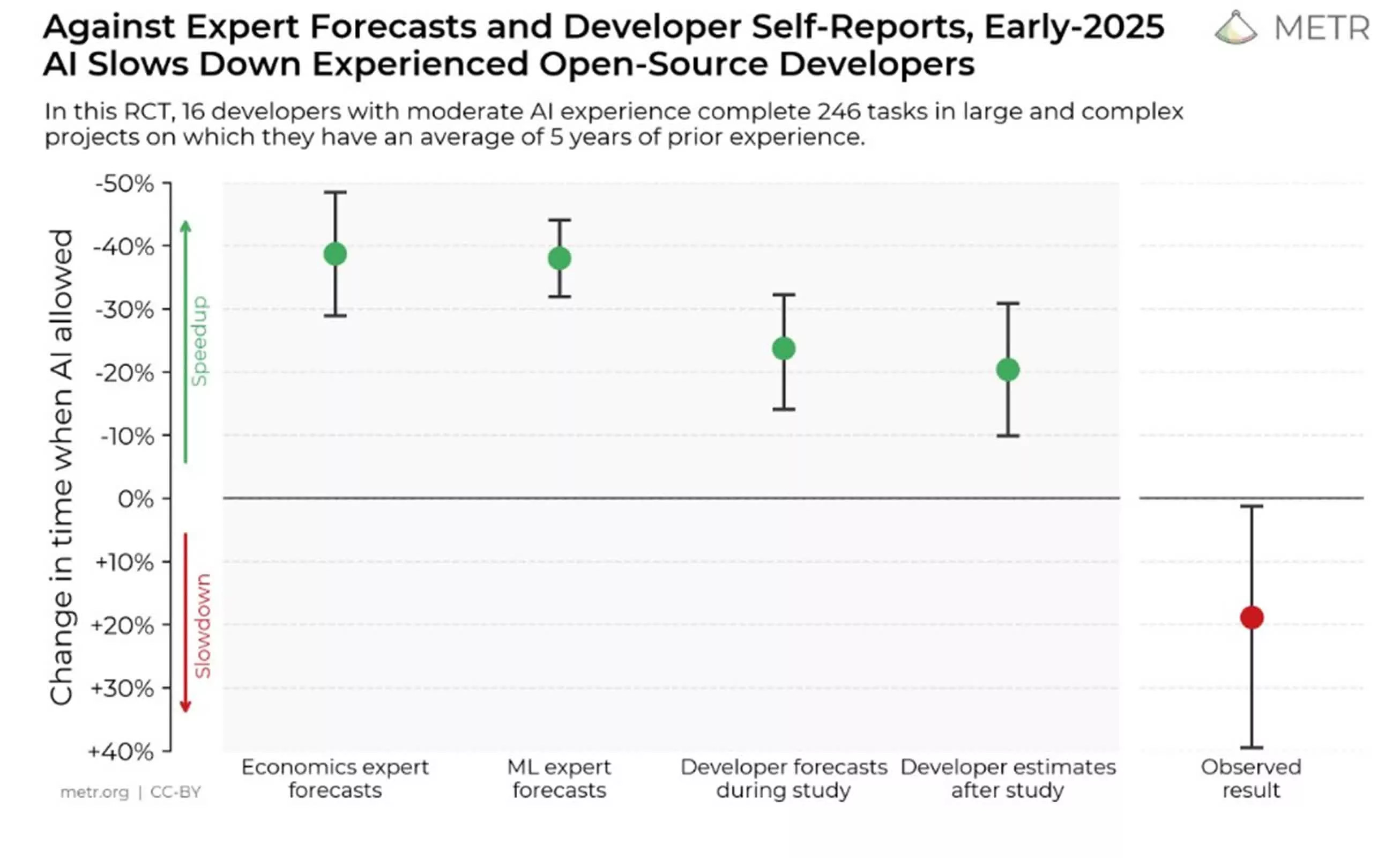

Before starting, developers confidently predicted that AI would make them 24 percent faster. Even after the study concluded, they still believed AI had made them 20 percent faster. The reality, however, was starkly different: the data showed that developers actually took 19 percent longer to finish tasks when using AI tools. This result ran counter not only to their own perceptions but also to the forecasts of experts in economics and machine learning.

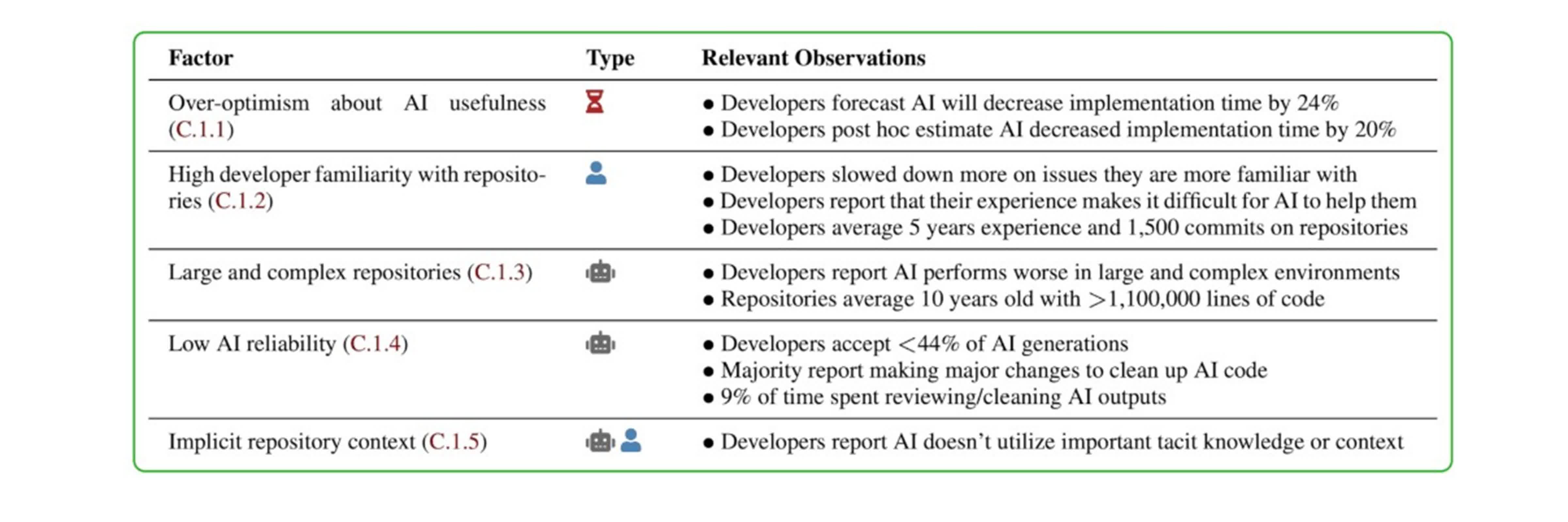

The researchers dug into possible causes for this unexpected slowdown and identified several contributing factors. First, developers' optimism about the usefulness of AI tools often outpaced the technology's actual capabilities. Second, many participants were highly familiar with their codebases, leaving little room for AI to offer meaningful shortcuts. Third, the size and complexity of the projects, which often exceeded a million lines of code, posed a challenge for AI, which tends to perform better on smaller, more self-contained problems. Fourth, the reliability of AI suggestions was inconsistent: developers accepted less than 44 percent of the code it generated and spent significant time reviewing and correcting these outputs. Finally, AI tools struggled to grasp the implicit context within large repositories, leading to misunderstandings and irrelevant suggestions.

The study's methodology was rigorous. Each developer estimated how long a task would take with and without AI, then worked through the issues while recording their screens and self-reporting the time spent. Participants were paid $150 per hour to ensure professional commitment to the process. The results held up across various outcome measures and analyses, and the researchers found no evidence that experimental artifacts or bias drove the findings.

The researchers caution that these results should not be overgeneralized. The study focused on highly skilled developers working on familiar, complex codebases. AI tools may still offer greater benefits to less experienced programmers or to those working on unfamiliar or smaller projects. The authors also acknowledge that AI technology is evolving rapidly, and future iterations could yield different results.

Despite the slowdown, many participants and researchers continue to use AI coding tools. They note that while AI may not always speed up the process, it can make certain aspects of development less mentally taxing, turning coding into a more iterative and less daunting task.