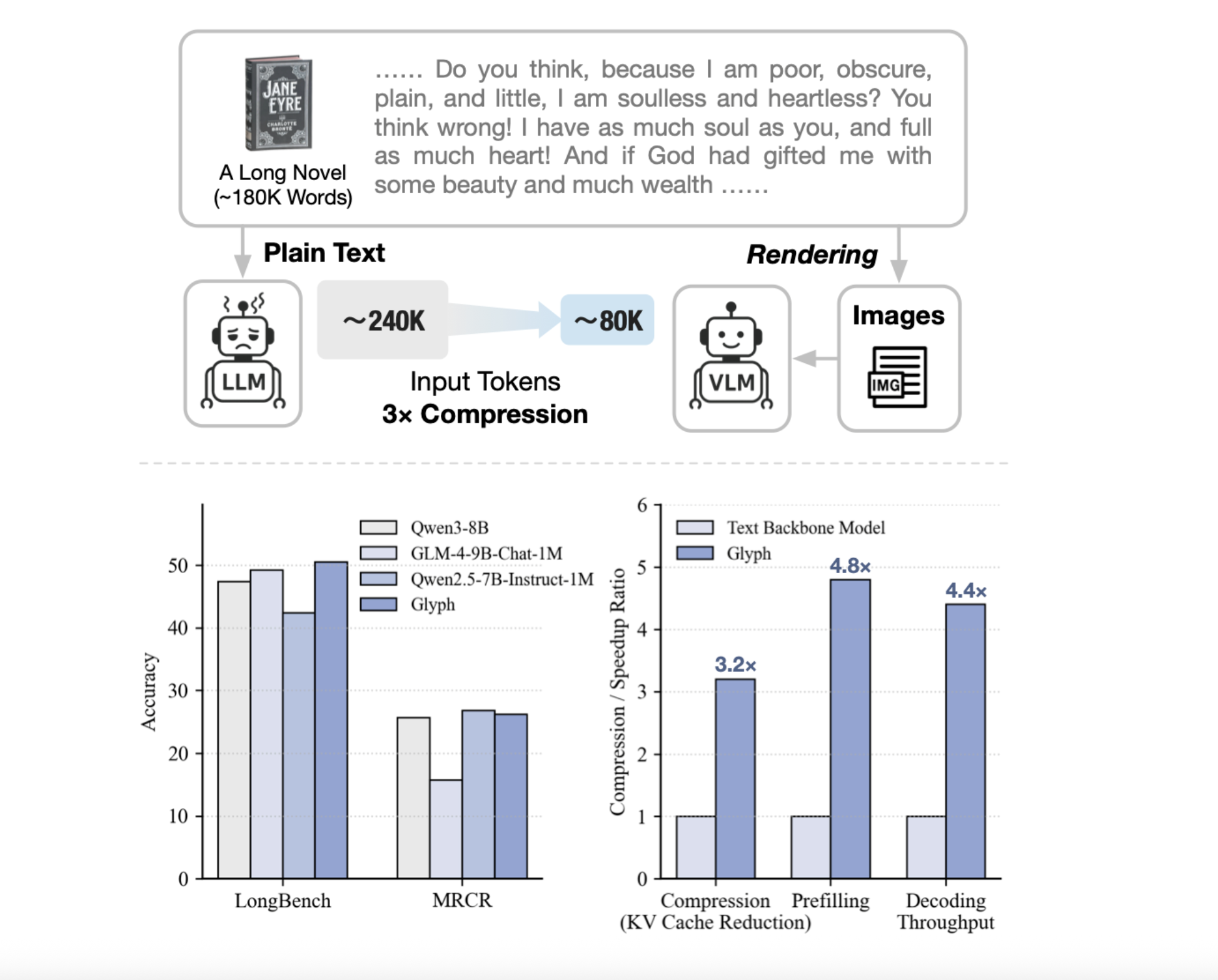

Can we render long texts as images and use a VLM to achieve 3–4× token compression, preserving accuracy while scaling a 128K context toward 1M-token workloads? A team of researchers from Zhipu AI released Glyph, an AI framework for scaling context length through visual-text compression. Glyph renders ultra-long text into page images, and a vision-language model (VLM) processes those pages end to end. Each visual token encodes many characters, so the effective token sequence gets shorter while semantics are preserved. The team reports that Glyph achieves 3–4× token compression on long text sequences without performance degradation, enabling significant gains in memory efficiency, training throughput, and inference speed.
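To make "each visual token encodes many characters" concrete, here is a minimal sketch that estimates a per-page compression ratio from rendering parameters. It is not Glyph's code: the 28-pixel patch per visual token, the 4 characters per text token, the average glyph width, and the `RenderConfig` fields are all illustrative assumptions.

```python
import math
from dataclasses import dataclass

# Illustrative assumptions, not values taken from the Glyph paper.
PATCH_PX = 28               # one visual token covers a 28x28-pixel patch
CHARS_PER_TEXT_TOKEN = 4.0  # rough average for English text with a BPE tokenizer


@dataclass
class RenderConfig:
    """Hypothetical rendering knobs; field names are not Glyph's API."""
    page_width_in: float = 8.27    # A4 width in inches
    page_height_in: float = 11.69  # A4 height in inches
    dpi: int = 72
    font_size_pt: float = 9.0
    line_height: float = 1.0       # line pitch as a multiple of font size
    char_width_em: float = 0.5     # assumed average glyph width in ems


def chars_per_page(cfg: RenderConfig) -> int:
    # Typographic points are 1/72 inch, independent of the rendering dpi.
    width_pt = cfg.page_width_in * 72
    height_pt = cfg.page_height_in * 72
    chars_per_line = int(width_pt / (cfg.font_size_pt * cfg.char_width_em))
    lines = int(height_pt / (cfg.font_size_pt * cfg.line_height))
    return chars_per_line * lines


def visual_tokens_per_page(cfg: RenderConfig) -> int:
    # Pixel dimensions scale with dpi; a partial patch still costs a full token.
    width_px = cfg.page_width_in * cfg.dpi
    height_px = cfg.page_height_in * cfg.dpi
    return math.ceil(width_px / PATCH_PX) * math.ceil(height_px / PATCH_PX)


def compression_ratio(cfg: RenderConfig) -> float:
    """Text tokens replaced per visual token for one full page."""
    text_tokens = chars_per_page(cfg) / CHARS_PER_TEXT_TOKEN
    return text_tokens / visual_tokens_per_page(cfg)


for dpi in (72, 96, 120):
    print(dpi, round(compression_ratio(RenderConfig(dpi=dpi)), 2))
```

Under these toy assumptions the ratio falls as dpi rises and as fonts grow, which is the same trade-off the rendering search has to navigate; the specific numbers are illustrative, not Glyph's results.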

Why Glyph?

Conventional methods extend positional encodings or modify attention, but compute and memory still scale with token count. Retrieval trims the input, but risks missing evidence and adds latency. Glyph changes the representation instead: it converts text to images and shifts the burden to a VLM that already learns OCR, layout, and reasoning. This increases information density per token, so a fixed token budget covers more of the original context. Under extreme compression, the research team shows that a 128K-context VLM can address tasks that originate from 1M-token-level text.
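The budget argument is simple arithmetic: if each visual token stands in for r text tokens on average, a budget of B visual tokens covers roughly B·r tokens of original text. A short sketch, assuming "128K" means 131,072 tokens and ignoring prompt and output tokens:

```python
# Assumption: "128K" means 131,072 tokens; prompt and output tokens are ignored.
CONTEXT_BUDGET = 128 * 1024


def covered_text_tokens(budget: int, ratio: float) -> int:
    """Original-text tokens that fit when each visual token replaces `ratio` text tokens."""
    return int(budget * ratio)


def ratio_needed(budget: int, target_text_tokens: int) -> float:
    """Average compression ratio needed to fit `target_text_tokens` into `budget`."""
    return target_text_tokens / budget


for ratio in (1.0, 3.0, 4.0):
    print(f"{ratio}x -> {covered_text_tokens(CONTEXT_BUDGET, ratio):,} text tokens")
print(f"1M tokens needs ~{ratio_needed(CONTEXT_BUDGET, 1_000_000):.1f}x")
```

At a 3 to 4 times average, a 128K window covers about 393K to 524K text tokens; reaching 1M needs a ratio near 7.6, well above that average, which is why the 1M-token case is described as extreme compression.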

System design and training

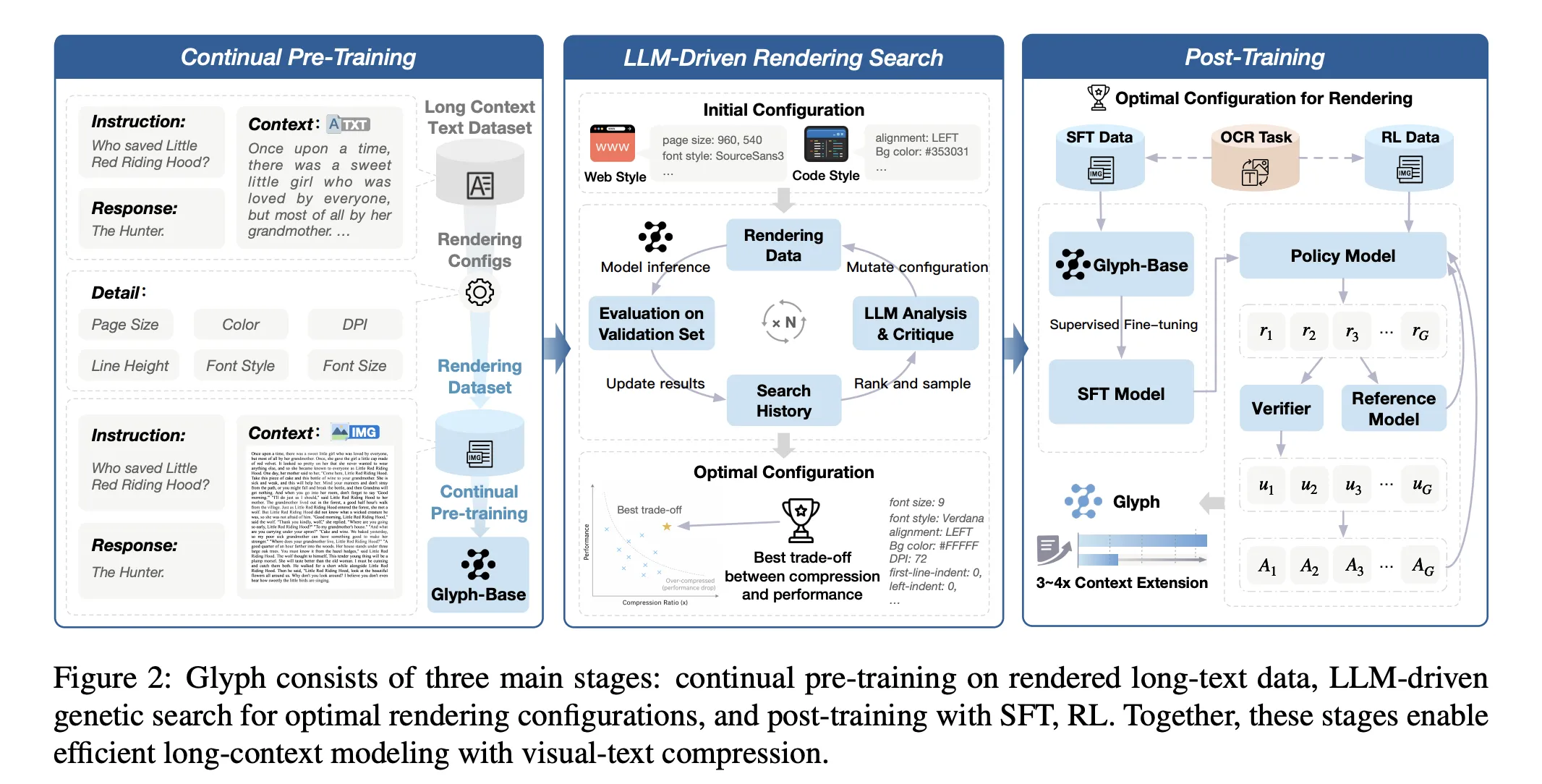

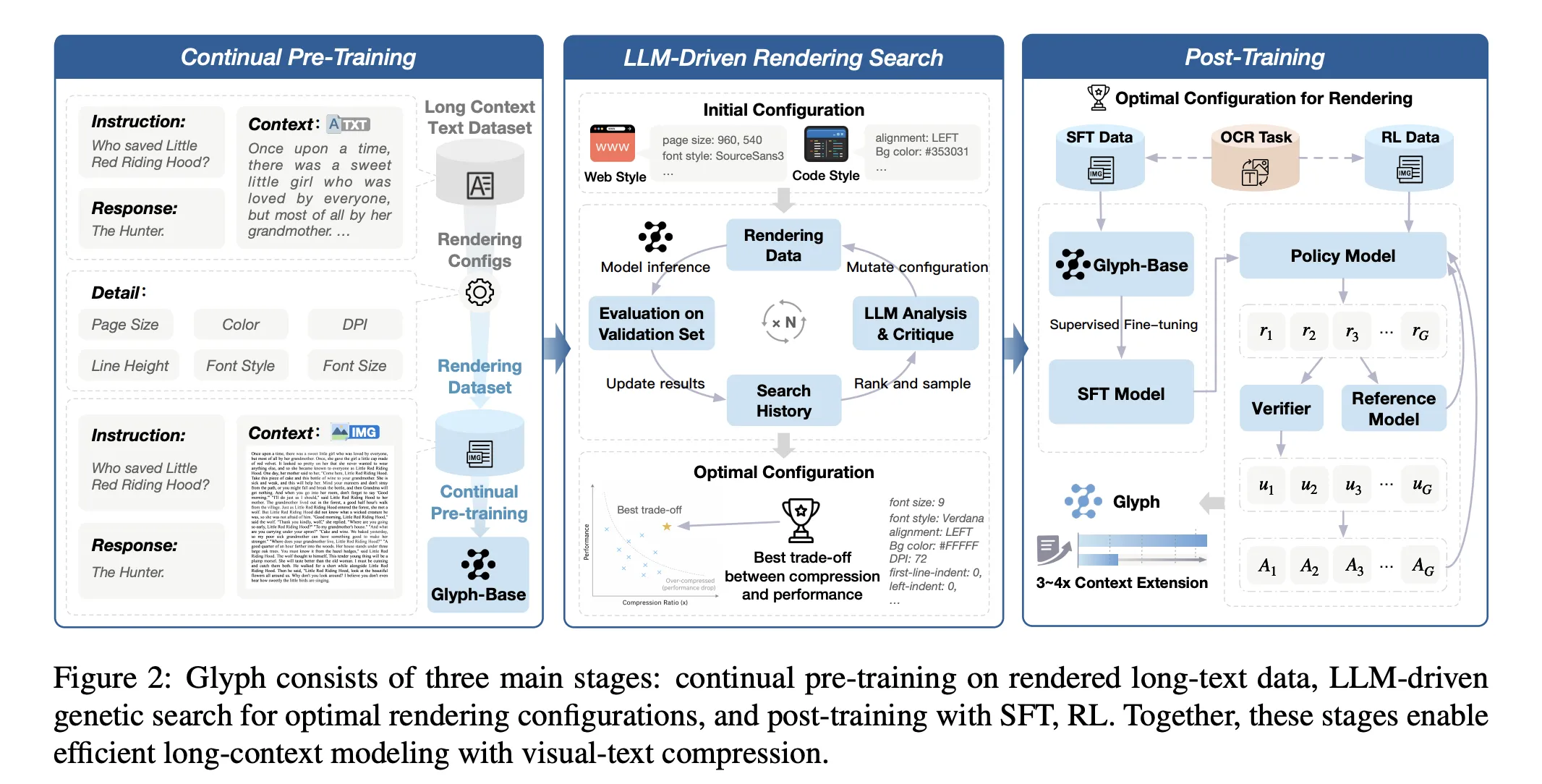

The method has three stages: continual pre-training, LLM-driven rendering search, and post-training. Continual pre-training exposes the VLM to large corpora of rendered long text with diverse typography and styles. The objective aligns visual and textual representations and transfers long-context skills from text tokens to visual tokens. The rendering search is a genetic loop driven by an LLM: it mutates page size, dpi, font family, font size, line height, alignment, indent, and spacing, and evaluates candidates on a validation set to optimize accuracy and compression jointly. Post-training uses supervised fine-tuning and reinforcement learning with Group Relative Policy Optimization (GRPO), plus an auxiliary OCR alignment task. The OCR loss improves character fidelity when fonts are small and spacing is tight.
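The article describes the rendering search only at a high level, so what follows is a minimal genetic-algorithm sketch under stated assumptions, not Glyph's implementation. The LLM that proposes mutations is swapped for uniform random mutation, and `toy_evaluate` is a made-up surrogate for running the VLM on a validation set. What carries over is the loop structure: a population of rendering configs, a fitness that rewards accuracy and compression jointly, and selection, crossover, and mutation across generations.

```python
import random
from dataclasses import astuple, dataclass, replace

# Hypothetical search space over a few of the knobs the article lists.
SEARCH_SPACE = {
    "dpi": [60, 72, 96, 120],
    "font_size": [7.0, 8.0, 9.0, 10.0, 12.0],
    "line_height": [1.0, 1.1, 1.2, 1.5],
}


@dataclass(frozen=True)
class Render:
    dpi: int
    font_size: float
    line_height: float


def toy_evaluate(cfg: Render) -> tuple[float, float]:
    """Made-up stand-in for scoring a config on a validation set with the VLM.
    Accuracy rises with legibility; compression falls with dpi, font size, line height."""
    legibility = (cfg.dpi / 120) * (cfg.font_size / 12)
    accuracy = min(1.0, legibility ** 0.5)
    compression = 4.0 * (72 / cfg.dpi) ** 2 * (9.0 / cfg.font_size) ** 2 / cfg.line_height
    return accuracy, compression


def fitness(cfg: Render, weight: float = 0.05) -> float:
    # Jointly reward accuracy and compression; the weight is an arbitrary choice.
    accuracy, compression = toy_evaluate(cfg)
    return accuracy + weight * compression


def mutate(cfg: Render, rng: random.Random) -> Render:
    # Glyph uses an LLM to propose edits; uniform random mutation is a simpler stand-in.
    field = rng.choice(list(SEARCH_SPACE))
    return replace(cfg, **{field: rng.choice(SEARCH_SPACE[field])})


def crossover(a: Render, b: Render, rng: random.Random) -> Render:
    # Take each field from one parent or the other at random.
    return Render(*(rng.choice(pair) for pair in zip(astuple(a), astuple(b))))


def search(generations: int = 20, pop_size: int = 12, seed: int = 0):
    rng = random.Random(seed)
    pop = [Render(*(rng.choice(v) for v in SEARCH_SPACE.values())) for _ in range(pop_size)]
    history = []  # best fitness per generation
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        history.append(fitness(pop[0]))
        parents = pop[: pop_size // 2]  # elitism: the fitter half survives unchanged
        children = [mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
                    for _ in range(pop_size - len(parents))]
        pop = parents + children
    return max(pop, key=fitness), history
```

Keeping the fitter half unchanged guarantees the best score never decreases between generations; in the real system each fitness call would be an expensive validation run, which is one reason an LLM proposing informed mutations can be more sample-efficient than random ones.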

Results, performance and efficiency

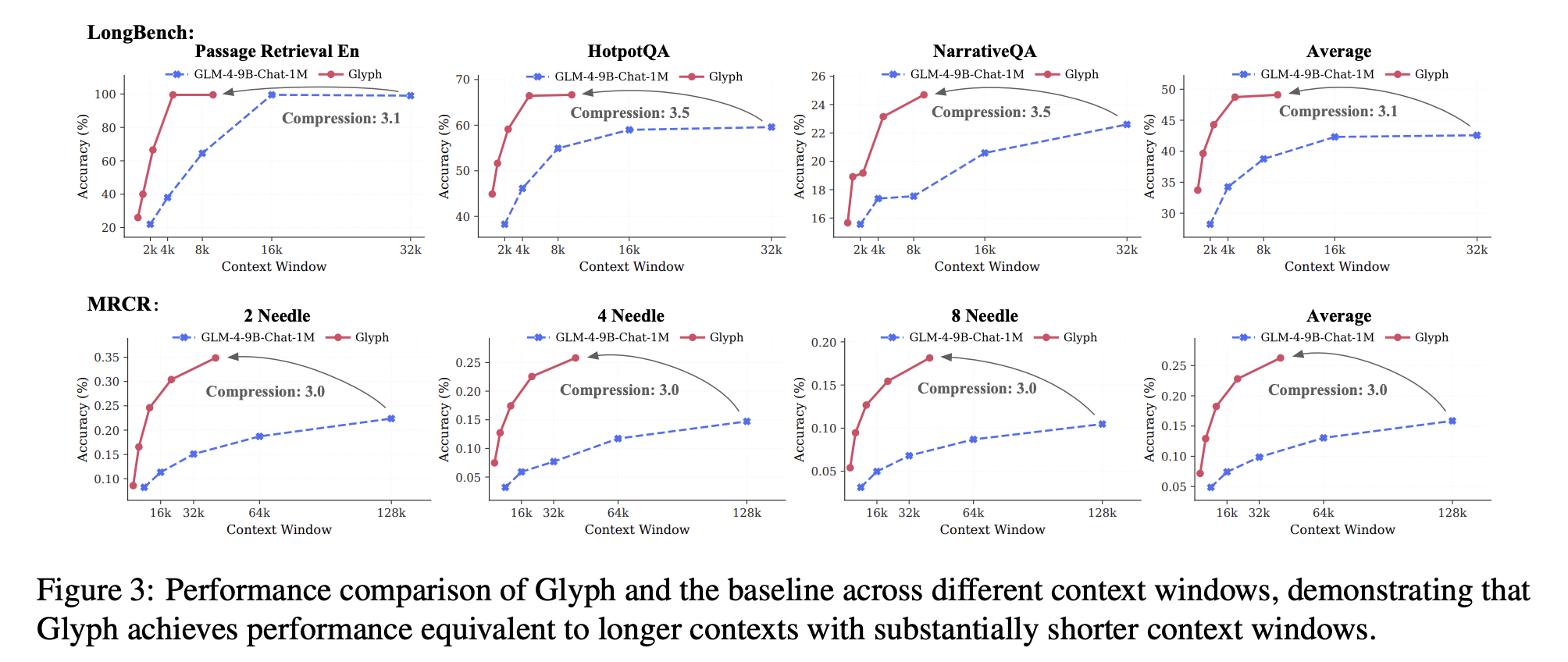

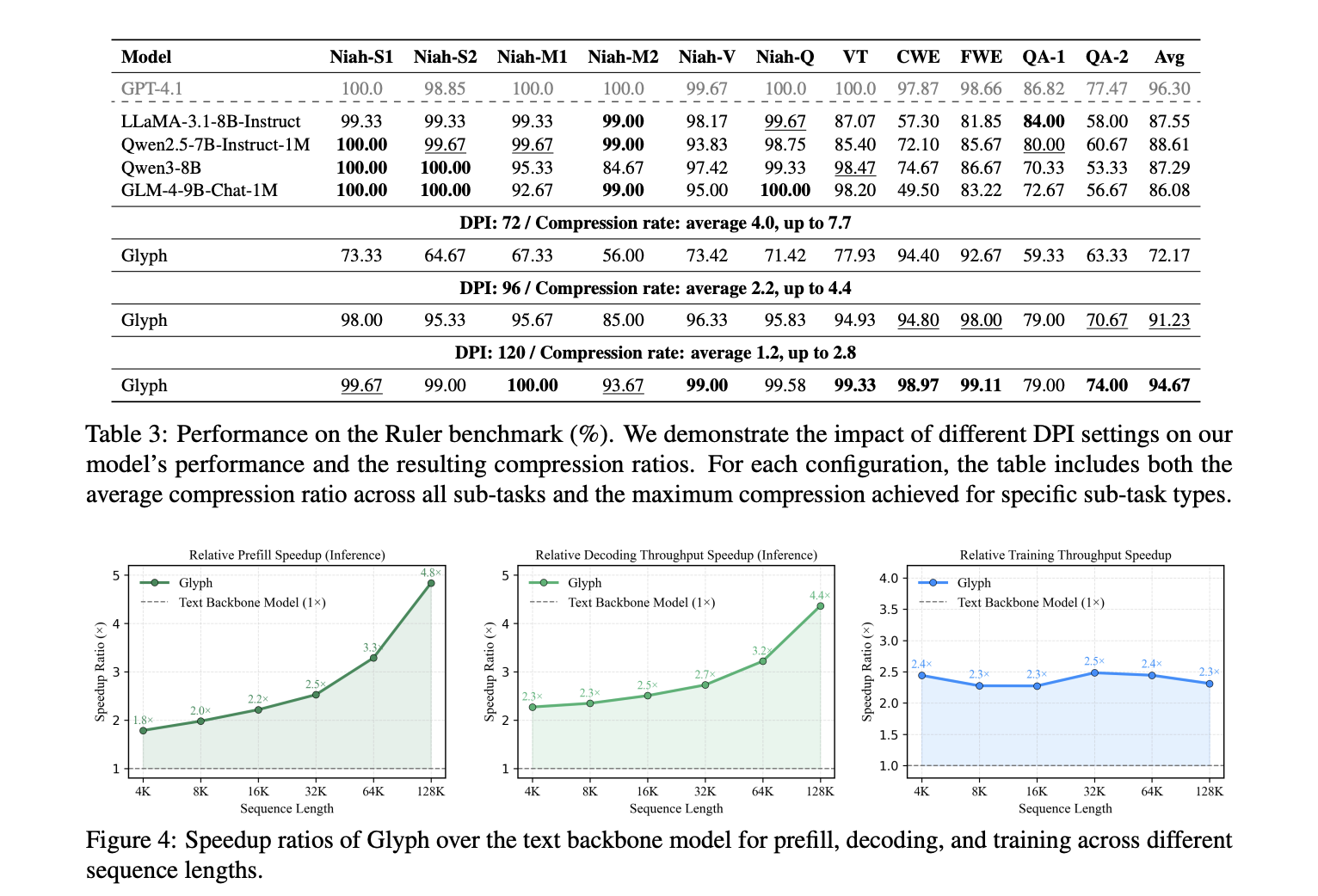

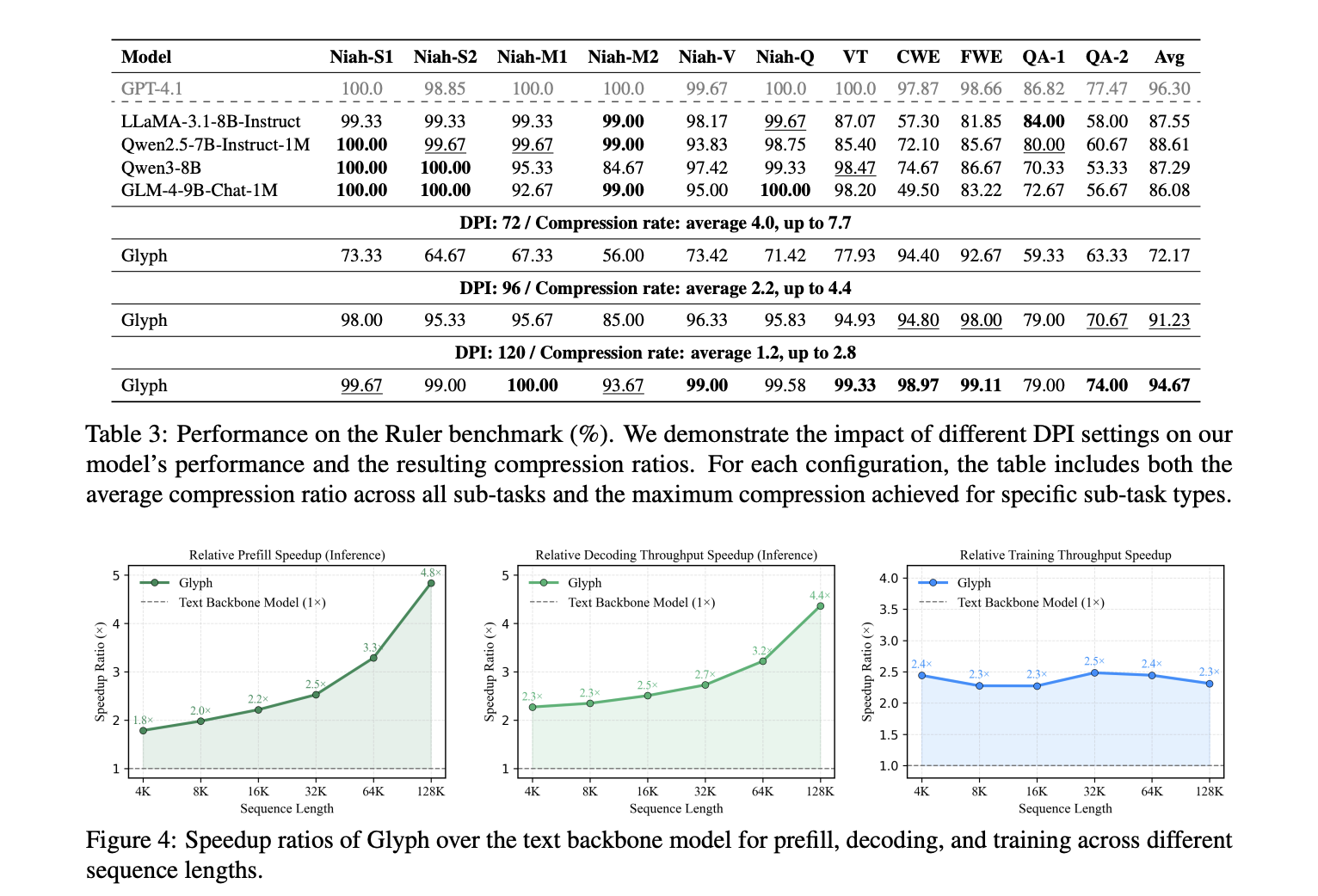

LongBench and MRCR establish accuracy and compression on long dialogue histories and document tasks. The model achieves an average effective compression ratio of about 3.3 on LongBench, with some tasks near 5, and about 3.0 on MRCR. These gains grow with longer inputs, since every visual token carries more characters. Reported speedups over the text backbone at 128K inputs are about 4.8 times for prefill, about 4.4 times for decoding, and about 2 times for supervised fine-tuning throughput. The Ruler benchmark confirms that higher dpi at inference time improves scores, since crisper glyphs help OCR and layout parsing. On Ruler, the research team reports an average compression of 4.0 (maximum 7.7 on specific subtasks) at dpi 72, 2.2 (maximum 4.4) at dpi 96, and 1.2 (maximum 2.8) at dpi 120. The 7.7 maximum belongs to Ruler, not MRCR.
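A quick way to see why compression falls as dpi rises: with a fixed page layout, pixel area, and with it the number of visual tokens, grows with the square of dpi. The sketch below applies that idealized rule, an assumption rather than anything the paper states, starting from the reported dpi-72 average.

```python
def scaled_compression(base_ratio: float, base_dpi: int, new_dpi: int) -> float:
    """Idealized assumption: visual tokens grow with pixel area (dpi squared),
    so the compression ratio shrinks by (base_dpi / new_dpi) ** 2."""
    return base_ratio * (base_dpi / new_dpi) ** 2


# Average Ruler compression ratios as reported in the article.
reported = {72: 4.0, 96: 2.2, 120: 1.2}

for dpi, observed in reported.items():
    predicted = scaled_compression(reported[72], 72, dpi)
    print(f"dpi {dpi}: area model {predicted:.2f}, reported {observed}")
```

The area rule gives 2.25 at dpi 96, close to the reported 2.2, but 1.44 at dpi 120, above the reported 1.2, so treat it as a first-order intuition for the dpi knob rather than an explanation of the measured numbers.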

So, what? Applications

Glyph benefits multimodal document understanding. Training on rendered pages improves performance on MMLongBench-Doc relative to a base visual model, which suggests that the rendering objective is a useful pretext task for real document tasks that include figures and layout. The main failure mode is sensitivity to aggressive typography: very small fonts and tight spacing degrade character accuracy, especially for rare alphanumeric strings. The research team excludes the UUID subtask on Ruler. The approach assumes server-side rendering and a VLM with strong OCR and layout priors.
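Character accuracy is commonly measured as character error rate (CER): the Levenshtein edit distance between the reference and the model's reading, divided by the reference length. The sketch below is a standard CER implementation, not Glyph's evaluation code; the UUID and its misreading are hypothetical, chosen to show the `1`/`l` and `0`/`o` confusions that small fonts tend to provoke.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]


def char_error_rate(reference: str, hypothesis: str) -> float:
    return levenshtein(reference, hypothesis) / max(len(reference), 1)


# Hypothetical UUID and a hypothetical misreading: '1' read as 'l', '0' read as 'o'.
ref = "3f2a9c1e-07b4-4d2e-9a51-8c6e0f1b2d7a"
hyp = "3f2a9cle-o7b4-4d2e-9a51-8c6e0f1b2d7a"
print(f"CER = {char_error_rate(ref, hyp):.3f}, exact match = {ref == hyp}")
```

Two confusions give a CER of about 5.6%, yet an exact-match answer is still wrong, which illustrates why UUID-style retrieval is so unforgiving under aggressive typography.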

Key Takeaways

- Glyph renders long text into images, then a vision-language model processes these pages. This reframes long-context modeling as a multimodal problem and preserves semantics while reducing tokens.

- The research team reports 3 to 4 times token compression with accuracy comparable to strong 8B text baselines on long-context benchmarks.

- Prefill speedup is about 4.8 times, decoding speedup is about 4.4 times, and supervised fine-tuning throughput is about 2 times higher, all measured at 128K inputs.

- The system uses continual pre-training on rendered pages, an LLM-driven genetic search over rendering parameters, then supervised fine-tuning and reinforcement learning with GRPO, plus an OCR alignment objective.

- Evaluations include LongBench, MRCR, and Ruler, with an extreme case showing a 128K-context VLM addressing 1M-token-level tasks. Code and the model card are public on GitHub and Hugging Face.
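GRPO's central idea is that, instead of training a value network as a baseline, the policy samples a group of responses per prompt and scores each one against the group's own reward statistics. A minimal sketch of only that advantage computation, assuming one scalar reward per response; the clipped policy-ratio objective and KL penalty of full GRPO are omitted, and the rewards in the example are hypothetical, since the article does not describe Glyph's reward design.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantages: standardize each sampled response's reward
    against the mean and standard deviation of its own group (no learned critic)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; some implementations use the sample std
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four responses sampled for the same prompt
# (1.0 = judged correct, 0.0 = incorrect).
advantages = group_relative_advantages([1.0, 0.0, 1.0, 0.0])
print([round(a, 3) for a in advantages])
```

Responses that beat their group's average get positive advantages and are reinforced; if every response in a group earns the same reward, all advantages are zero and that group contributes no learning signal.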

Glyph treats long-context scaling as visual-text compression: it renders long sequences into images and lets a VLM process them, reducing tokens while preserving semantics. The research team claims 3 to 4 times token compression with accuracy comparable to Qwen3 8B baselines, about 4 times faster prefilling and decoding, and about 2 times faster SFT throughput. The pipeline is disciplined: continual pre-training on rendered pages, an LLM-driven genetic rendering search over typography, then post-training. The approach is pragmatic for million-token workloads under extreme compression, yet it depends on OCR quality and typography choices, which remain tunable knobs. Overall, visual-text compression offers a concrete path to scaling long context while controlling compute and memory.

Check out the Paper, Weights and Repo.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.