If you sort a question right into a search engine, one thing has to determine which paperwork are literally related — and rank them. BM25 (Finest Matching 25), the algorithm powering engines like google like Elasticsearch and Lucene, has been the dominant reply to that query for many years.

It scores paperwork by taking a look at three issues: how usually your question phrases seem in a doc, how uncommon these phrases are throughout your complete assortment, and whether or not a doc is unusually lengthy. The intelligent half is that BM25 doesn’t reward key phrase stuffing — a phrase showing 20 instances doesn’t make a doc 20 instances extra related, because of time period frequency saturation. However BM25 has a basic blind spot: it solely matches the phrases you typed, not what you meant. Seek for “discovering related content material with out precise phrase overlap” and BM25 returns a clean stare.

That is precisely the hole that Retrieval-Augmented Era (RAG) with vector embeddings was constructed to fill — by matching that means, not simply key phrases. On this article, we’ll break down how every method works, the place every one wins, and why manufacturing methods more and more use each collectively.

How BM25 Works

At its core, BM25 assigns a relevance rating to each doc within the assortment for a given question, then ranks paperwork by that rating. For every time period in your question, BM25 asks three questions: How usually does this time period seem within the doc? How uncommon is that this time period throughout all paperwork? And is that this doc unusually lengthy? The ultimate rating is the sum of weighted solutions to those questions throughout all question phrases.

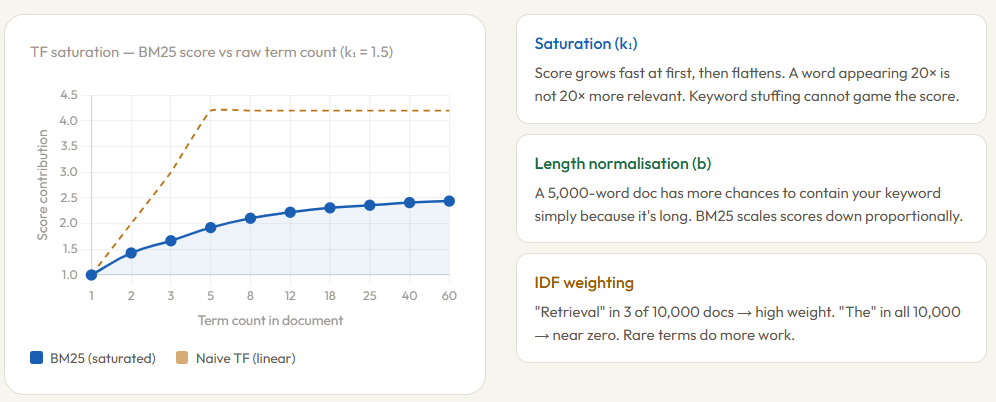

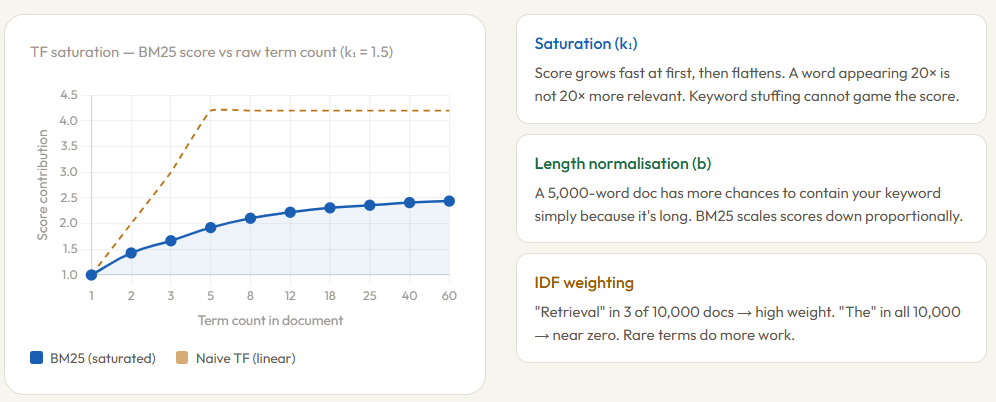

The time period frequency element is the place BM25 will get intelligent. Relatively than counting uncooked occurrences, it applies saturation — the rating grows shortly at first however flattens out as frequency will increase. A time period showing 5 instances contributes way more than a time period showing as soon as, however a time period showing 50 instances contributes barely a couple of showing 20 instances. That is managed by the parameter k₁ (sometimes set between 1.2 and a pair of.0). Set it low and the saturation kicks in quick; set it excessive and uncooked frequency issues extra. This single design alternative is what makes BM25 proof against key phrase stuffing — repeating a phrase 100 instances in a doc gained’t recreation the rating.

Size Normalization and IDF

The second tuning parameter, b (sometimes 0.75), controls how a lot a doc’s size is penalized. An extended doc naturally comprises extra phrases, so it has extra possibilities to incorporate your question time period — not as a result of it’s extra related, however just because it’s longer. BM25 compares every doc’s size to the common doc size within the assortment and scales the time period frequency rating down accordingly. Setting b = 0 disables this penalty totally; b = 1 applies full normalization.

Lastly, IDF (Inverse Doc Frequency) ensures that uncommon phrases carry extra weight than frequent ones. If the phrase “retrieval” seems in solely 3 out of 10,000 paperwork, it’s a powerful sign of relevance when matched. If the phrase “the” seems in all 10,000, matching it tells you nearly nothing. IDF is what makes BM25 take note of the phrases that really discriminate between paperwork. One vital caveat: as a result of BM25 operates purely on time period frequency, it has no consciousness of phrase order, context, or that means — matching “financial institution” in a question about finance and “financial institution” in a doc about rivers appears similar to BM25. That bag-of-words limitation is key, not a tuning drawback.

How is BM25 completely different from Vector Search

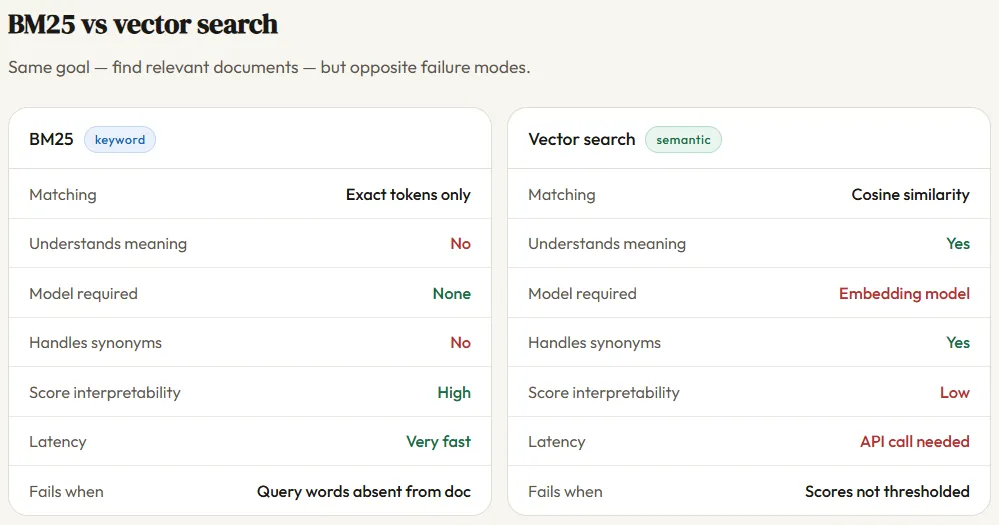

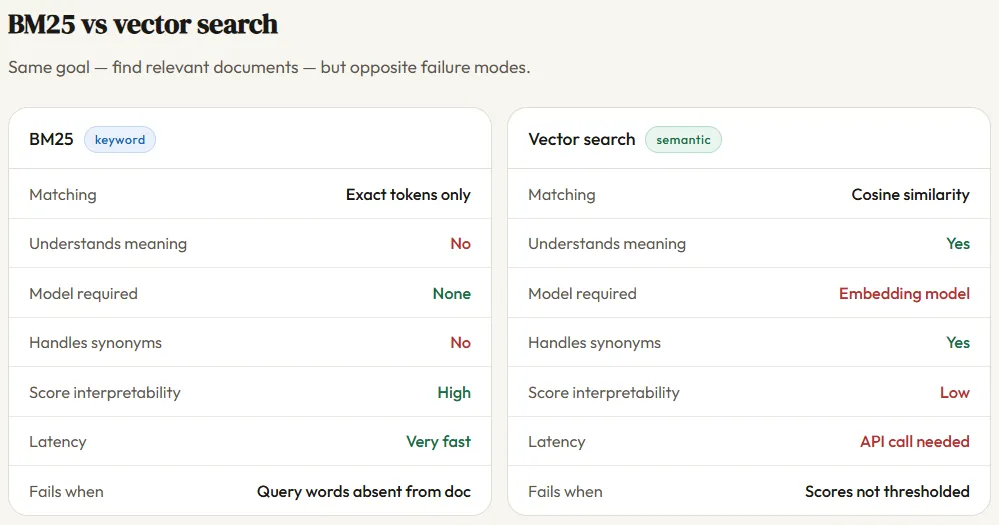

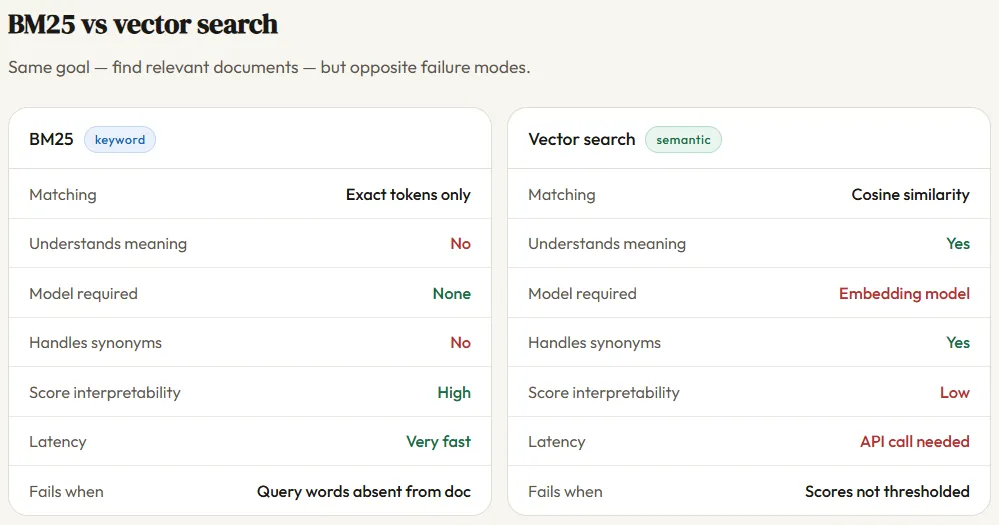

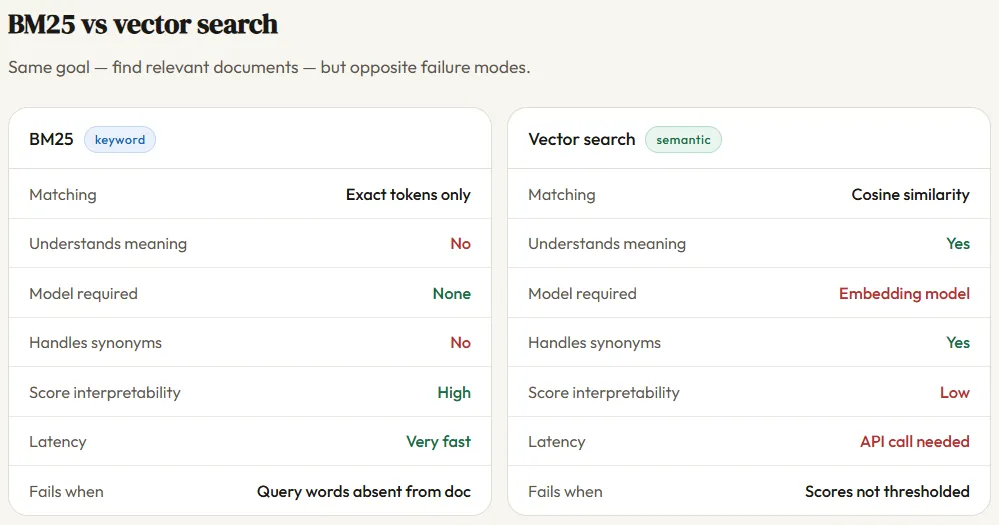

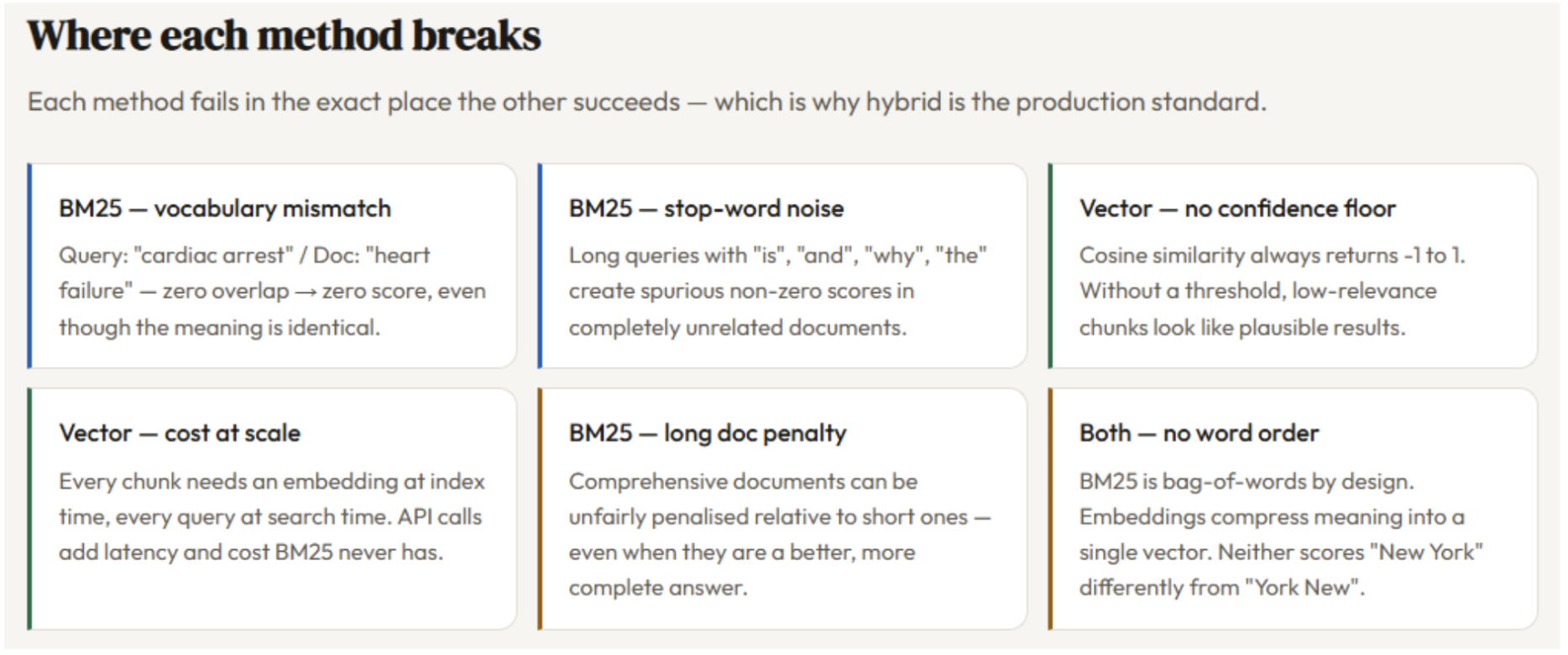

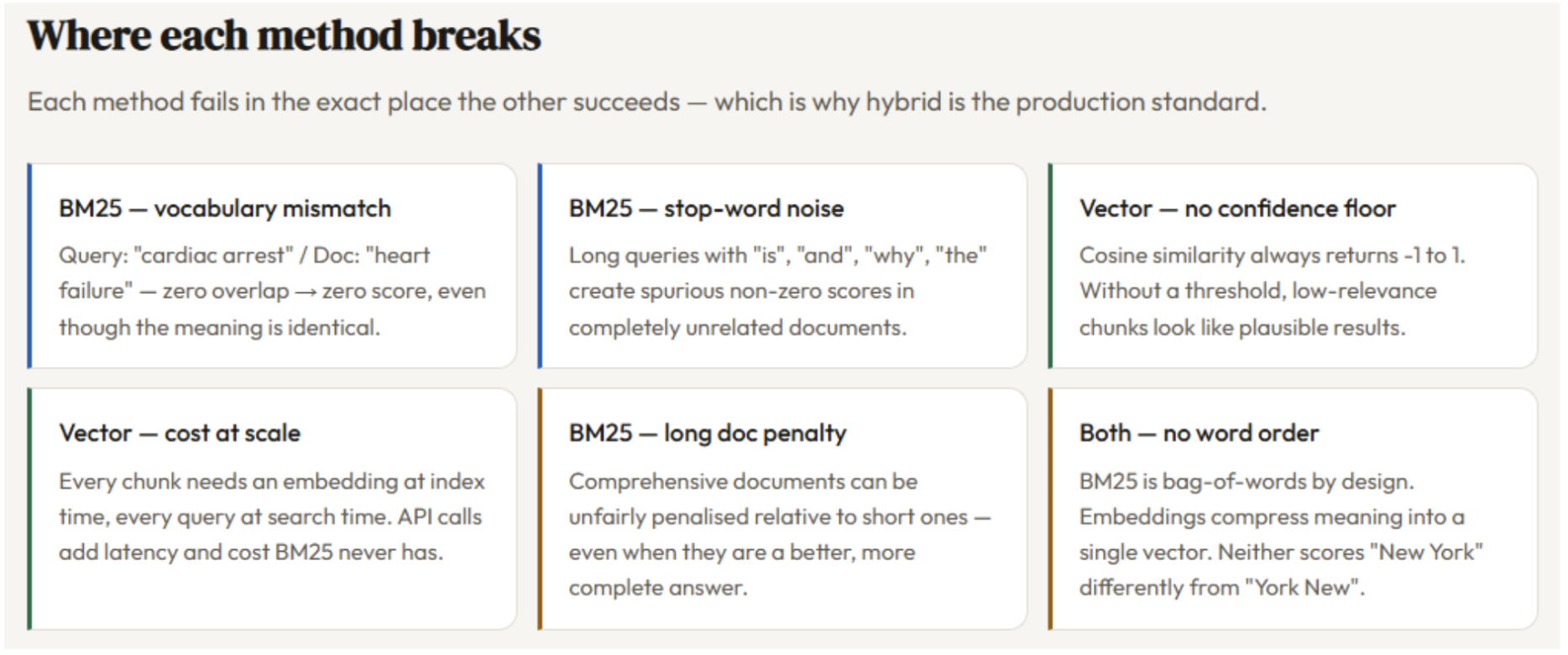

BM25 and vector search reply the identical query — which paperwork are related to this question? — however via essentially completely different lenses. BM25 is a keyword-matching algorithm: it appears for the precise phrases out of your question inside every doc, scores them primarily based on frequency and rarity, and ranks accordingly. It has no understanding of language — it sees textual content as a bag of tokens, not that means.

Vector search, in contrast, converts each the question and each doc into dense numerical vectors utilizing an embedding mannequin, then finds paperwork whose vectors level in the identical path because the question vector — measured by cosine similarity. This implies vector search can match “cardiac arrest” to a doc about “coronary heart failure” although not one of the phrases overlap, as a result of the embedding mannequin has discovered that these ideas stay shut collectively in semantic house.

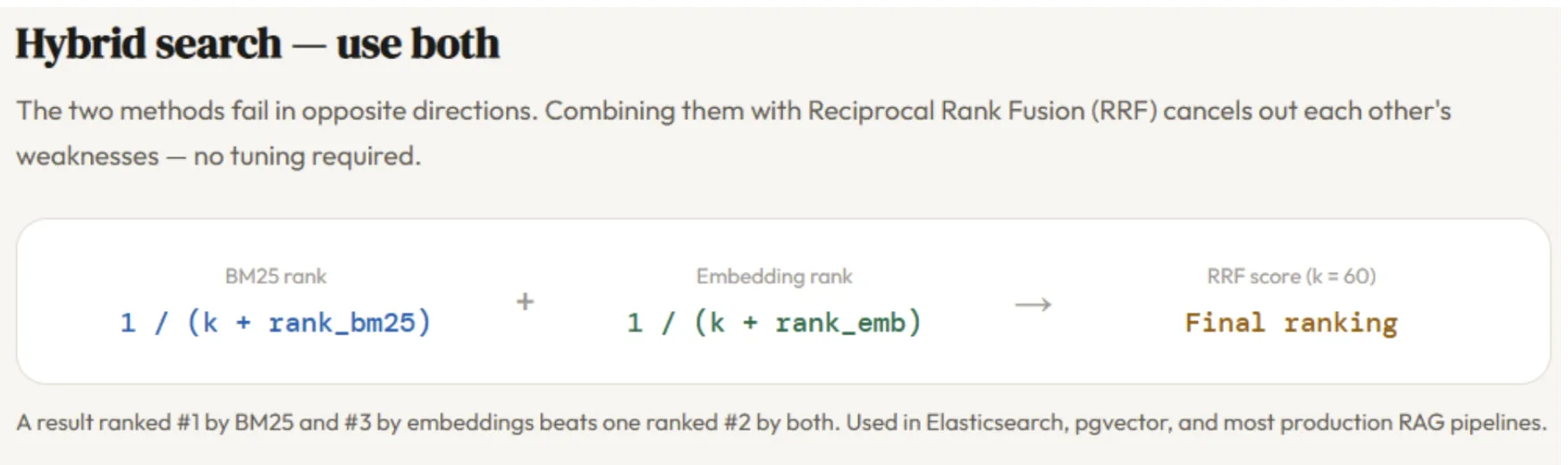

The tradeoff is sensible: BM25 requires no mannequin, no GPU, and no API name — it’s quick, light-weight, and totally explainable. Vector search requires an embedding mannequin at index time and question time, provides latency and price, and produces scores which might be tougher to interpret. Neither is strictly higher; they fail in reverse instructions, which is strictly why hybrid search — combining each — has turn out to be the manufacturing commonplace.

Evaluating BM25 and Vector Search in Python

Putting in the dependencies

pip set up rank_bm25 openai numpyimport math

import re

import numpy as np

from collections import Counter

from rank_bm25 import BM25Okapi

from openai import OpenAIimport os

from getpass import getpass

os.environ['OPENAI_API_KEY'] = getpass('Enter OpenAI API Key: ')Defining the Corpus

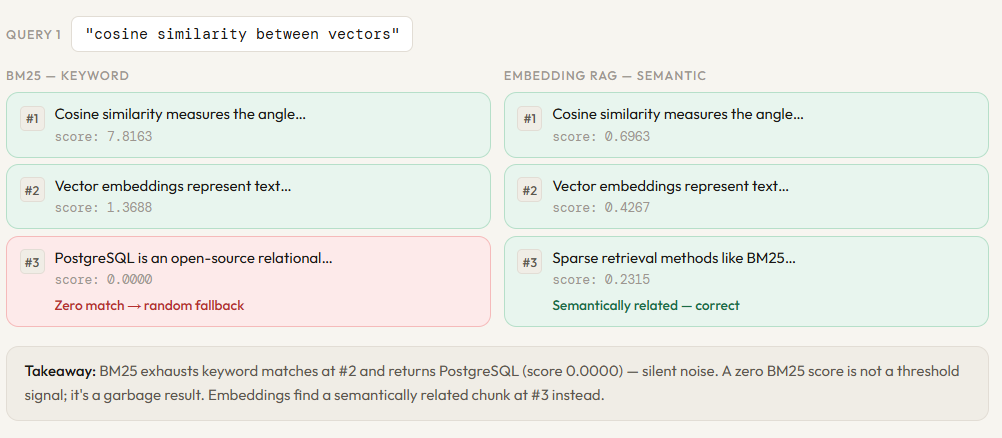

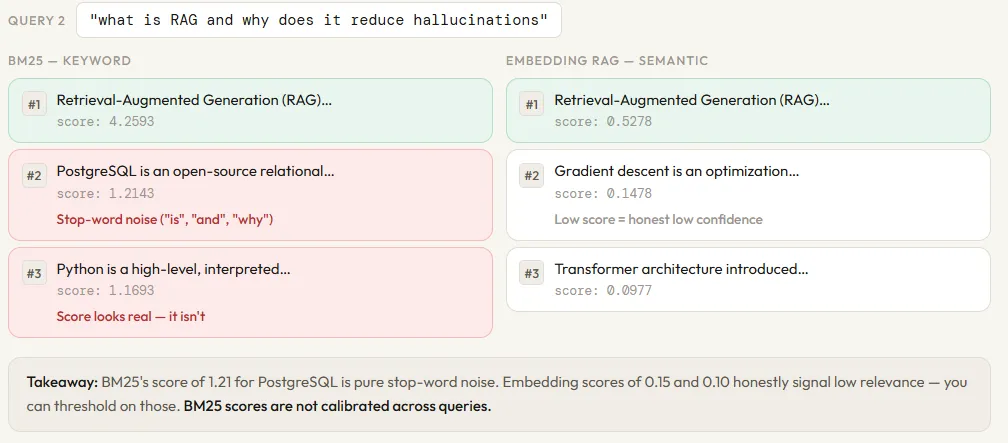

Earlier than evaluating BM25 and vector search, we’d like a shared data base to go looking over. We outline 12 brief textual content chunks protecting a spread of subjects — Python, machine studying, BM25, transformers, embeddings, RAG, databases, and extra. The subjects are intentionally diverse: some chunks are carefully associated (BM25 and TF-IDF, embeddings and cosine similarity), whereas others are utterly unrelated (PostgreSQL, Django). This selection is what makes the comparability significant — a retrieval technique that works nicely ought to floor the related chunks and ignore the noise.

This corpus acts as our stand-in for an actual doc retailer. In a manufacturing RAG pipeline, these chunks would come from splitting and cleansing precise paperwork — PDFs, wikis, data bases. Right here, we maintain them brief and hand-crafted so the retrieval behaviour is simple to hint and purpose about.

CHUNKS = [

# 0

"Python is a high-level, interpreted programming language known for its simple and readable syntax. "

"It supports multiple programming paradigms including procedural, object-oriented, and functional programming.",

# 1

"Machine learning is a subset of artificial intelligence that enables systems to learn from data "

"without being explicitly programmed. Common algorithms include linear regression, decision trees, and neural networks.",

# 2

"BM25 stands for Best Match 25. It is a bag-of-words retrieval function used by search engines "

"to rank documents based on the query terms appearing in each document. "

"BM25 uses term frequency and inverse document frequency with length normalization.",

# 3

"Transformer architecture introduced the self-attention mechanism, which allows the model to weigh "

"the importance of different words in a sentence regardless of their position. "

"BERT and GPT are both based on the Transformer architecture.",

# 4

"Vector embeddings represent text as dense numerical vectors in a high-dimensional space. "

"Similar texts are placed closer together. This allows semantic search -- finding documents "

"that mean the same thing even if they use different words.",

# 5

"TF-IDF stands for Term Frequency-Inverse Document Frequency. It reflects how important a word is "

"to a document relative to the entire corpus. Rare words get higher scores than common ones like 'the'.",

# 6

"Retrieval-Augmented Generation (RAG) combines a retrieval system with a language model. "

"The retriever finds relevant documents; the generator uses them as context to produce an answer. "

"This reduces hallucinations and allows the model to cite sources.",

# 7

"Django is a high-level Python web framework that encourages rapid development and clean, pragmatic design. "

"It includes an ORM, authentication system, and admin panel out of the box.",

# 8

"Cosine similarity measures the angle between two vectors. A score of 1 means identical direction, "

"0 means orthogonal, and -1 means opposite. It is commonly used to compare text embeddings.",

# 9

"Gradient descent is an optimization algorithm used to minimize a loss function by iteratively "

"moving in the direction of the steepest descent. It is the backbone of training neural networks.",

# 10

"PostgreSQL is an open-source relational database known for its robustness and support for advanced "

"SQL features like window functions, CTEs, and JSON storage.",

# 11

"Sparse retrieval methods like BM25 rely on exact keyword matches and fail when the query uses "

"synonyms or paraphrases not present in the document. Dense retrieval using embeddings handles "

"this by matching semantic meaning rather than surface form.",

]

print(f"Corpus loaded: {len(CHUNKS)} chunks")

for i, c in enumerate(CHUNKS):

print(f" [{i:02d}] {c[:75]}...")Constructing the BM25 Retriever

With the corpus outlined, we will construct the BM25 index. The method has two steps: tokenization and indexing. The tokenize operate lowercases the textual content and splits on any non-alphanumeric character — so “TF-IDF” turns into [“tf”, “idf”] and “bag-of-words” turns into [“bag”, “of”, “words”]. That is deliberately easy: BM25 is a bag-of-words mannequin, so there isn’t a stemming, no stopword elimination, and no linguistic preprocessing. Each phrase is handled as an impartial token.

As soon as each chunk is tokenized, BM25Okapi builds the index — computing doc lengths, common doc size, and IDF scores for each distinctive time period within the corpus. This occurs as soon as at startup. At question time, bm25_search tokenizes the incoming question the identical means, calls get_scores to compute a BM25 relevance rating for each chunk in parallel, then kinds and returns the top-k outcomes. The sanity examine on the backside runs a take a look at question to verify the index is working earlier than we transfer on to the embedding retriever.

def tokenize(textual content: str) -> record[str]:

"""Lowercase and break up on non-alphanumeric characters."""

return re.findall(r'w+', textual content.decrease())

# Construct BM25 index over the corpus

tokenized_corpus = [tokenize(chunk) for chunk in CHUNKS]

bm25 = BM25Okapi(tokenized_corpus)

def bm25_search(question: str, top_k: int = 3) -> record[dict]:

"""Return top-k chunks ranked by BM25 rating."""

tokens = tokenize(question)

scores = bm25.get_scores(tokens)

ranked = np.argsort(scores)[::-1][:top_k]

return [

{"chunk_id": int(i), "score": round(float(scores[i]), 4), "textual content": CHUNKS[i]}

for i in ranked

]

# Fast sanity examine

outcomes = bm25_search("how does BM25 rank paperwork", top_k=3)

print("BM25 take a look at -- question: 'how does BM25 rank paperwork'")

for r in outcomes:

print(f" [{r['chunk_id']}] rating={r['score']} {r['text'][:70]}...")Constructing the Embedding Retriever

The embedding retriever works in another way from BM25 at each step. As a substitute of counting tokens, it converts every chunk right into a dense numerical vector — a listing of 1,536 numbers — utilizing OpenAI’s text-embedding-3-small mannequin. Every quantity represents a dimension in semantic house, and chunks that imply related issues find yourself with vectors that time in related instructions, whatever the phrases they use.

The index construct step calls the embedding API as soon as per chunk and shops the ensuing vectors in reminiscence. That is the important thing value distinction from BM25: constructing the BM25 index is pure arithmetic by yourself machine, whereas constructing the embedding index requires one API name per chunk and produces vectors you might want to retailer. For 12 chunks that is trivial; at one million chunks, this turns into an actual infrastructure determination.

At question time, embedding_search embeds the incoming question utilizing the identical mannequin — that is vital, the question and the chunks should stay in the identical vector house — then computes cosine similarity between the question vector and each saved chunk vector. Cosine similarity measures the angle between two vectors: a rating of 1 means similar path, 0 means utterly unrelated, and unfavorable values imply reverse that means. The chunks are then ranked by this rating and the top-k are returned. The identical sanity examine question from the BM25 part runs right here too, so you possibly can see the primary direct comparability between the 2 approaches on similar enter.

EMBED_MODEL = "text-embedding-3-small"

def get_embedding(textual content: str) -> np.ndarray:

response = consumer.embeddings.create(mannequin=EMBED_MODEL, enter=textual content)

return np.array(response.knowledge[0].embedding)

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:

return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Embed all chunks as soon as (that is the "index construct" step in RAG)

print("Constructing embedding index... (one API name per chunk)")

chunk_embeddings = [get_embedding(chunk) for chunk in CHUNKS]

print(f"Performed. Every embedding has {len(chunk_embeddings[0])} dimensions.")

def embedding_search(question: str, top_k: int = 3) -> record[dict]:

"""Return top-k chunks ranked by cosine similarity to the question embedding."""

query_emb = get_embedding(question)

scores = [cosine_similarity(query_emb, emb) for emb in chunk_embeddings]

ranked = np.argsort(scores)[::-1][:top_k]

return [

{"chunk_id": int(i), "score": round(float(scores[i]), 4), "textual content": CHUNKS[i]}

for i in ranked

]

# Fast sanity examine

outcomes = embedding_search("how does BM25 rank paperwork", top_k=3)

print("nEmbedding take a look at -- question: 'how does BM25 rank paperwork'")

for r in outcomes:

print(f" [{r['chunk_id']}] rating={r['score']} {r['text'][:70]}...")Facet-by-Facet Comparability Operate

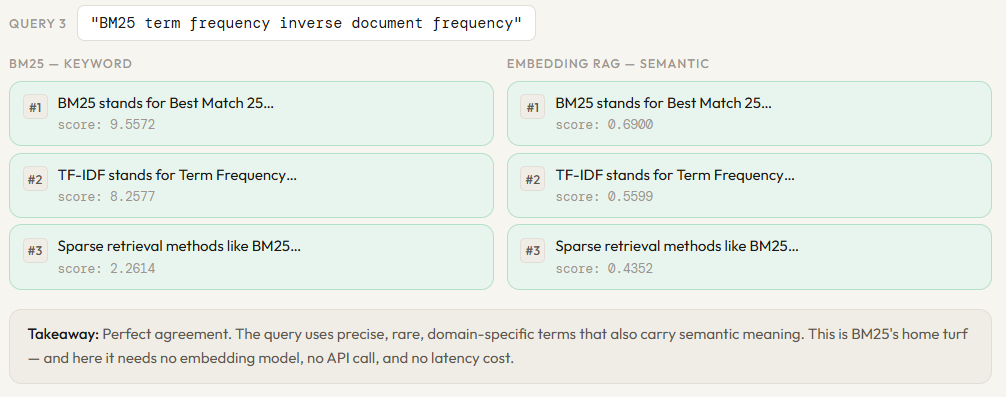

That is the core of the experiment. The examine operate runs the identical question via each retrievers concurrently and prints the leads to a two-column structure — BM25 on the left, embeddings on the appropriate — so the variations are instantly seen on the identical rank place.

def examine(question: str, top_k: int = 3):

bm25_results = bm25_search(question, top_k)

embed_results = embedding_search(question, top_k)

print(f"n{'═'*70}")

print(f" QUERY: "{question}"")

print(f"{'═'*70}")

print(f"n {'BM25 (key phrase)':<35} {'Embedding RAG (semantic)'}")

print(f" {'─'*33} {'─'*33}")

for rank, (b, e) in enumerate(zip(bm25_results, embed_results), 1):

b_preview = b['text'][:55].exchange('n', ' ')

e_preview = e['text'][:55].exchange('n', ' ')

identical = "⬅ identical" if b['chunk_id'] == e['chunk_id'] else ""

print(f" #{rank} [{b['chunk_id']:02d}] {b['score']:.4f} {b_preview}...")

print(f" [{e['chunk_id']:02d}] {e['score']:.4f} {e_preview}... {identical}")

print()examine("BM25 time period frequency inverse doc frequency")

examine("what's RAG and why does it scale back hallucinations")

examine("cosine similarity between vectors")

Try the Full Pocket book right here. Additionally, be happy to comply with us on Twitter and don’t overlook to hitch our 120k+ ML SubReddit and Subscribe to our E-newsletter. Wait! are you on telegram? now you possibly can be a part of us on telegram as nicely.