Yann LeCun’s AMI Labs And The Rise Of AI World Models

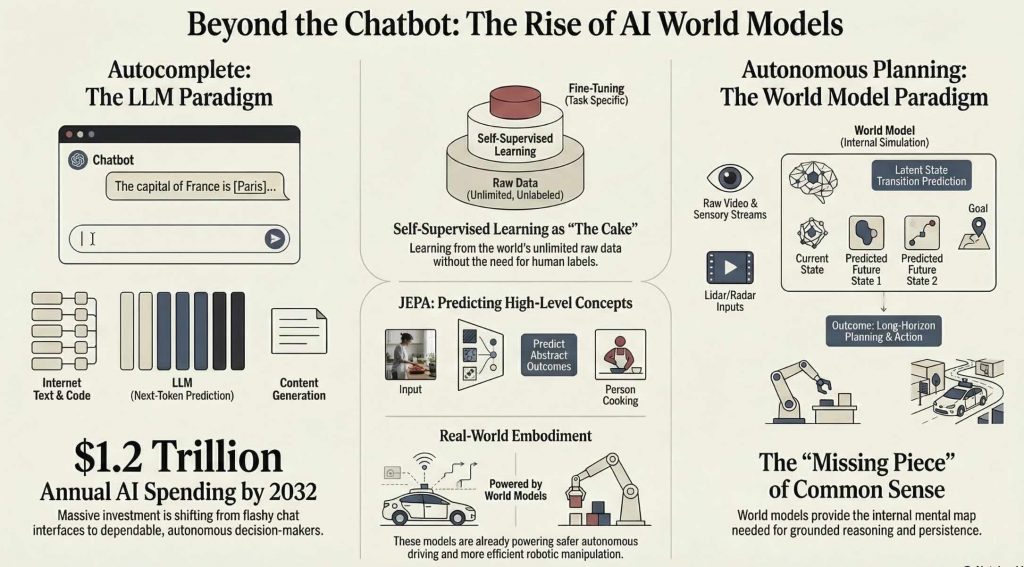

Most AI tools feel impressive in a demo, then disappoint once they hit real workflows. McKinsey projects generative AI spending could reach 1.2 trillion dollars a year by 2032, yet many deployed systems still act like autocomplete engines rather than reliable decision makers. This widening gap between flashy chat interfaces and dependable autonomy is exactly what Yann LeCun’s new venture, AMI Labs, is built to close. By centering its research on AI world models, internal simulations that let machines predict, plan, and act, AMI Labs represents a deliberate break from the pure scale mindset of today’s large language model leaders. If you work in AI, robotics, product, or strategy, understanding this shift is fast becoming a career advantage as AI moves from chat to agents that operate in both physical and digital environments.

Key Takeaways

- AMI Labs, founded by Yann LeCun, is focused on building autonomous machine intelligence based on AI world models rather than simply scaling large language models.

- World models give AI systems internal simulations of how the environment changes, which supports prediction, planning, and common sense about physical and social dynamics.

- LeCun’s approach builds on self-supervised learning and architectures like Joint Embedding Predictive Architectures, which differ technically from autoregressive token prediction.

- Real progress requires not only clever architectures but also practical work on data, compute, evaluation, and governance, where many existing articles gloss over hard tradeoffs.

Why AMI Labs Signals A Shift Beyond Pure Language Models

AMI Labs, short for Autonomous Machine Intelligence Labs, was founded by Yann LeCun after more than a decade leading AI research at Meta and a long academic career at New York University. LeCun shared a Turing Award in 2018 with Geoffrey Hinton and Yoshua Bengio for foundational work on deep learning, notably convolutional neural networks that now underpin most computer vision systems. With AMI Labs, he is explicitly stepping outside the comfort zone of pure language modeling to pursue agents that can understand and act in the world. In his public talks, he argues that real intelligence requires an internal model of the environment, not only a large memory of text scraped from the internet. That argument positions AMI Labs in a different conceptual category than labs that focus primarily on ever larger chat models.

To see how significant this is for your roadmap, contrast AMI Labs with the current frontier model ecosystem. Names like OpenAI, Google DeepMind, Anthropic, xAI, and Cohere dominate discussion around frontier models. These labs have secured multibillion-dollar partnerships with cloud and enterprise vendors to train and deploy large language models and multimodal systems. AMI Labs emerges as a more research-focused organization centered on one overarching question: how to build a system that learns a predictive, causal model of the world and uses it for planning. Where OpenAI might emphasize deployed applications such as Copilot-style coding assistants, AMI Labs frames success as an agent that can learn from video, interact with environments, and generalize across tasks with minimal supervision. In my experience, that difference in objective matters a lot for architectural choices, data pipelines, and eventually business models.

Investor interest in alternative approaches has grown as the cost of scaling LLMs climbs and concerns about marginal returns appear. Reports from the Stanford AI Index show training compute for frontier models growing by orders of magnitude roughly every one or two years, alongside steep increases in training cost estimates. This economic pressure creates room for architectures that use fewer human labels, are more sample efficient, or leverage self-supervised learning from raw sensory streams. AMI Labs positions world models as one answer to this pressure, promising systems that can learn predictively from unlabeled data and then reuse that knowledge across many downstream tasks. For readers planning careers or investments, it is worth noticing that such a strategy is not merely academic; it responds directly to bottlenecks in data, compute, and reliability that practitioners face daily.

What Are AI World Models And Why Do They Matter?

Before you can assess AMI Labs as a bet, you need a clear picture of what a world model is and where it fits alongside large language models. An AI world model is an internal representation that lets an AI system predict how the world will change when it or others act. Instead of simply mapping inputs directly to outputs, a world model learns the dynamics of its environment so it can imagine future states, evaluate possible actions, and reason about cause and effect. This internal simulation can be learned from raw data such as video, sensor readings, or interaction logs, often using self-supervised objectives that require no human labels. Once trained, the world model supports planning, long-horizon control, and more robust generalization to new situations. In simple terms, it gives an artificial agent a kind of mental model of its surroundings.

The concept is not new in artificial intelligence or cognitive science, where researchers have long argued that intelligent behavior depends on internal models of the environment. Stuart Russell, coauthor of the classic textbook “Artificial Intelligence: A Modern Approach”, has emphasized that agents need a model of the world to predict consequences of actions and make rational decisions. Jürgen Schmidhuber and David Ha popularized the phrase “world models” in a 2018 paper, where they trained a system to learn a compact representation of an environment and then used that representation for control in a simple car racing task. Their approach showed that a learned world model could support planning and control entirely in a latent space, reducing the need to interact directly with a complex simulator. These ideas form an important backdrop for LeCun’s more recent work, which integrates them with advances in self-supervised representation learning. For a deeper foundation on why world models matter, resources like this guide to AI world models can be a useful complement to the research literature.

Yann LeCun frames world models as the missing piece between pattern recognition and common sense. He has argued in many talks that current deep learning systems excel at perception tasks such as image classification or speech recognition, while reinforcement learning methods handle trial and error learning in narrow domains. In his 2022 essay “A Path Towards Autonomous Machine Intelligence”, he writes that the objective is to learn a model that captures the regularities of the world, then use it for reasoning and planning. He often summarizes self-supervised learning as “the cake” of AI, with supervised learning as “the icing” and reinforcement learning as “the cherry on top”. In that view, world models are the cake made of predictive understanding, and downstream tasks are decorations that exploit this deep internal knowledge of how the world behaves.

Inside LeCun’s Vision: From Self-Supervision To Joint Embedding Predictive Architectures

To understand what makes AMI Labs distinctive, it helps to unpack LeCun’s technical vision, especially his focus on self-supervised learning and Joint Embedding Predictive Architectures, often called JEPAs. Self-supervised learning refers to training objectives where a model predicts parts of its input from other parts, so it can learn structure from raw data without explicit labels. For example, a vision model might predict missing patches in an image, or a video model might predict future frames from past frames. LeCun and colleagues at Meta have pushed this idea aggressively for vision and multimodal models, arguing that self-supervision scales better than manually labeled datasets and captures more general features of the environment. In their view, self-supervision is the main engine for learning world models, because the world itself provides an unlimited stream of supervisory signals.
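As a minimal sketch of how self-supervision manufactures its own training signal, the Python snippet below slices an unlabeled sequence into (past frames, next frame) pairs. The function name `make_prediction_pairs` and the `context_len` parameter are illustrative assumptions, and the "frames" are plain numbers standing in for images.

```python
def make_prediction_pairs(frames, context_len=2):
    """Turn an unlabeled sequence into (past frames, next frame) training pairs.

    Hypothetical helper for illustration: the targets come from the data
    stream itself, so no human annotation is needed.
    """
    return [
        (frames[i:i + context_len], frames[i + context_len])
        for i in range(len(frames) - context_len)
    ]


# Toy "video": each number stands in for one frame.
toy_frames = [0.0, 1.0, 2.0, 3.0, 4.0]
pairs = make_prediction_pairs(toy_frames, context_len=2)
```

A model trained on such pairs is scored on how well it predicts each held-back frame, which is the sense in which the world itself supplies the supervision.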

JEPAs implement a specific way of doing this predictive learning. Rather than generating pixels or tokens one by one, a JEPA encodes two observations into a joint embedding space and learns to predict one representation from the other. In practical terms, a JEPA can take a current sensory state and a target future state, then learn to bring their embeddings into alignment if the transition is plausible. This differs from autoregressive language models that predict the next token at the output layer using a softmax over the vocabulary. By focusing on predicting high-level representations instead of raw outputs, JEPAs can ignore irrelevant details and concentrate on the underlying factors of variation. LeCun argues that this makes them more suitable for learning abstract world dynamics, for example understanding that an object persists when it moves behind another object.
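The idea of predicting in embedding space can be sketched in a few lines of Python. This is a toy illustration under strong assumptions, not LeCun’s or Meta’s implementation: the encoder and predictor are fixed linear maps, the names `encode`, `predict`, and `jepa_loss` are hypothetical, and the extra machinery real systems need to avoid collapsed (constant) embeddings is left out.

```python
def encode(enc_weights, observation):
    """Linear encoder: maps a raw observation vector to an embedding."""
    return [sum(w * x for w, x in zip(row, observation)) for row in enc_weights]


def predict(pred_weights, embedding):
    """Linear predictor that works purely in embedding space."""
    return [sum(w * z for w, z in zip(row, embedding)) for row in pred_weights]


def jepa_loss(enc_weights, pred_weights, context_obs, target_obs):
    """Squared distance between the predicted and the actual target embedding."""
    z_context = encode(enc_weights, context_obs)
    z_target = encode(enc_weights, target_obs)  # treated as a fixed target
    z_pred = predict(pred_weights, z_context)
    return sum((p - t) ** 2 for p, t in zip(z_pred, z_target))


# Hypothetical encoder that keeps the first two features and drops the third,
# which plays the role of irrelevant detail such as pixel noise.
ENCODER = [[1.0, 0.0, 0.0],
           [0.0, 1.0, 0.0]]
IDENTITY_PREDICTOR = [[1.0, 0.0],
                      [0.0, 1.0]]

# The two observations differ only in the ignored third feature.
loss_same_content = jepa_loss(ENCODER, IDENTITY_PREDICTOR,
                              [1.0, 2.0, 5.0], [1.0, 2.0, -7.0])
# Here the first feature, which the encoder keeps, has changed.
loss_changed_content = jepa_loss(ENCODER, IDENTITY_PREDICTOR,
                                 [1.0, 2.0, 5.0], [3.0, 2.0, 5.0])
```

In this toy setup the first loss is zero because the observations differ only in a dimension the encoder discards, while the second is nonzero because a retained feature changed. A pixel-level reconstruction loss would penalize both cases.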

In his public lectures, LeCun has been critical of pure generative models that attempt to synthesize every detail of their output distribution. Such models have been described as “blurry JPEGs of the web”, a phrase popularized by writer Ted Chiang, meaning that they compress vast internet content into a lossy approximation that can be sampled for plausible text or images. From LeCun’s perspective, such models are wasteful because they allocate parameters to reproduce superficial details rather than learning the deep regularities of the world. He proposes that world models should instead be predictive but not fully generative, capturing what matters for control and reasoning while discarding surface noise. In my experience, this focus on sufficiency for action, rather than perfect generative fidelity, matches how engineers design simulators in robotics, where simplified physics models often outperform photorealistic renderers for planning.

How AI World Models Actually Work In Practice

If you want to put this into practice in a lab or product setting, it helps to visualize the full perception-action loop. Under the hood, a world model system usually consists of several interacting components. One component encodes raw observations such as images, depth maps, text descriptions, or sensor readings into a compact latent representation. Another component, often called the dynamics model, predicts how that latent state will evolve given an action or external event. A planning module uses the dynamics model to simulate different action sequences, roll them forward, and evaluate which sequence is likely to achieve a goal. Finally, a policy or controller converts the chosen plan into low-level actions, such as motor commands for a robotic arm or API calls for a software agent. The world model sits at the center, linking perception to prediction and control.
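The loop described above can be sketched end to end on a toy problem. Everything here is an assumption made for illustration: the environment is a one-dimensional track, the "encoder" just reads the position, the dynamics model is hand-written rather than learned, and the planner exhaustively enumerates short action sequences instead of using a smarter search.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # step left, stay, step right


def encode(observation):
    """Encoder: compress the observation into a latent state (here, a float)."""
    return float(observation["position"])


def dynamics(latent, action):
    """Dynamics model: predict the next latent state. Learned in a real system."""
    return latent + action


def plan(latent, goal, horizon=3):
    """Planner: imagine every action sequence in latent space, keep the cheapest."""
    best_sequence, best_cost = None, float("inf")
    for sequence in product(ACTIONS, repeat=horizon):
        z, cost = latent, 0.0
        for action in sequence:
            z = dynamics(z, action)   # imagined step, no real interaction
            cost += abs(goal - z)     # accumulated distance to the goal
        if cost < best_cost:
            best_sequence, best_cost = sequence, cost
    return best_sequence


def run_episode(start, goal, steps=10):
    """Controller: execute only the first planned action, then replan."""
    position = start
    for _ in range(steps):
        latent = encode({"position": position})
        action = plan(latent, goal)[0]
        position += action            # real environment step
    return position


final_position = run_episode(start=0, goal=4)
```

Replanning after every real step is the basic pattern behind model predictive control. In this sketch the model matches the environment exactly, so the agent reaches the goal and stays there; with a learned and imperfect model, the replanning step is what lets the agent correct for prediction errors.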

In research, several concrete systems embody this pattern. The Dreamer family of algorithms, developed by Danijar Hafner and colleagues, trains a world model from raw visual input and then learns a policy from trajectories imagined in the learned latent space. Dreamer and its successors have shown strong performance on continuous control tasks in the DeepMind Control Suite and Atari environments, often matching or surpassing model-free reinforcement learning methods with far fewer environment interactions. DeepMind’s MuZero, introduced by Julian Schrittwieser and coworkers, learns a model of environment dynamics that predicts rewards, values, and policies rather than exact observations, and uses that model with tree search to achieve superhuman performance in Go, chess, and Atari. These systems illustrate that learned world models can support long-horizon planning in both discrete and continuous domains.

What many people underestimate is how much engineering goes into making such systems stable and efficient. Training a world model involves selecting appropriate architectures for encoders and dynamics modules, choosing prediction horizons, balancing reconstruction and reward prediction losses, and tuning exploration strategies. Data collection becomes part of the design, since the agent’s policy influences what parts of the environment it observes and therefore what the model learns. Evaluation also becomes more complex, because researchers must measure not only task performance but also model accuracy, sample efficiency, and robustness to distribution shifts. In applied settings, teams often combine classical control, hand-engineered simulators like MuJoCo, and learned components to get practical performance. A common mistake I see is teams trying to replace everything with a single neural model before they have solid baselines and diagnostics.

World Models Versus Large Language Models: More Than A Size Debate

Once you see how world models are structured, the contrast with today’s large language models becomes much clearer. Many public conversations reduce the difference between AMI Labs and other frontier labs to a simple slogan, world models versus bigger LLMs. That framing misses the deeper architectural and epistemic differences between the approaches. Large language models such as GPT-4, Claude, or Gemini are autoregressive transformers trained to predict the next token given a sequence of previous tokens. Their training data consists primarily of text, code, and some multimodal inputs, so their understanding of the world is filtered through language. They excel at pattern completion, style imitation, and short-term reasoning that can be expressed as token manipulations. They lack an explicit representation of persistent objects, physical dynamics, or causal structure, which makes long-horizon planning and grounded interaction hard.

World models, in contrast, are typically structured around latent states that represent the environment at a given time, along with transition functions that map states and actions to future states. Planning algorithms can then operate directly in this latent space, searching over action sequences and evaluating future outcomes using the dynamics model. This separation between model, planner, and policy mirrors decades of work in control theory and robotics, where engineers use simulators and model predictive control to steer complex systems. David Silver, known for his work on AlphaGo, has emphasized that model-based approaches can achieve greater data efficiency and long-term reasoning, at the cost of more complex modeling. In many world model systems, textual information is just one sensory channel, alongside vision, proprioception, or environmental state variables.

There is also an important difference in learning objectives. Autoregressive LLMs optimize a likelihood objective over token sequences, effectively trying to be as good as possible at next token prediction. World model approaches inspired by LeCun’s JEPA vision focus on predicting abstract features of future observations, often with contrastive or embedding-based losses. This means they can ignore precise wording or pixel-level detail and still learn that, for example, a ball continues in motion unless acted upon, or that a coffee cup does not disappear when placed behind a book. LeCun has argued that such predictive learning of latent variables is more aligned with how humans and animals learn from continuous sensory streams. In practice, hybrid architectures are likely to emerge, where LLMs interface with world models, but the key point is that the future may not belong to a single flat architecture that simply grows in parameter count.
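To make the difference in objectives concrete, here is a small Python sketch that scores the same "mistake" under both kinds of loss. The two-word vocabulary, the logits, and the embedding table are invented for illustration, and the two functions are generic textbook losses, not the training code of any specific model.

```python
import math


def next_token_nll(logits, target_index):
    """Autoregressive objective: negative log-likelihood of the true next
    token under a softmax over the vocabulary."""
    peak = max(logits)  # subtract the max for numerical stability
    log_normalizer = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_normalizer - logits[target_index]


def embedding_prediction_loss(predicted, target):
    """Embedding-based objective: squared distance between feature vectors."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target))


# Hypothetical two-word vocabulary: the model strongly prefers "mug",
# while the reference text says "cup".
VOCAB = ["cup", "mug"]
logits = [0.0, 5.0]
token_loss = next_token_nll(logits, VOCAB.index("cup"))

# Hypothetical embedding table in which both words map to the same
# abstract feature vector (same object, different wording).
EMBEDDINGS = {"cup": [1.0, 0.0], "mug": [1.0, 0.0]}
feature_loss = embedding_prediction_loss(EMBEDDINGS["mug"], EMBEDDINGS["cup"])
```

Under these assumptions the token-level loss is large, because the exact word is wrong, while the embedding-level loss is zero, because the predicted features match. That is the sense in which an embedding objective can ignore precise wording.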

Real World Case Studies: How World Models Are Already Changing Practice

At this point, a natural question is whether any of this matters outside benchmarks and whiteboard diagrams. Work on world models is not confined to theory or abstract benchmarks, and several organizations have shared results that illuminate practical impacts. In robotic manipulation, Google DeepMind and Google Robotics have explored how predictive models can improve sample efficiency and robustness. For instance, Dreamer variants have been applied to learning control policies from pixel observations in simulated and real-world robotic environments, including tasks such as cartpole balancing and quadruped locomotion. By learning a compact latent dynamics model, these systems can plan actions using imagined trajectories, which reduces the number of real interactions required. The result is faster training and fewer collisions or failures during exploration, which is critical when working with expensive or fragile hardware. Engineers report that such approaches make it more feasible to iterate on robot skills without labor-intensive manual scripting.

In autonomous driving, companies like Wayve in the United Kingdom advocate for end-to-end learned models that incorporate predictive understanding of traffic scenes. Wayve’s research emphasizes world models that forecast the motion of surrounding vehicles and pedestrians, conditioned on the ego vehicle’s possible actions. Using datasets such as the nuScenes benchmark and their own capture fleets, they train models to roll out future trajectories and evaluate candidate plans in a differentiable manner. This allows the driving policy to reason about multi-agent interactions, such as how other cars might respond to a lane change or a merge. Case studies reported in academic papers show improved performance on complex urban scenarios and better handling of long-tail events in simulation. One thing that becomes clear in practice is that modeling the joint behavior of multiple agents benefits enormously from learned world models that capture subtle patterns in human driving.

A third example comes from video game AI, where world models support agents that learn to play complex titles with limited data and better generalization. DeepMind’s MuZero has been widely discussed for its ability to achieve strong performance in chess, shogi, and Atari games without being given the explicit rules of the game. Instead, MuZero learns a model that predicts reward, value, and policy information from latent states, and combines this with a Monte Carlo tree search planner. In Atari domains, MuZero uses frames as input, learns an internal representation, and plans over that representation rather than over raw pixels. The system achieved state-of-the-art results on many games with significantly fewer environment interactions than model-free baselines. This case illustrates how learned models can replace handcrafted simulators, opening the door to agents that can adapt to new games, levels, or mechanics without human-designed rule sets.

Economic, Operational, And Governance Implications Of World Models

If you lead a business unit or product line, the most important question is often not “can this be done” but “why should we do it now”. The rise of world models intersects with broader economic trends in AI, especially concerns around cost, scalability, and return on investment. McKinsey estimates that generative AI could add between 2.6 and 4.4 trillion dollars in annual value across industries by 2030, but organizations are already facing diminishing returns from simple deployment of chatbots and text summarization tools. World models promise capabilities that go beyond content generation, for example optimizing logistics, controlling manufacturing processes, or operating fleets of robots. These tasks tie directly to physical assets and supply chains, where performance improvements translate into real cost savings and new revenue. If architectures like those championed by AMI Labs can deliver more sample-efficient learning and safer long-horizon planning, they could shift AI investment toward embodied and operational use cases.

From an operational standpoint, adopting world model based systems raises new requirements and challenges for organizations. Data collection shifts from static corpora of documents toward continuous streams of sensor data, video, and interaction logs, which require robust infrastructure for storage, annotation, and privacy management. Training pipelines must integrate reinforcement learning, simulation tools, and potentially on-device learning in edge environments such as factories or vehicles. Evaluation practices also need to evolve, since traditional accuracy metrics on held-out datasets are not sufficient to capture long-term safety or reliability in closed-loop control. In my experience, enterprises often underestimate the organizational complexity of coordinating software engineers, controls experts, domain specialists, and safety teams around such systems. The promise of autonomous agents interacting with the real world comes with a corresponding need for detailed monitoring, fail-safes, and incident response plans.

Governance and regulation add another layer of complexity that intersects in interesting ways with world models. The European Union’s AI Act, approved in 2024, defines risk-based categories for AI systems, with high-risk classifications covering applications such as autonomous vehicles, industrial robots, and critical infrastructure management. These are exactly the domains where world models and embodied AI agents are likely to play a central role. At the same time, governance frameworks like the NIST AI Risk Management Framework in the United States emphasize transparency, robustness, and controllability. Proponents of world models argue that explicit internal models can improve interpretability and control, since planners can expose trajectories and predicted outcomes. Critics, including some AI safety researchers, counter that more capable world models could increase the autonomy and potential impact of AI systems in ways that are harder to oversee. It is likely that regulators and auditors will pay very close attention to how organizations design, validate, and monitor such systems over the next decade.

Common Misconceptions And Hidden Challenges In Building World Models

At a glance, it is easy to assume that world models will simply fix what LLMs get wrong. Many popular articles present world models as a straightforward fix for the limitations of large language models, but experts know that new architectures rarely remove complexity. One misconception is that adding a world model automatically grants common sense and robustness, as if predictive training alone could solve all reasoning challenges. In practice, learned models are only as good as their training data and objectives, and they can still fail badly on rare events or distribution shifts. Another misconception is that world models require less compute because they are more efficient in principle. Training high-capacity predictive models from raw high-dimensional data such as video can be extremely compute intensive, especially when coupled with online reinforcement learning loops. As of today, only a handful of research labs and large companies can sustain the necessary experiments at scale.

Three expert-level gaps often go under-discussed in nontechnical coverage. One gap concerns evaluation metrics for world models themselves, separate from downstream task performance. Researchers have proposed measures such as prediction error, representation disentanglement, or planning value estimates, but there is no consensus on standardized benchmarks for general world modeling. A second gap lies in data curation and environment design, since learning useful models requires exposure to rich, varied, and challenging scenarios rather than narrow training regimes. For instance, training a driving world model only on clear daytime highways may lead to catastrophic failures at night in dense urban traffic. A third gap involves integration with existing operational technology, such as programmable logic controllers (PLCs) in factories or certified avionics in aircraft, where safety constraints limit how much of the control stack can be learned. These gaps matter because they determine whether world models remain research curiosities or become reliable components in critical systems.
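One of the simplest model-level measures mentioned above, prediction error, can be sketched as an open-loop rollout: start from a known state, feed the model its own predictions, and record the error at each horizon step. The function name `rollout_errors` and the toy dynamics are assumptions for illustration, not a standard benchmark.

```python
def rollout_errors(model_step, true_states, actions):
    """Open-loop rollout: start at the first true state, apply the model
    repeatedly to its own output, and return the absolute error per step."""
    predicted = true_states[0]
    errors = []
    for action, actual in zip(actions, true_states[1:]):
        predicted = model_step(predicted, action)
        errors.append(abs(predicted - actual))
    return errors


def biased_model(state, action):
    """Hypothetical learned model that underestimates each step by 10%."""
    return state + 0.9 * action


# Ground truth: the state increases by exactly one unit per action.
true_states = [0.0, 1.0, 2.0, 3.0]
actions = [1.0, 1.0, 1.0]
errors = rollout_errors(biased_model, true_states, actions)
```

In this toy case the error grows with each step because the model consumes its own slightly wrong predictions. A small one-step error can therefore hide a model that is unreliable over the long horizons that planning needs, which is one reason multi-step evaluation matters.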

There are also issues of organizational readiness that rarely receive attention in technical blogs but dominate real deployments. Many enterprises lack teams with combined expertise in deep learning, control theory, and safety engineering, which are all needed for effective world model projects. Tooling ecosystems for debugging and visualizing learned dynamics are less mature than those for standard supervised learning, making it harder for practitioners to diagnose failures. Vendors often oversell early prototypes, creating expectations among executives that an apparently impressive demo will scale easily to complex multi-site operations. In my experience, success with world models requires a staged approach that starts with narrow, well-instrumented tasks, followed by gradual expansion and rigorous testing. Without that discipline, organizations can end up with brittle systems that work well in a single lab setting but fail unpredictably in production.

Future Outlook: How AMI Labs And World Models Could Redefine AI

If you zoom out, AMI Labs sits at the intersection of several converging trends in AI research and industry. On the scientific side, work on self-supervised representation learning, causal discovery, and model-based reinforcement learning continues to mature, providing ingredients for world model architectures. On the hardware side, GPU and accelerator development by companies like NVIDIA, AMD, and emerging AI chip startups aims to support more flexible, memory-rich workloads suited to simulation and multi-module systems. On the industry side, early deployments of robotics, autonomous logistics, and agentic software systems are stressing the limits of purely reactive or pattern-matching models. If AMI Labs and similar efforts can demonstrate world models that scale across tasks and domains, they could shift the center of gravity in AI research away from static text pretraining toward dynamic interaction and continual learning.

Yann LeCun’s public statements suggest a long-term, research-heavy roadmap rather than a quick product pivot. He has argued that human-level autonomous intelligence could require several major conceptual advances beyond current systems, and he often criticizes both ungrounded optimism and extreme pessimism about near-term artificial general intelligence. He contends that the right architecture, built on self-supervised world modeling and modular planning, can deliver safe and useful agents without resorting to brittle prompt engineering or massive human supervision. At the same time, he rejects doomer narratives that frame advanced AI as an existential threat by default, pointing instead to historical patterns of technological adaptation. Whether one agrees with his stance or prefers more cautious safety frameworks, AMI Labs will likely become a focal point in debates about how much structure, grounding, and control our most capable AI systems should have.

For students and practitioners, the rise of world models signals a need to broaden skill sets beyond prompt engineering and fine-tuning language models. Knowledge of control theory, dynamical systems, simulation, and robotics will become more valuable, alongside expertise in self-supervised learning and representation learning. Organizations on this trajectory can start by experimenting with model-based reinforcement learning in simulation, evaluating tools from open source ecosystems such as PyTorch and JAX. They can also track research output from entities such as Meta AI, AMI Labs, DeepMind, and academic groups at institutions like MIT and ETH Zurich that work on world models and embodied AI. One thing that becomes clear in practice is that the next decade of AI will be shaped not only by ever larger models, but also by smarter structures that let machines build and use their own internal models of the world.

FAQ: Yann LeCun, AMI Labs, And AI World Models

Who is Yann LeCun and why is he important in AI?

Yann LeCun is a computer scientist known for pioneering convolutional neural networks and modern deep learning. He co-developed architectures that now power image recognition, speech recognition, and many vision systems deployed worldwide. In 2018 he received the Turing Award with Geoffrey Hinton and Yoshua Bengio for this foundational work. He has led AI research at Meta, where he promoted self-supervised learning as a key paradigm. His new venture, AMI Labs, focuses on building autonomous machine intelligence based on world models, which could shape the next era of AI. For a concise overview of his current stance and research themes, you can also review this summary of Yann LeCun’s bold AI rethink.

What is AMI Labs and what is its mission?

AMI Labs, or Autonomous Machine Intelligence Labs, is a research-focused company founded by Yann LeCun. Its mission is to design AI systems that can understand and act in the world using internal world models rather than only processing text. AMI Labs aims to build agents that learn from perception and interaction, then use predictive models for planning and control. It positions itself alongside but distinct from labs that primarily scale large language models for chat and content generation. In practical terms, AMI Labs wants to produce architectures and algorithms that move AI closer to robust, grounded autonomy.

What does Yann LeCun mean by a “world model”?

Yann LeCun uses the term “world model” to describe an internal representation that captures how the environment behaves under different actions. In his view, a world model lets an AI system predict future states, understand object persistence, and reason about cause and effect. This is different from an LLM that primarily captures statistical regularities in text sequences. LeCun argues that such models should be learned through self-supervised prediction from raw sensory streams like video. He sees world models as the core of autonomous machine intelligence, enabling agents to plan and learn with far fewer explicit labels. If you want a more intuitive mental picture, resources on AI thought processes can make these ideas easier to connect to everyday cognition.

How are AI world models different from large language models?

AI world models focus on learning latent states and dynamics that describe how an environment changes over time, given actions and events. Large language models, by contrast, are trained to predict the next token in sequences of text or multimodal tokens. World models are usually grounded in sensory data such as images, sensor readings, or simulator states, which provide a more direct link to physical or digital environments. LLMs primarily learn from language, which means their knowledge of the world is indirect and sometimes inconsistent. In practice, future systems may combine both, but the core difference is that world models are about simulating reality, while LLMs are about continuing text.
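To make the contrast concrete, here is a minimal sketch of the two interfaces. Everything in it is an illustrative assumption: the dynamics in `world_model_step` are hand-written toy physics rather than a learned model, and `fit_bigram` is a deliberately tiny stand-in for next-token prediction, not how an LLM is actually built.

```python
from collections import Counter, defaultdict


def world_model_step(state, action, dt=0.1):
    """Toy dynamics: state is (position, velocity), action is an acceleration."""
    position, velocity = state
    velocity = velocity + action * dt
    position = position + velocity * dt
    return (position, velocity)


def rollout(state, actions):
    """Simulate a sequence of actions and return every predicted state."""
    trajectory = [state]
    for action in actions:
        state = world_model_step(state, action)
        trajectory.append(state)
    return trajectory


def fit_bigram(tokens):
    """Count which token follows which: a miniature next-token predictor."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token(counts, prev):
    """Continue the text with the most frequent follower of `prev`."""
    return counts[prev].most_common(1)[0][0]


# The world model answers "what happens to the state if I act?"
trajectory = rollout((0.0, 0.0), [1.0, 1.0])

# The token predictor answers "what text usually comes next?"
counts = fit_bigram("the cat sat on the mat the cat".split())
print(next_token(counts, "the"))  # prints "cat"
```

The first function takes a state and an action and returns the next state, which is what a planner needs. The second only ever sees and returns tokens.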

What is the Joint Embedding Predictive Architecture (JEPA) that LeCun proposes?

The Joint Embedding Predictive Architecture, or JEPA, is an approach where models learn to predict high-level representations of future observations from current ones. Instead of generating raw pixels or tokens, a JEPA maps inputs into an embedding space and trains a predictor to match the embedding of a target observation. This allows the model to focus on abstract structure and ignore irrelevant details. LeCun argues that JEPA-style objectives are better suited to learning world models than autoregressive token prediction. They support self-supervised learning from video and other sensory streams, which is key to building agents that can understand and act in complex environments.
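The core idea, predicting in embedding space rather than input space, can be sketched in a few lines. This is a heavily simplified illustration, not LeCun's or Meta's implementation: the `encode` function is a hypothetical fixed encoder that simply discards a noisy feature, the data is synthetic, and the predictor is a single linear map. Real JEPAs learn the encoders together with the predictor and need extra machinery to prevent the embeddings from collapsing, none of which appears here.

```python
import random


def encode(observation):
    """Hypothetical fixed encoder: keep the informative feature, drop the noisy one."""
    signal, _noise = observation
    return signal


def make_pairs(n, seed=0):
    """Synthetic (current, target) observations; the second feature is pure noise."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        current = (x, rng.gauss(0.0, 1.0))
        target = (0.9 * x + 0.5, rng.gauss(0.0, 1.0))
        pairs.append((current, target))
    return pairs


def train_predictor(pairs, epochs=50, lr=0.1):
    """Fit z_target ~ w * z_context + b by gradient descent on 0.5 * error**2."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for current, target in pairs:
            z_context = encode(current)
            z_target = encode(target)
            error = (w * z_context + b) - z_target
            w -= lr * error * z_context
            b -= lr * error
    return w, b


def embedding_loss(pairs, w, b):
    """Mean squared error between predicted and actual target embeddings."""
    total = 0.0
    for current, target in pairs:
        total += ((w * encode(current) + b) - encode(target)) ** 2
    return total / len(pairs)


pairs = make_pairs(200)
w, b = train_predictor(pairs)
print(round(w, 3), round(b, 3), embedding_loss(pairs, w, b))
```

Because the loss is measured between embeddings, the predictor is never asked to reproduce the noise feature. A model trained to reconstruct the raw target observation would be penalized for failing to predict something that is unpredictable by construction.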

Are any companies besides AMI Labs working on AI world models?

Several major organizations are exploring world models or closely related concepts. DeepMind has developed MuZero and Dreamer-style algorithms that learn environment dynamics for planning and control. Google Research and Google DeepMind work on video prediction and robot world models in projects such as the RT series and other robot learning efforts. Wayve focuses on learned world models for end-to-end autonomous driving, modeling interactions among multiple agents. NVIDIA researchers study model-based reinforcement learning and simulation tools that support learned dynamics. Academic groups at institutions like MIT, ETH Zurich, and UC Berkeley also publish extensively on world models and embodied AI.

How close are world models to achieving human-level common sense?

Today’s world models are far from human-level common sense, although they represent meaningful progress on certain tasks. Systems like Dreamer, MuZero, and various video world models can handle specific environments, such as Atari games or simulated robots, with impressive efficiency. They usually struggle to generalize far beyond their training domains or reason about abstract concepts. Human common sense spans physics, social norms, language, and long-term planning in a unified way, which no existing model can replicate. Researchers like LeCun see current systems as early steps toward richer world modeling, not as finished solutions. Significant conceptual and engineering advances are still needed before machines can rival human common sense across everyday situations.

What skills should engineers learn if they want to work on world models?

Engineers interested in world models benefit from a mix of deep learning, reinforcement learning, and control theory skills. Knowledge of self-supervised learning, contrastive methods, and latent variable models is important, since world models rely on compact representations. Familiarity with simulation environments such as MuJoCo, Unity, or Isaac Gym helps in designing and testing agents. Understanding model-based reinforcement learning algorithms, such as Dreamer or MuZero, provides a concrete starting point. Practical coding experience with PyTorch or JAX and skills in large-scale training infrastructure are also valuable. Finally, exposure to robotics or real-time systems is useful for those who want to work on embodied AI.

How do world models relate to AI safety and alignment discussions?

World models intersect with AI safety in both positive and challenging ways. On the positive side, explicit models of the environment can give developers more visibility into how an AI system predicts and plans its actions. Some safety researchers argue that inspectable world models and planners could support better verification and oversight. On the challenging side, more capable world models may enable greater autonomy and impact, which heightens the importance of robust control and alignment mechanisms. Groups like Anthropic and OpenAI emphasize safety research focused on scalable oversight and interpretability, which would need to adapt to world model architectures. Debates continue about whether world models make alignment easier or harder overall, and thoughtful governance will be needed as these systems mature.

Will large language models become obsolete if world models succeed?

It is unlikely that large language models will become obsolete, even if world models gain prominence. LLMs are extremely effective for many tasks involving text, code, and communication, and they will likely remain central tools for knowledge work. Instead, many researchers expect hybrid systems where LLMs handle language and interface tasks, while world models handle grounded perception and planning. For example, an LLM might interpret a user’s instructions and translate them into goals for a planning module that uses a world model. The key shift would be from monolithic token predictors toward modular architectures that combine strengths from different components. In that scenario, expertise with both LLMs and world models would be valuable.
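A toy version of that division of labor might look like the sketch below. All names are hypothetical: `interpret_instruction` is a regular-expression stand-in for an LLM call, `world_model` is a hand-written model of a six-cell track, and `plan` is plain breadth-first search, chosen only because it is short and easy to check.

```python
import re
from collections import deque


def interpret_instruction(text):
    """Stand-in for an LLM: turn free text into a structured goal (hypothetical)."""
    match = re.search(r"cell (\d+)", text)
    if match is None:
        raise ValueError("no goal found in instruction")
    return {"target_cell": int(match.group(1))}


def world_model(cell, action, size=6):
    """Toy dynamics: predict the next cell on a 1-D track with walls at both ends."""
    return min(max(cell + action, 0), size - 1)


def plan(start, goal, actions=(-1, 1)):
    """Breadth-first search through states predicted by the world model."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        cell, path = queue.popleft()
        if cell == goal["target_cell"]:
            return path
        for action in actions:
            nxt = world_model(cell, action)
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [action]))
    return None  # goal is unreachable according to the model


goal = interpret_instruction("Please move the cart to cell 4")
print(plan(1, goal))  # prints [1, 1, 1]
```

The language component never touches the dynamics, and the planner never sees raw text. The structured goal is the only interface between them, which is the kind of modular boundary the hybrid view assumes.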

How soon does Yann LeCun think autonomous machine intelligence will arrive?

Yann LeCun tends to describe timelines for autonomous machine intelligence in terms of decades rather than a few years. He has suggested in interviews that reaching human-level competence across a broad range of tasks will require several major research breakthroughs. He often stresses that current systems, including LLMs and world models, are still missing key capabilities such as robust common sense and persistent memory. At the same time, he believes progress can be steady if the community pursues the right architectural ideas, such as self-supervised world modeling and modular planning. He is skeptical of claims that scaling existing architectures alone will quickly lead to artificial general intelligence. For a contrasting lens on how unpredictable AI progress can be, reflections like Sutskever’s notes on AI evolution provide useful context on forecast uncertainty.

How can organizations start experimenting with world models today?

Organizations can start by exploring mannequin based mostly reinforcement studying in simulation environments that mirror their domains. For instance, industrial companies would possibly create digital twins of manufacturing traces utilizing instruments like Siemens Tecnomatix or NVIDIA Omniverse, then combine discovered dynamics fashions for optimization. Groups can experiment with open supply implementations of algorithms resembling Dreamer or PlaNet in PyTorch or JAX. It’s useful to begin with slim, effectively outlined duties and clear security boundaries earlier than increasing to extra advanced purposes. Collaborations with tutorial labs or specialised distributors can speed up studying and scale back threat. Over time, classes from these pilots can inform broader methods for integrating world fashions into operations.

Conclusion

Yann LeCun’s AMI Labs highlights a growing conviction among researchers and practitioners that real progress in AI will require more than ever larger language models. By focusing on world models, self-supervised learning, and predictive architectures like JEPAs, AMI Labs aims to give machines internal simulations of their environments. That capability underpins planning, common sense about objects and agents, and robust control of physical and digital systems. When combined with careful attention to data, evaluation, and governance, world models can help move AI from pattern-matching chatbots toward trustworthy autonomous agents.

For readers designing careers, products, or research agendas, the key takeaway is that understanding world models is becoming as important as knowing how to prompt an LLM. Learning about model-based reinforcement learning, representation learning, and control can position you for emerging roles in robotics, autonomous systems, and complex decision support. Organizations that invest thoughtfully in these technologies, starting with safe simulations and clear evaluation plans, will be better prepared as world-model-based architectures mature. The rise of AMI Labs signals that the next frontier of AI is not only about what models say, but about how well they can predict and act within the world we share.

References

- McKinsey Global Institute, “The economic potential of generative AI: The next productivity frontier”, 2023.

- Yann LeCun, “A Path Towards Autonomous Machine Intelligence”, 2022, arXiv:2208.10604.

- Meta AI Research, “Joint Embedding Predictive Architectures”, project materials and blog posts.

- David Ha and Jürgen Schmidhuber, “World Models”, 2018, arXiv:1803.10122.

- Danijar Hafner et al., “Dreamer: Reinforcement Learning with World Models”, International Conference on Learning Representations, 2020.

- Julian Schrittwieser et al., “Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model”, Nature, 2020, MuZero.

- Stuart Russell and Peter Norvig, “Artificial Intelligence: A Modern Approach”, 4th edition, Pearson, 2020.

- Wayve, research blog and publications on end-to-end autonomous driving and world models.

- Stanford Institute for Human-Centered Artificial Intelligence, “AI Index Report 2024”.

- European Commission, “Artificial Intelligence Act: 2024 compromise text and summaries”.

- NIST, “AI Risk Management Framework (AI RMF 1.0)”, 2023.

- NVIDIA technical blogs on model-based reinforcement learning and simulation platforms such as Isaac Gym and Omniverse.