Most developers treat prompting as an afterthought: write something reasonable, observe the output, and iterate if needed. That approach works until reliability becomes critical. As LLMs move into production systems, the difference between a prompt that usually works and one that works consistently becomes an engineering concern. In response, the research community has formalized prompting into a set of well-defined techniques, each designed to address specific failure modes in structure, reasoning, or style. These techniques operate entirely at the prompt layer, requiring no fine-tuning, model changes, or infrastructure upgrades.

This article focuses on five such techniques: role-specific prompting, negative prompting, JSON prompting, Attentive Reasoning Queries (ARQ), and verbalized sampling. Rather than covering familiar baselines like zero-shot or basic chain-of-thought, the emphasis here is on what changes when these techniques are applied. Each is demonstrated through a side-by-side comparison on the same task, highlighting the impact on output quality and explaining the underlying mechanism.

Here, we set up a minimal environment for interacting with the OpenAI API. We securely load the API key at runtime using getpass, initialize the client, and define a lightweight chat wrapper that sends a system prompt and a user prompt to the model (gpt-4o-mini). This keeps the experimentation loop clean and reusable, so the focus stays on prompt variations.

The helper functions (section and divider) only format the output, making it easier to compare baseline and improved prompts side by side. If you don’t already have an API key, you can create one from the official dashboard: https://platform.openai.com/api-keys

import json

from openai import OpenAI

import os

from getpass import getpass

os.environ['OPENAI_API_KEY'] = getpass('Enter OpenAI API Key: ')

shopper = OpenAI()

MODEL = "gpt-4o-mini"

def chat(system: str, consumer: str, **kwargs) -> str:

"""Minimal wrapper across the chat completions endpoint."""

response = shopper.chat.completions.create(

mannequin=MODEL,

messages=[

{"role": "system", "content": system},

{"role": "user", "content": user},

],

**kwargs,

)

return response.decisions[0].message.content material

def part(title: str) -> None:

print()

print("=" * 60)

print(f" {title}")

print("=" * 60)

def divider(label: str) -> None:

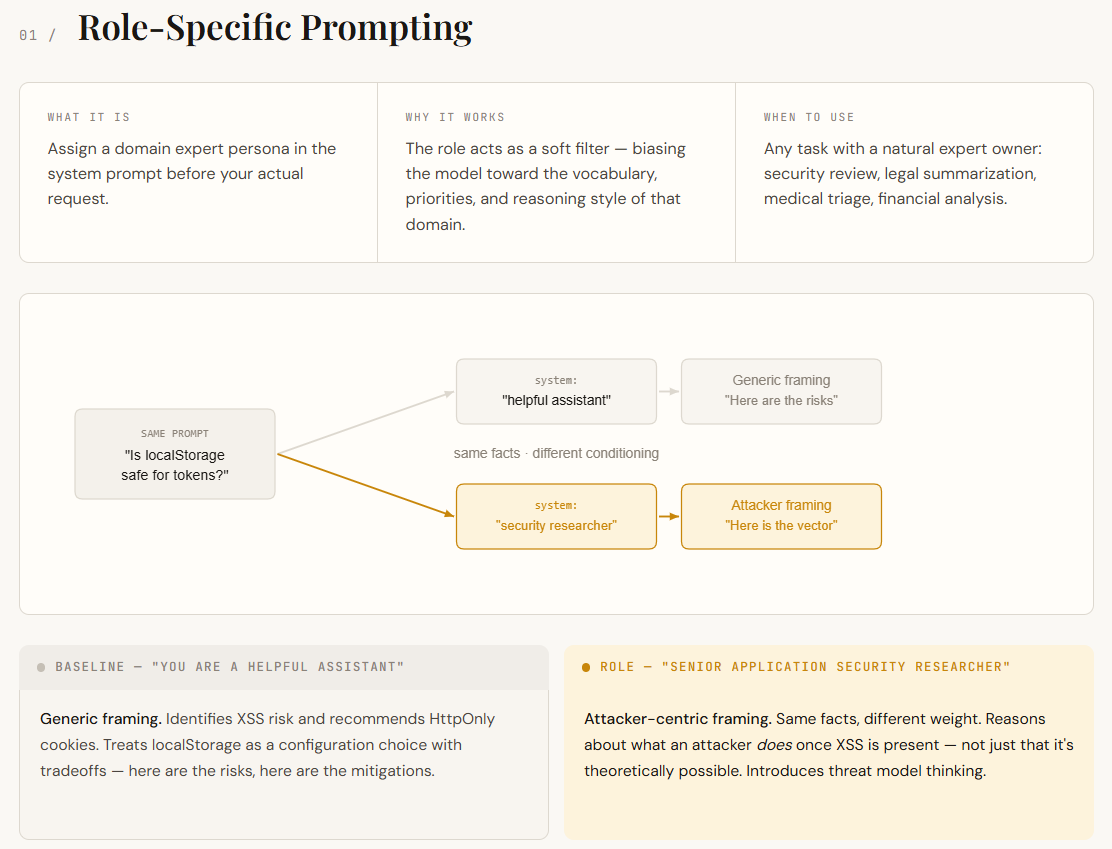

print(f"n── {label} {'─' * (54 - len(label))}")Language fashions are skilled on a large mixture of domains—safety, advertising and marketing, authorized, engineering, and extra. Whenever you don’t specify a task, the mannequin pulls from all of them, which ends up in solutions which can be usually right however considerably generic. Position-specific prompting fixes this by assigning a persona within the system immediate (e.g., “You’re a senior utility safety researcher”). This acts like a filter, pushing the mannequin to reply utilizing the language, priorities, and reasoning type of that area.

In this example, both responses identify the XSS risk and recommend HttpOnly cookies, so the underlying facts are identical. The difference is in how the model frames the problem. The baseline treats localStorage as a configuration choice with tradeoffs. The role-specific response treats it as an attack surface: it reasons about what an attacker can do once XSS is present, not just that XSS is theoretically possible. That shift in framing, from “here are the risks” to “here is what an attacker does with these risks,” is the conditioning effect in action. No new information was provided. The prompt simply changed which part of the model’s knowledge got weighted.
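The persona is the only variable in this comparison, so it can help to keep it out of the call site entirely. That way the user turn is guaranteed to be identical across the baseline and expert runs. The sketch below assumes a hypothetical `build_messages` helper and `PERSONAS` table, neither of which comes from the original notebook. It only builds a `messages` list in the same shape `chat()` sends, so it runs without an API key.

```python
from typing import Dict, List, Optional

# Hypothetical persona table: entries differ only in the system prompt.
PERSONAS: Dict[str, str] = {
    "default": "You are a helpful assistant.",
    "security": (
        "You are a senior application security researcher specializing in "
        "web authentication vulnerabilities. You think in terms of attack "
        "surface, threat models, and OWASP guidelines."
    ),
}


def build_messages(question: str, persona: Optional[str] = None) -> List[Dict[str, str]]:
    """Build a chat-completions messages list; unknown personas fall back to 'default'."""
    system = PERSONAS.get(persona or "default", PERSONAS["default"])
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


question = "Our web app stores session tokens in localStorage. Is this a problem?"
baseline_msgs = build_messages(question)
expert_msgs = build_messages(question, "security")

# The user turn is identical; only the system turn changes.
print(baseline_msgs[1] == expert_msgs[1])  # True
```

Keeping personas in one table also makes it cheap to A/B several roles against the same question.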

part("TECHNIQUE 1 -- Position-Particular Prompting")

QUESTION = "Our net app shops session tokens in localStorage. Is that this an issue?"

baseline_1 = chat(

system="You're a useful assistant.",

consumer=QUESTION,

)

role_specific = chat(

system=(

"You're a senior utility safety researcher specializing in "

"net authentication vulnerabilities. You suppose by way of assault "

"floor, risk fashions, and OWASP tips."

),

consumer=QUESTION,

)

divider("Baseline")

print(baseline_1)

divider("Position-specific (safety researcher)")

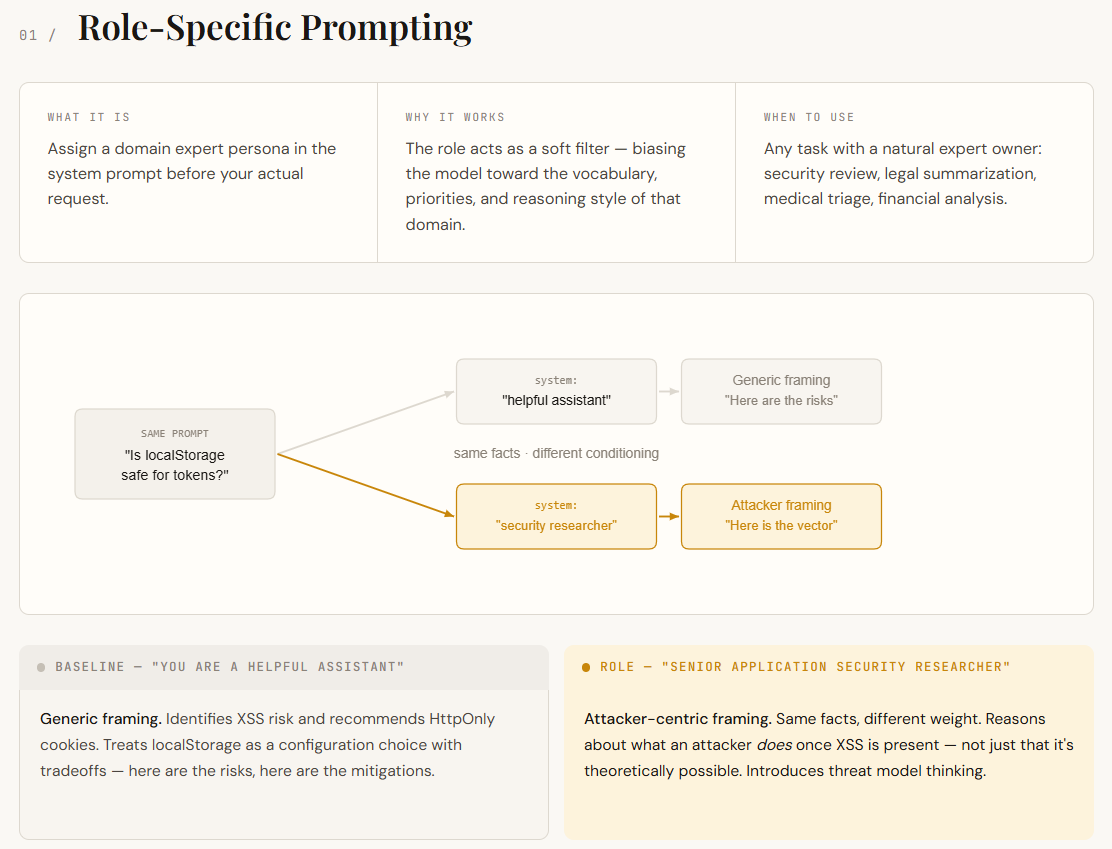

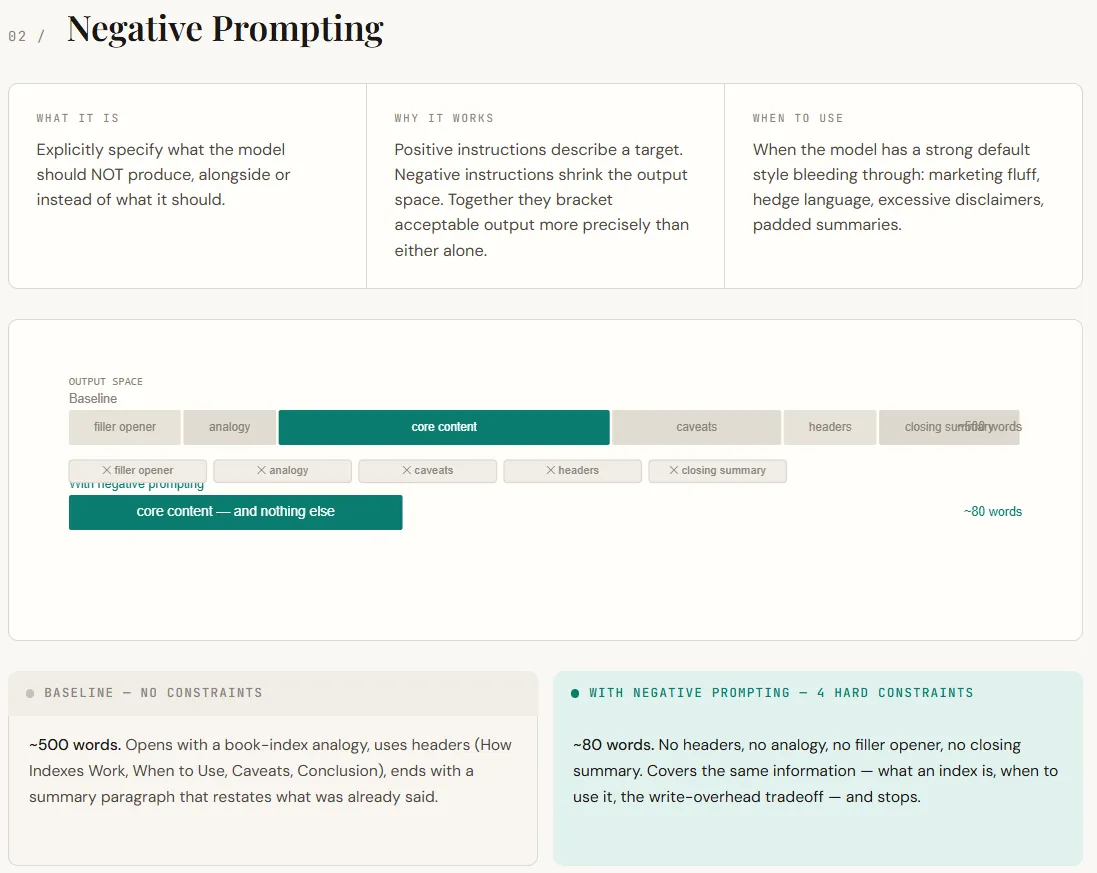

print(role_specific)Destructive prompting focuses on telling the mannequin what to not do. By default, LLMs comply with patterns discovered throughout coaching and RLHF—they add pleasant openings, analogies, hedging (“it relies upon”), and shutting summaries. Whereas this makes responses really feel useful, it usually provides pointless noise in technical contexts. Destructive prompting works by eradicating these defaults. As a substitute of simply describing the specified output, you additionally prohibit undesirable behaviors, which narrows the mannequin’s output area and results in extra exact responses.

In the output, the difference is immediately visible. The baseline response stretches into a long, structured explanation with analogies, headers, and a redundant conclusion. The negatively prompted version delivers the same core information in a much shorter form: direct, concise, and without filler. Nothing essential is lost; the prompt simply removes the model’s tendency to over-explain and pad the response.
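Prohibitions narrow the output space, but they do not guarantee compliance. In production, a cheap post-check is therefore a useful companion to the prompt. The sketch below is minimal and assumes hypothetical helpers `build_negative_prompt` and `find_violations` plus an illustrative `BANNED_PHRASES` tuple, none of which are from the original notebook. The first helper assembles the "Do NOT" rules; the second flags any banned phrase that slipped through anyway.

```python
import re
from typing import List, Sequence

# Hypothetical, illustrative phrase list; extend it with whatever your rules ban.
BANNED_PHRASES: Sequence[str] = ("great question", "in conclusion")


def build_negative_prompt(role: str, prohibitions: Sequence[str]) -> str:
    """Compose a system prompt: a role line, then one 'Do NOT' rule per prohibition."""
    rules = "\n".join(f"- Do NOT {p}" for p in prohibitions)
    return f"{role}\nRules:\n{rules}\n"


def find_violations(text: str, banned: Sequence[str] = BANNED_PHRASES) -> List[str]:
    """Return each banned phrase still present in the output (case-insensitive, whole words)."""
    lowered = text.lower()
    return [p for p in banned if re.search(r"\b" + re.escape(p) + r"\b", lowered)]


system_prompt = build_negative_prompt(
    "You are a senior backend engineer writing internal documentation.",
    ["use filler phrases like 'great question'.", "pad the response."],
)
print(system_prompt)
print(find_violations("Great question! An index is a sorted lookup structure."))
# ['great question']
```

A non-empty result could trigger a retry or a simple rewrite pass, rather than shipping padded text.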

part("TECHNIQUE 2 -- Destructive Prompting")

TOPIC = "Clarify what a database index is and if you'd use one."

baseline_2 = chat(

system="You're a useful assistant.",

consumer=TOPIC,

)

unfavorable = chat(

system=(

"You're a senior backend engineer writing inner documentation.n"

"Guidelines:n"

"- Do NOT use advertising and marketing language or filler phrases like 'nice query' or 'actually'.n"

"- Do NOT embody caveats like 'it relies upon' with out instantly resolving them.n"

"- Do NOT use analogies until they're essential. In case you use one, hold it to at least one sentence.n"

"- Do NOT pad the response -- when you've made the purpose, cease.n"

),

consumer=TOPIC,

)

divider("Baseline")

print(baseline_2)

divider("With unfavorable prompting")

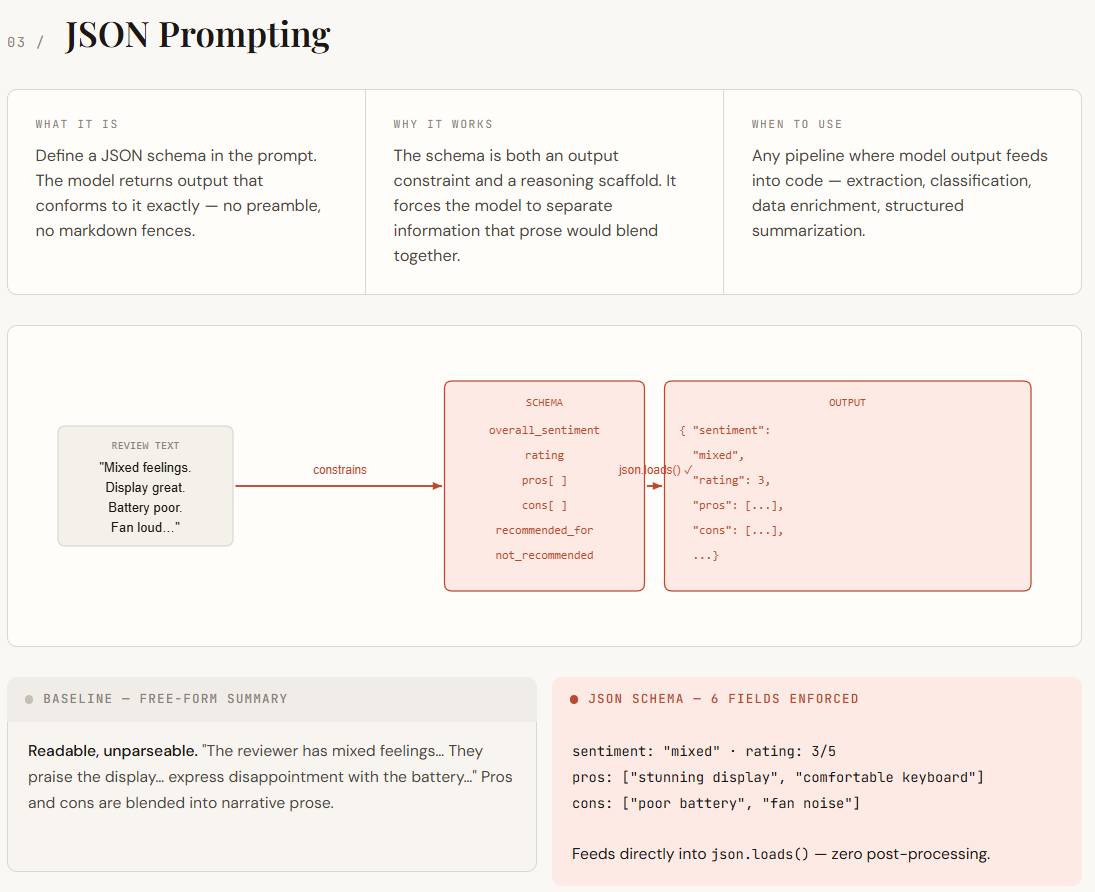

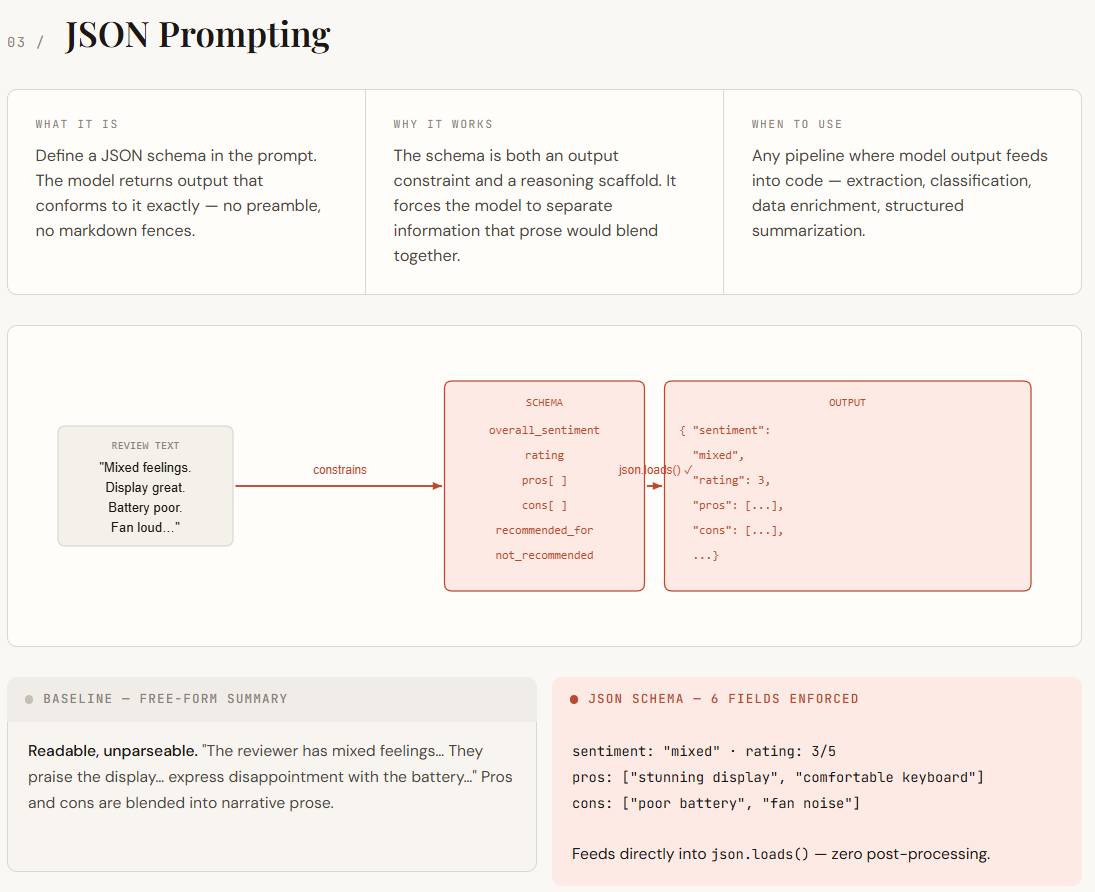

print(unfavorable)JSON prompting turns into essential when LLM outputs must be consumed by code reasonably than simply learn by people. Free-form responses are inconsistent—construction varies, key particulars are embedded in paragraphs, and small wording modifications break parsing logic. By defining a JSON schema within the immediate, you flip construction into a tough constraint. This not solely standardizes the output format but in addition forces the mannequin to prepare its reasoning into clearly outlined fields like professionals, cons, sentiment, and score.

In the output, the difference is clear. The baseline response is readable but unstructured: pros, cons, and sentiment are mixed into narrative text, which makes it difficult to parse. The JSON-prompted version, by contrast, returns clean, well-defined fields that can be loaded and used in code directly, without any post-processing. Information that was previously implied is now explicit and separated, making the output easy to store, query, and compare at scale.
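A prompt-level schema is a strong request, not a guarantee. A model can still wrap the JSON in fences, drop a key, or return a rating as a string. The Chat Completions API also offers a JSON mode, `response_format={"type": "json_object"}`, which guarantees syntactically valid JSON but does not enforce your schema. For that reason, a validation step before `json.loads` results reach downstream code is worth having. The sketch below is minimal. It assumes a hypothetical `parse_review_json` helper and an `EXPECTED_TYPES` map that mirrors the keys this section's example reads from `parsed`. The sample input is hand-written, not real model output.

```python
import json
from typing import Any, Dict

FENCE = "`" * 3  # a markdown code fence, built here to avoid a literal fence in the source

# Assumed field types for the review schema (same keys the example reads from `parsed`).
EXPECTED_TYPES: Dict[str, type] = {
    "overall_sentiment": str,
    "rating": int,
    "pros": list,
    "cons": list,
    "recommended_for": str,
    "not_recommended_for": str,
}


def parse_review_json(raw: str) -> Dict[str, Any]:
    """Parse the model's reply and validate it against EXPECTED_TYPES.

    Tolerates a surrounding markdown fence in case the model ignored the
    'no markdown fences' rule. Raises ValueError (json.JSONDecodeError is a
    subclass) on malformed JSON, missing keys, wrong types, or a bad rating.
    """
    text = raw.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line, then everything from the closing fence on.
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rsplit(FENCE, 1)[0]
    data = json.loads(text)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected in EXPECTED_TYPES.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], expected):
            raise ValueError(f"{key}: expected {expected.__name__}, got {type(data[key]).__name__}")
    if not 1 <= data["rating"] <= 5:
        raise ValueError(f"rating out of range: {data['rating']}")
    return data


# Hand-written sample for illustration, not real model output.
sample = (
    '{"overall_sentiment": "mixed", "rating": 3, "pros": ["display", "keyboard"], '
    '"cons": ["battery life", "fan noise"], "recommended_for": "light work", '
    '"not_recommended_for": "heavy software"}'
)
print(parse_review_json(sample)["rating"])  # 3
print(parse_review_json(FENCE + "json\n" + sample + "\n" + FENCE)["pros"])  # ['display', 'keyboard']
```

Raising on the first problem keeps the helper simple. A retry loop that feeds the error message back to the model is a natural extension.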

part("TECHNIQUE 3 -- JSON Prompting")

REVIEW = """

Actually blended emotions about this laptop computer. The show is gorgeous -- simply one of the best I've

seen at this value vary -- and the keyboard is surprisingly comfy for lengthy periods.

Battery life, then again, barely will get me by way of a 6-hour workday, which is

disappointing. Fan noise beneath load can also be fairly aggressive. For mild work it is nice,

however I would not suggest it for anybody who must run heavy software program.

"""

SCHEMA = """

unfavorable

"""

baseline_3 = chat(

system="You're a useful assistant.",

consumer=f"Summarize this product evaluation:nn{REVIEW}",

)

json_output = chat(

system=(

"You're a product evaluation parser. Extract structured info from critiques.n"

"You MUST return solely a sound JSON object. No preamble, no rationalization, no markdown fences.n"

f"The JSON should match this schema precisely:n{SCHEMA}"

),

consumer=f"Parse this evaluation:nn{REVIEW}",

)

divider("Baseline (free-form)")

print(baseline_3)

divider("JSON prompting (uncooked output)")

print(json_output)

divider("Parsed & usable in code")

parsed = json.masses(json_output)

print(f"Sentiment : {parsed['overall_sentiment']}")

print(f"Score : {parsed['rating']}/5")

print(f"Professionals : {', '.be a part of(parsed['pros'])}")

print(f"Cons : {', '.be a part of(parsed['cons'])}")

print(f"Beneficial for : {parsed['recommended_for']}")

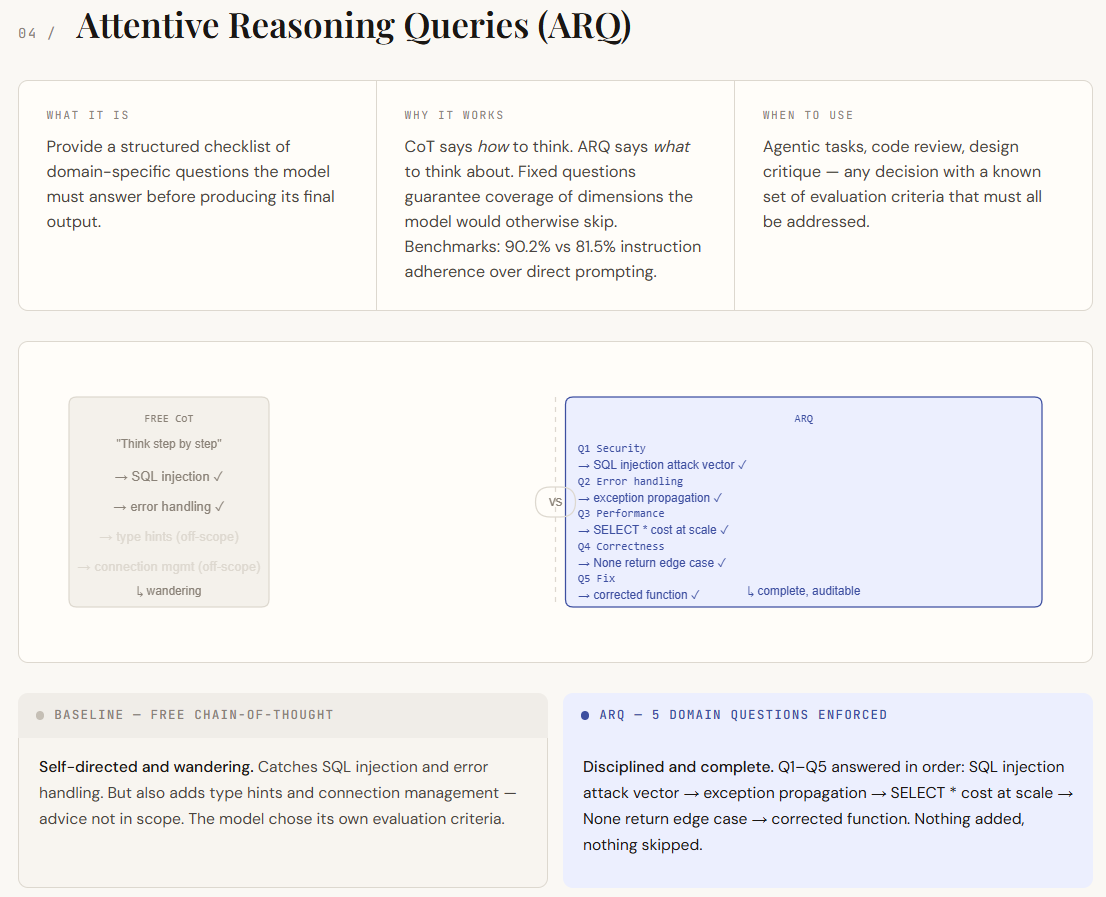

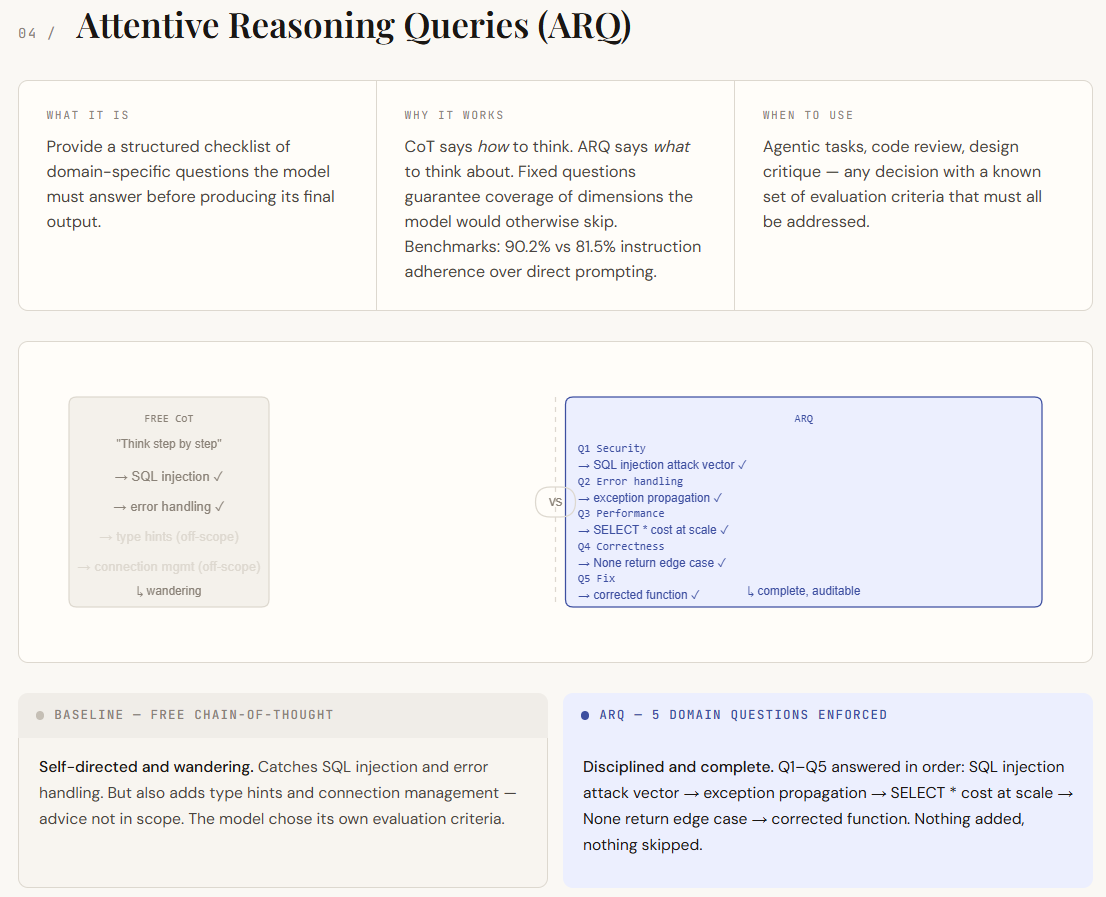

print(f"Keep away from if : {parsed['not_recommended_for']}")Attentive Reasoning Queries (ARQ) construct on chain-of-thought prompting however take away its greatest weak spot—unstructured reasoning. In normal CoT, the mannequin decides what to deal with, which might result in gaps or irrelevant particulars. ARQ replaces this with a set set of domain-specific questions that the mannequin should reply so as. This ensures that every one vital points are coated, shifting management from the mannequin to the immediate designer. As a substitute of simply guiding how the mannequin thinks, ARQ defines what it should take into consideration.

In the output, the difference shows up as discipline and coverage. The baseline CoT response identifies the key issues but drifts into less relevant areas and misses deeper analysis in places. The ARQ version, by contrast, systematically addresses each required point: it isolates the vulnerability, handles edge cases, and evaluates performance implications. Each question acts as a checkpoint, making the response more structured, complete, and easier to audit.
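ARQ numbers its questions, so coverage can be checked mechanically: if a `Qi` label never appears in the reply, that checkpoint was skipped. The sketch below is minimal and assumes the model echoes the `Q1`...`Qn` labels. It uses hypothetical helpers `build_arq_block` and `missing_answers` and a `CHECKLIST` of (tag, question) pairs that paraphrase this section's ARQ_QUESTIONS; none of these come from the original notebook. Note that matching labels only confirms a question was addressed, not that the answer is correct.

```python
import re
from typing import List, Tuple

# Hypothetical checklist representation; questions paraphrase this section's ARQ_QUESTIONS.
CHECKLIST: List[Tuple[str, str]] = [
    ("Security", "Does this code have any injection vulnerabilities?"),
    ("Error handling", "What happens if db.execute() throws an exception?"),
    ("Performance", "Does this query retrieve more data than necessary?"),
    ("Correctness", "Are there edge cases in the return logic that could cause a silent bug?"),
    ("Fix", "Write a corrected version of the function that addresses all issues above."),
]


def build_arq_block(checklist: List[Tuple[str, str]]) -> str:
    """Render the checklist as numbered Q1..Qn prompts the model must answer in order."""
    lines = ["Before giving your final review, answer each of the following questions in order:"]
    for i, (tag, question) in enumerate(checklist, start=1):
        lines.append(f"Q{i} [{tag}]: {question}")
    return "\n".join(lines)


def missing_answers(response: str, n_questions: int) -> List[int]:
    """List question numbers whose 'Qi' label never appears in the model's response."""
    return [i for i in range(1, n_questions + 1) if not re.search(rf"\bQ{i}\b", response)]


arq_prompt = build_arq_block(CHECKLIST)
print(arq_prompt)

# Hand-written partial reply for illustration: Q4 and Q5 were skipped.
reply = "Q1: Yes, SQL injection via user_id.\nQ2: The exception propagates.\nQ3: SELECT * fetches every column."
print(missing_answers(reply, len(CHECKLIST)))  # [4, 5]
```

The word boundaries in the pattern keep `Q1` from matching inside `Q10` if the checklist grows past nine questions.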

part("TECHNIQUE 4 -- Attentive Reasoning Queries (ARQ)")

CODE_TO_REVIEW = """

def get_user(user_id):

question = f"SELECT * FROM customers WHERE id = {user_id}"

consequence = db.execute(question)

return consequence[0] if consequence else None

"""

ARQ_QUESTIONS = """

Earlier than giving your last evaluation, reply every of the next questions so as:

Q1 [Security]: Does this code have any injection vulnerabilities?

If sure, describe the precise assault vector.

Q2 [Error handling]: What occurs if db.execute() throws an exception?

Is that acceptable?

Q3 [Performance]: Does this question retrieve extra knowledge than essential?

What's the price at scale?

This fall [Correctness]: Are there edge instances within the return logic that might

trigger a silent bug downstream?

Q5 [Fix]: Write a corrected model of the operate that addresses

all points discovered above.

"""

baseline_cot = chat(

system="You're a senior software program engineer. Suppose step-by-step.",

consumer=f"Assessment this Python operate:nn{CODE_TO_REVIEW}",

)

arq_result = chat(

system="You're a senior software program engineer conducting a security-aware code evaluation.",

consumer=f"Assessment this Python operate:nn{CODE_TO_REVIEW}nn{ARQ_QUESTIONS}",

)

divider("Baseline (free CoT)")

print(baseline_cot)

divider("ARQ (structured reasoning guidelines)")

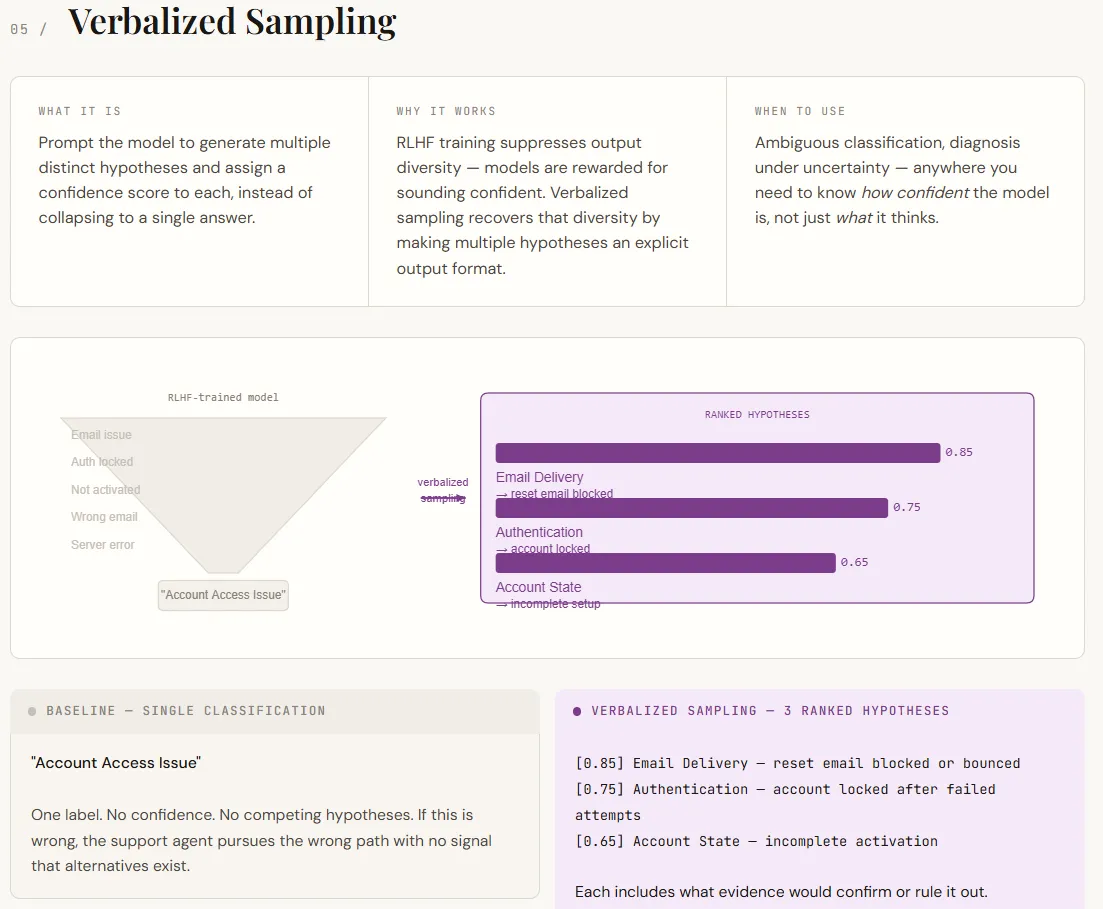

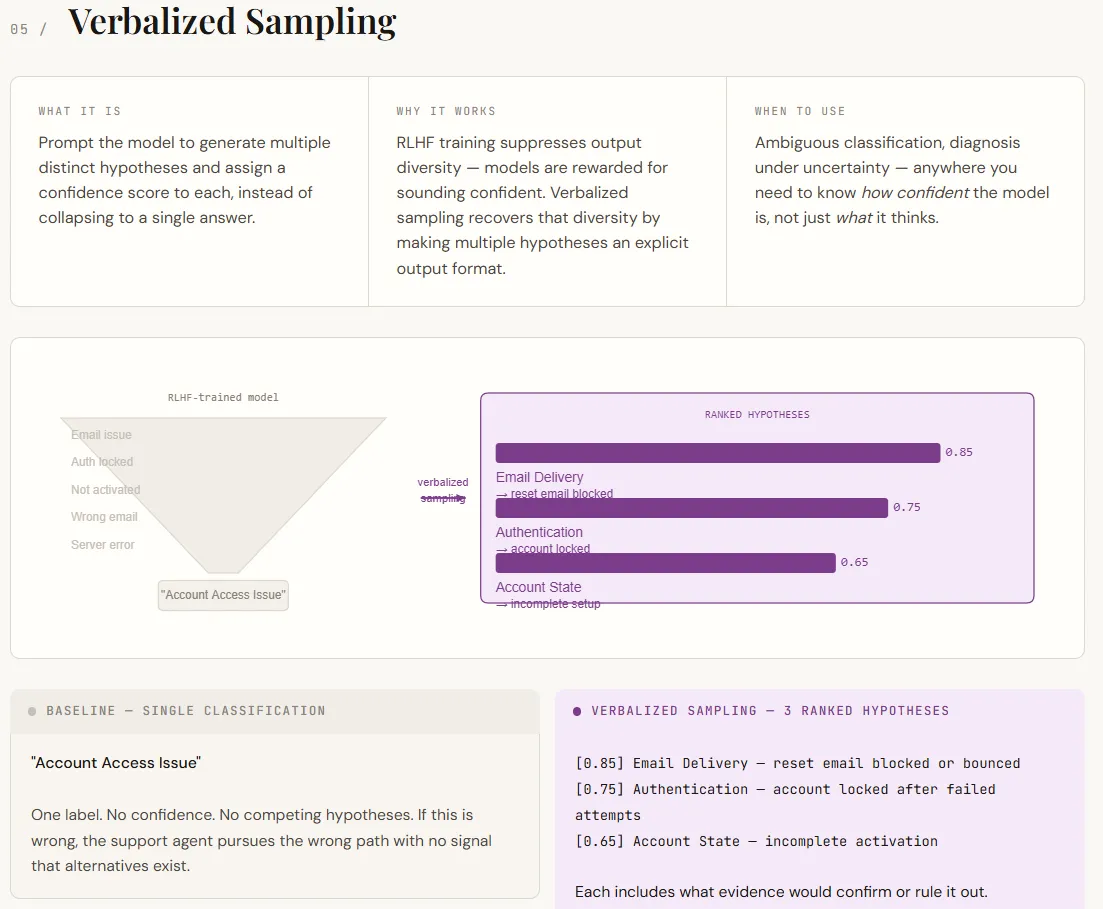

print(arq_result)Verbalized sampling addresses a key limitation of LLMs: they have a tendency to return a single, assured reply even when a number of interpretations are potential. This occurs as a result of alignment coaching favors decisive outputs. In consequence, the mannequin hides its inner uncertainty. Verbalized sampling fixes this by explicitly asking for a number of hypotheses, together with confidence rankings and supporting proof. As a substitute of forcing one reply, it surfaces a variety of believable outcomes—all inside the immediate, with no need mannequin modifications.

In the output, this shifts the result from a single label to a structured diagnostic view. The baseline gives one classification with no indication of uncertainty. The verbalized version lists multiple ranked hypotheses, each with an explanation and a way to confirm or rule it out. This makes the output more actionable, turning it into a decision-making aid rather than just an answer. The confidence scores themselves aren’t precise probabilities, but they do indicate relative likelihood, which is usually sufficient for prioritization and downstream workflows.
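As noted above, the scores are not calibrated probabilities, but they are useful for ranking. Before they can feed a downstream workflow, the free-text reply has to be parsed. The sketch below is minimal. It assumes the model writes each hypothesis with `Category:` and `Confidence:` labels, which the prompt in this section does not strictly require. It also uses hypothetical helpers `extract_hypotheses` and `normalize`. Rescaling so the scores sum to 1 only makes the relative ranking explicit; it does not calibrate the numbers.

```python
import re
from typing import List, Optional, Tuple


def extract_hypotheses(text: str) -> List[Tuple[str, float]]:
    """Pair each 'Category:' line with the next 'Confidence:' line.

    Assumes the model labels its fields exactly this way; the prompt does
    not enforce that, so a stricter output format is safer in practice.
    """
    pairs: List[Tuple[str, float]] = []
    category: Optional[str] = None
    for line in text.splitlines():
        cat = re.search(r"Category:\s*(.+)", line)
        conf = re.search(r"Confidence:\s*([01](?:\.\d+)?)", line)
        if cat:
            category = cat.group(1).strip()
        elif conf and category is not None:
            pairs.append((category, float(conf.group(1))))
            category = None
    return pairs


def normalize(pairs: List[Tuple[str, float]]) -> List[Tuple[str, float]]:
    """Rescale scores to sum to 1 and sort highest first (relative ranking, not calibration)."""
    total = sum(score for _, score in pairs)
    if total == 0:
        return list(pairs)
    return sorted(((cat, score / total) for cat, score in pairs), key=lambda p: p[1], reverse=True)


# Hand-written sample in the assumed format (not real model output); raw scores sum to 1.2.
sample = """Hypothesis 1
- Category: Email Delivery
- Confidence: 0.6
Hypothesis 2
- Category: Account State
- Confidence: 0.3
Hypothesis 3
- Category: Authentication
- Confidence: 0.3"""

ranked = normalize(extract_hypotheses(sample))
print([(cat, round(p, 2)) for cat, p in ranked])
# [('Email Delivery', 0.5), ('Account State', 0.25), ('Authentication', 0.25)]
```

The raw scores in the sample add up to more than 1. That is exactly why they should be read as a ranking signal rather than as probabilities.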

```python
section("TECHNIQUE 5 -- Verbalized Sampling")

SUPPORT_TICKET = """
Hi, I set up my account last week but I can't log in anymore. I tried resetting
my password but the email never arrives. I also tried a different browser. Nothing works.
"""

# Baseline: a single classification with no uncertainty signal
baseline_5 = chat(
    system="You are a support ticket classifier. Classify the issue.",
    user=f"Ticket:\n{SUPPORT_TICKET}",
)

# Ask for several ranked hypotheses, each with a confidence and a way to verify it
verbalized = chat(
    system=(
        "You are a support ticket classifier.\n"
        "For each ticket, generate 3 distinct hypotheses about the root cause. "
        "For each hypothesis:\n"
        "  - State the category (Authentication, Email Delivery, Account State, Browser/Client, Other)\n"
        "  - Describe the specific failure mode\n"
        "  - Assign a confidence score from 0.0 to 1.0\n"
        "  - State what additional information would confirm or rule it out\n\n"
        "Order hypotheses by confidence (highest first). "
        "Then provide a recommended first action for the support agent."
    ),
    user=f"Ticket:\n{SUPPORT_TICKET}",
)

divider("Baseline (single answer)")
print(baseline_5)

divider("Verbalized sampling (multiple hypotheses + confidence)")
print(verbalized)
```
