Your file add works completely in improvement.

You check it regionally. Possibly even with a number of customers. All the things feels easy and dependable.

Then actual customers arrive.

All of a sudden, uploads fail midway. Giant recordsdata outing. Servers decelerate. And customers begin abandoning the method.

That is the place most groups hit a harsh actuality:

What works in improvement not often works at scale.

A scalable file add API isn’t nearly dealing with extra customers. It’s about surviving real-world situations like unstable networks, giant recordsdata, world visitors, and unpredictable conduct.

On this information, you’ll study:

- Why file add methods fail at scale

- The hidden architectural points behind these failures

- How one can design a dependable, scalable add system that really works in manufacturing

Key Takeaways

- File add failures at scale are brought on by concurrency, giant recordsdata, and unstable networks

- Single-request uploads are fragile and unreliable in manufacturing environments

- Chunking, retries, and parallel uploads are important for scalability

- Backend-heavy architectures create efficiency bottlenecks

- Managed options simplify complexity and enhance reliability

Why File Add APIs Work in Testing however Fail in Manufacturing

File add APIs usually really feel dependable throughout testing as a result of every thing occurs beneath best situations corresponding to quick networks, small recordsdata, and minimal visitors. However as soon as actual customers are available with bigger recordsdata, unstable connections, and simultaneous uploads, those self same methods begin to break in methods you didn’t anticipate.

The “It Works on My Machine” Drawback

In improvement, every thing feels predictable. You’re working with a quick, secure web connection, testing with small recordsdata, and normally working only one or two uploads at a time. Underneath these situations, your file add API performs precisely as anticipated. It’s easy, quick, and dependable.

However manufacturing is a totally completely different story.

Actual customers don’t behave like check environments. They add giant recordsdata, typically 100MB or extra. A number of customers are importing on the similar time. And never everybody has a secure connection; some are on sluggish WiFi, others on cellular information with frequent interruptions.

This mismatch between managed testing and real-world utilization is the place issues begin to disintegrate. What appeared like a strong system instantly struggles beneath stress, revealing weaknesses that have been by no means seen throughout improvement.

What “Scale” Actually Means

When individuals discuss scale, they usually suppose it merely means extra customers or extra visitors. However in file add methods, scale is way more advanced than that.

It’s a mixture of a number of components occurring on the similar time. You might need a whole lot of customers importing recordsdata concurrently, every with completely different file sizes; some small, some extraordinarily giant. On high of that, these customers are unfold throughout completely different places, all connecting via networks that fluctuate in pace and reliability.

All of those variables mix to create stress in your system in ways in which aren’t apparent throughout testing. A setup that works completely for 10 uploads can begin to wrestle and even fail utterly when it has to deal with 1,000 uploads beneath real-world situations.

7 Causes Your File Add API Fails at Scale

When add methods begin failing in manufacturing, it’s not often on account of a single situation. Extra usually, it’s a mixture of architectural choices that work advantageous in small-scale environments however break beneath real-world stress. Let’s stroll via the most typical causes this occurs.

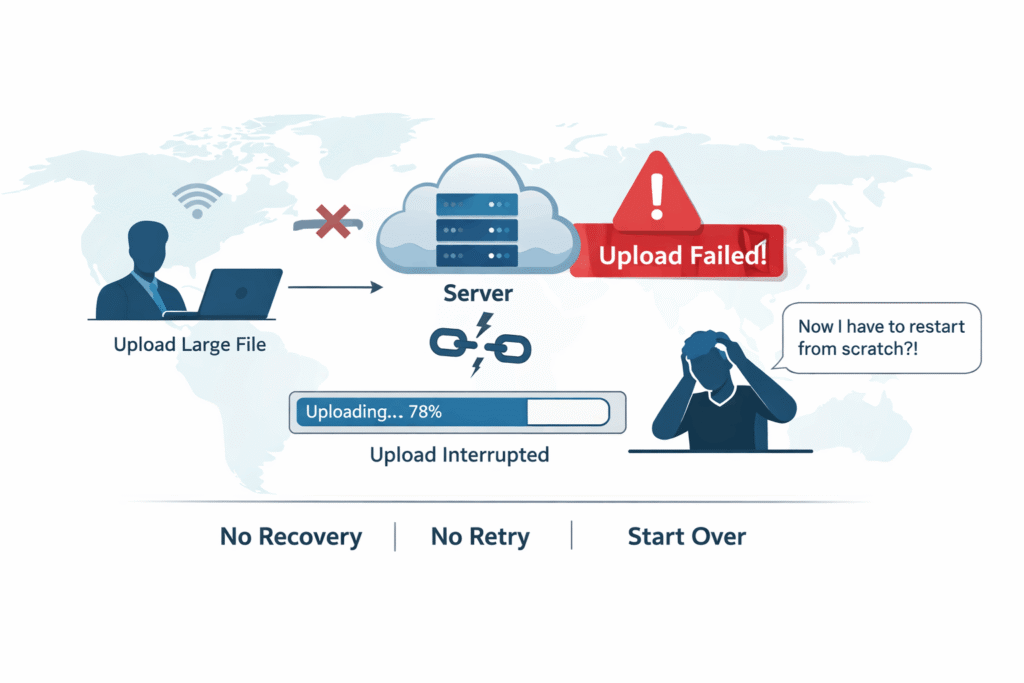

1. Single Request Add Structure

Some of the widespread errors is attempting to add a complete file in a single request. It appears easy and works properly throughout testing, but it surely turns into extraordinarily fragile at scale.

In real-world situations, even a small interruption like a quick community drop or a timeout could cause your complete add to fail. And when that occurs, the person has to begin over from the start. There’s no restoration mechanism, no retry logic, and no solution to resume progress. It’s all or nothing.

2. No Chunking or Resumable Uploads

With out chunking, your add system has no flexibility. Information are handled as one giant unit, which implies any failure resets your complete course of.

This leads to a couple main issues:

- Customers should restart uploads from zero after any interruption

- Frustration will increase, particularly with giant recordsdata

- Completion charges drop considerably

At scale, this method merely doesn’t maintain up. Resumable uploads aren’t a “nice-to-have” function; they’re a necessity for sustaining reliability and person belief.

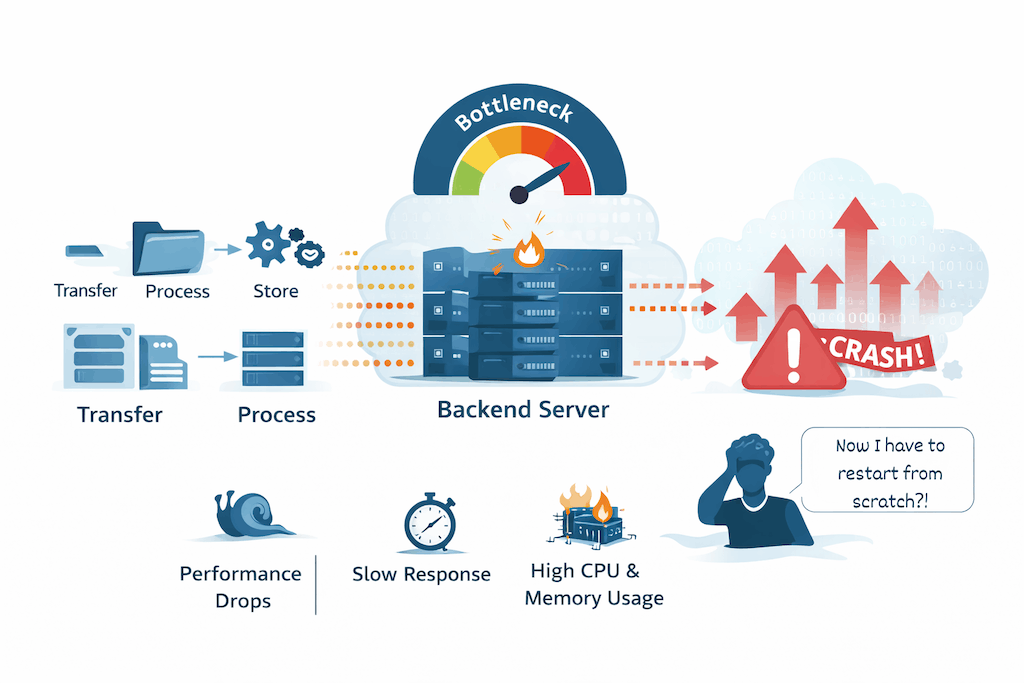

3. Backend Bottlenecks

Many methods route file uploads via their backend servers. Whereas this would possibly appear to be an easy method, it shortly turns into a bottleneck as utilization grows.

Your backend finally ends up doing every thing:

- Dealing with file transfers

- Processing uploads

- Storing information

As visitors will increase, this creates heavy stress in your server’s CPU and reminiscence. Efficiency begins to degrade, response instances improve, and in some instances, the system may even crash beneath load.

4. Poor Community Failure Dealing with

In improvement, networks are secure. In manufacturing, they’re not.

Customers expertise:

- Sudden connection drops

- Fluctuating bandwidth

- Packet loss

In case your system isn’t designed to deal with these points, uploads will fail unpredictably. With out correct retry logic or restoration mechanisms, these failures usually occur silently, leaving customers confused and annoyed.

5. Lack of Parallel Add Technique

Importing recordsdata one after one other may appear environment friendly in small-scale situations, but it surely doesn’t work properly when demand will increase.

Sequential uploads:

- Take longer to finish

- Underutilize out there assets

- Decelerate the general expertise

At scale, this results in noticeable delays and poor efficiency. Programs that don’t help parallel uploads wrestle to maintain up with person expectations.

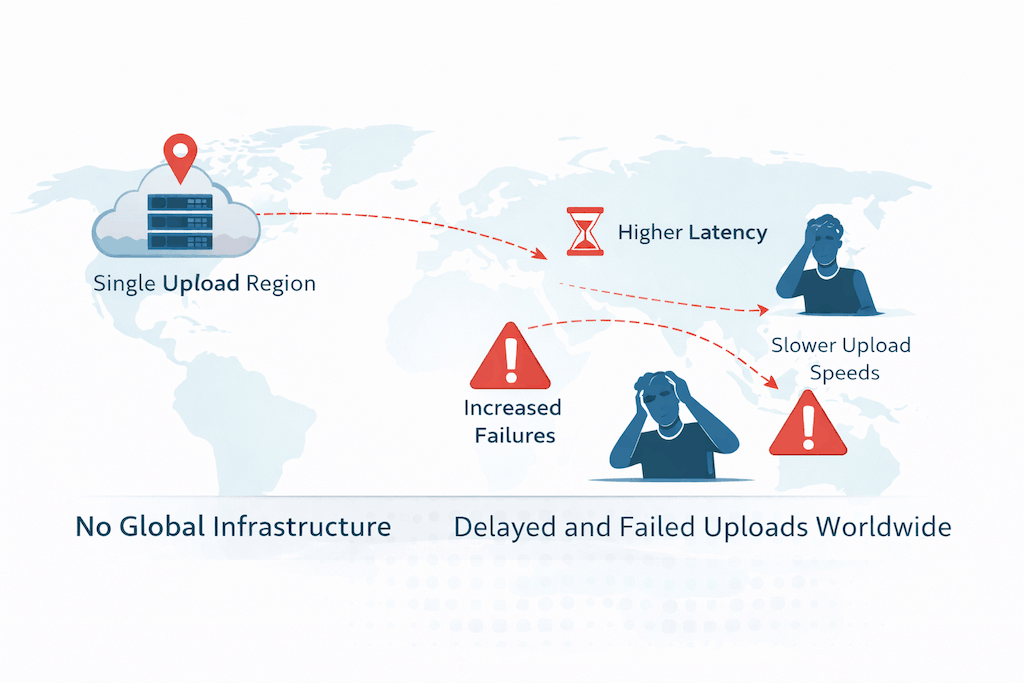

6. No International Infrastructure

In case your add system is tied to a single area, customers in different components of the world will really feel the impression instantly.

They expertise:

- Greater latency

- Slower add speeds

- Elevated probabilities of failure

As your person base grows globally, these points develop into extra pronounced. With out distributed infrastructure, your system merely can’t ship constant efficiency.

7. Lacking File Validation and Processing Technique

At scale, file uploads contain extra than simply storing information. You should handle what’s being uploaded and the way it’s dealt with.

This contains:

- Validating file sorts

- Imposing measurement limits

- Changing codecs when wanted

- Extracting metadata

If these processes aren’t automated, your system turns into inconsistent and more durable to keep up. Errors improve, edge instances pile up, and the general reliability of your add pipeline begins to say no.

What Occurs When Add Programs Fail

When a file add system begins failing, the impression goes far past only a damaged function. It creates a ripple impact throughout customers, enterprise efficiency, and engineering groups, usually abruptly.

Consumer Affect

From a person’s perspective, even a single failed add feels irritating. The expertise shortly breaks down when uploads stall midway or fail with out clear explanations. Most customers don’t perceive what went incorrect. They simply see that it didn’t work.

They struggle once more. And typically once more.

However after a number of failed makes an attempt, persistence runs out. Many customers merely abandon the method altogether, particularly if the duty feels time-consuming or unreliable.

Enterprise Affect

These small moments of frustration add up shortly on the enterprise stage. Failed uploads can immediately impression conversions, particularly in workflows like onboarding, content material submission, or transactions that rely on file uploads.

Over time, this results in:

- Decrease conversion charges

- Interrupted or failed transactions

- A noticeable improve in help requests

Extra importantly, it damages belief. If customers really feel like your platform isn’t dependable, they’re far much less more likely to come again.

Engineering Affect

Behind the scenes, failing add methods put fixed stress on engineering groups. As a substitute of constructing new options, builders find yourself spending time debugging points in manufacturing.

This usually results in:

- Ongoing firefighting and reactive fixes

- Rising infrastructure and upkeep prices

- Rising issue when attempting to scale additional

What begins as a small technical situation can shortly flip right into a long-term operational burden if not addressed correctly.

How one can Construct a Scalable File Add API

Now let’s transfer from issues to options. Constructing a scalable file add API isn’t about one single repair; it’s about combining the precise methods to deal with real-world situations reliably.

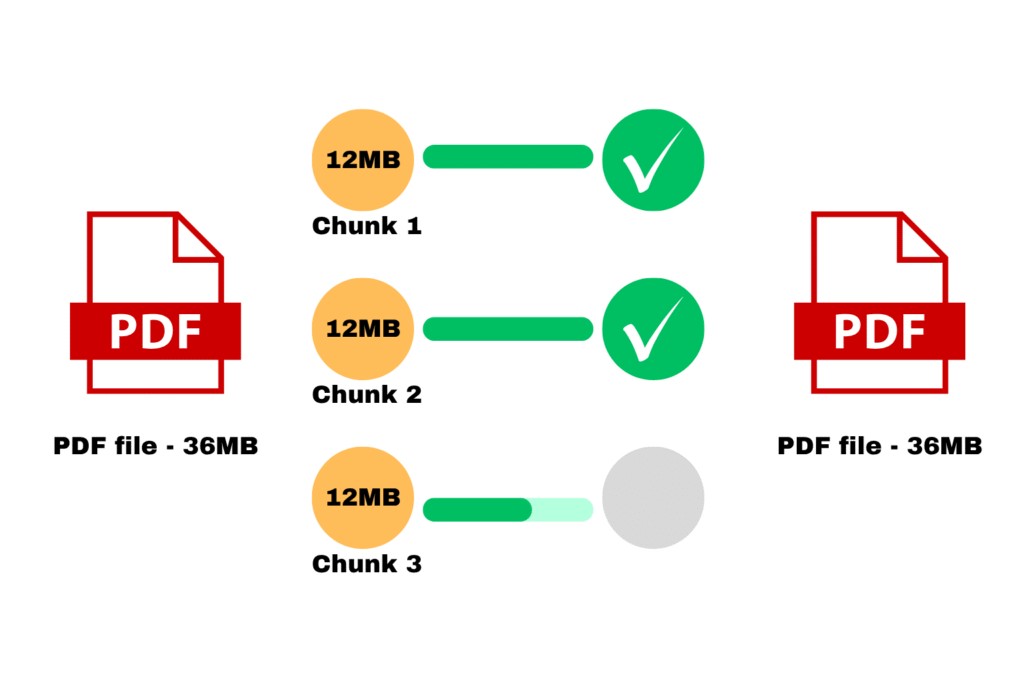

1. Implement Chunked Uploads

As a substitute of importing a complete file in a single go, break it into smaller items. Every chunk could be uploaded independently, which makes the method way more resilient.

If one thing fails, you don’t should restart every thing. Solely the failed chunks should be retried, permitting customers to renew uploads with out shedding progress. This easy shift dramatically improves reliability, particularly for giant recordsdata and unstable networks.

Parallel chunk file importing

2. Add Clever Retry Logic

Failures are inevitable, so your system needs to be designed to deal with them gracefully.

A sturdy add system contains:

- Computerized retries when a bit fails

- Exponential backoff to keep away from overwhelming the community

- The flexibility to get well partially accomplished uploads

As a substitute of treating failures as exceptions, you deal with them as anticipated occasions and that’s what makes the system resilient.

3. Use Direct-to-Cloud Uploads

Routing recordsdata via your backend may appear logical at first, but it surely doesn’t scale properly. A greater method is to add recordsdata immediately from the person to cloud storage.

The stream turns into easy:

Consumer → Cloud Storage

This method reduces the load in your servers, hastens uploads, and removes a serious bottleneck out of your structure. It additionally permits your backend to deal with what it does greatest, as an alternative of dealing with heavy file transfers.

4. Allow Parallel Importing

Importing recordsdata or chunks one after the other is inefficient, particularly when customers are coping with giant recordsdata.

By permitting a number of chunks to add concurrently, you’ll be able to considerably enhance efficiency. This results in sooner add instances, higher use of accessible bandwidth, and a smoother expertise total.

5. Present Correct Progress Suggestions

From the person’s perspective, visibility is every thing. In the event that they don’t know what’s occurring, even a working add can really feel damaged.

That’s why it’s essential to indicate:

- Actual-time progress indicators

- Clear add standing updates

- Significant error messages when one thing goes incorrect

This not solely reduces frustration but additionally builds belief in your system.

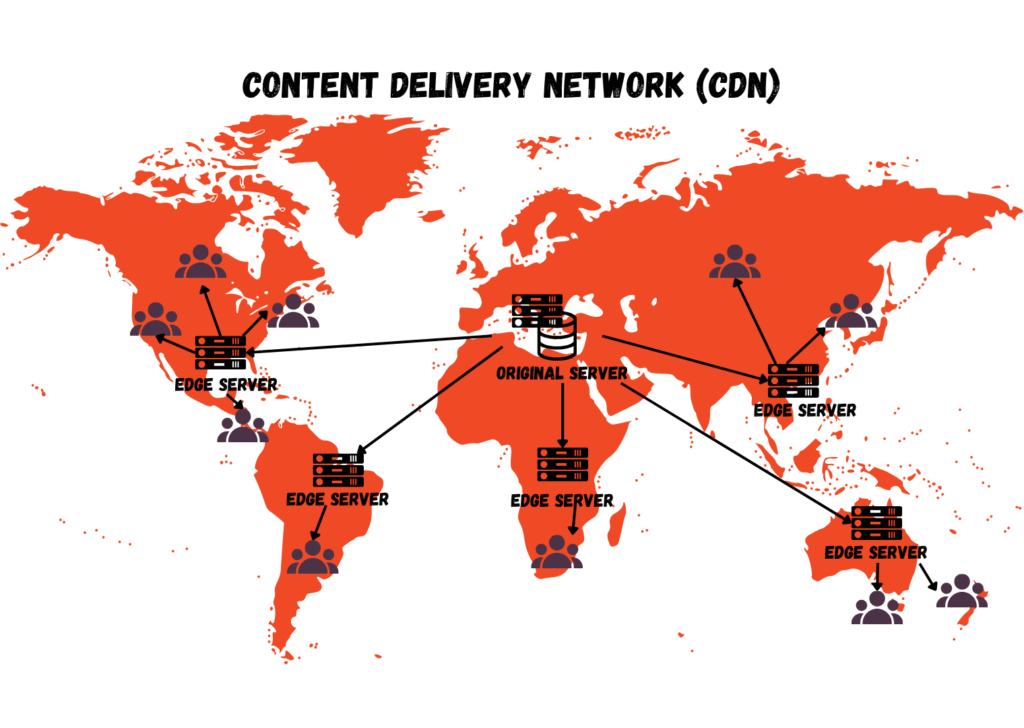

6. Optimize for International Efficiency

In case your customers are unfold throughout completely different areas, your add system must help that.

Utilizing globally distributed infrastructure, corresponding to CDN-backed uploads, regional endpoints, and edge networks helps be sure that customers get constant efficiency regardless of the place they’re. It reduces latency, hastens uploads, and lowers the probabilities of failure.

A content material supply community (CDN)

7. Automate File Processing

At scale, handbook dealing with of recordsdata isn’t sensible. Your system ought to routinely handle every thing that occurs after add.

This contains:

- Compressing recordsdata

- Changing codecs

- Validating file sorts and sizes

- Optimizing content material for supply

Automation retains your workflow constant, reduces errors, and ensures your system can deal with rising demand with out added complexity.

Why Constructing This Internally Will get Difficult

At first, file uploads appear easy.

Only a file enter and an API endpoint.

However at scale, complexity grows shortly:

- Chunk administration

- Retry methods

- Distributed structure

- Storage integrations

- Safety necessities

What begins as a easy function turns into a long-term engineering problem.

How Managed Add APIs Clear up These Issues

As a substitute of constructing every thing from scratch, many groups use managed options like Filestack.

These platforms are designed particularly to deal with scale.

Key Capabilities

- Constructed-in chunking and resumable uploads

- Direct-to-cloud infrastructure

- International CDN supply

- Automated file processing

- Safety and validation options

This enables groups to deal with their product as an alternative of infrastructure.

Instance Implementation Strategy

A typical implementation is simple:

- Combine the add SDK into your frontend

- Configure storage and safety insurance policies

- Allow chunking and retry logic

- Join uploads on to cloud storage

Generally, you’ll be able to go from setup to production-ready uploads in a fraction of the time it might take to construct every thing internally.

Conclusion

File add APIs don’t fail due to small bugs.

They fail as a result of they aren’t designed for real-world scale.

A really scalable file add API requires:

- Chunked uploads

- Retry mechanisms

- Direct-to-cloud structure

Constructing this from scratch is feasible—however advanced.

For many groups, the smarter method is to take away failure factors as an alternative of including complexity.

As a result of on the finish of the day, the purpose isn’t simply to add recordsdata.

It’s to verify uploads work reliably—each single time.