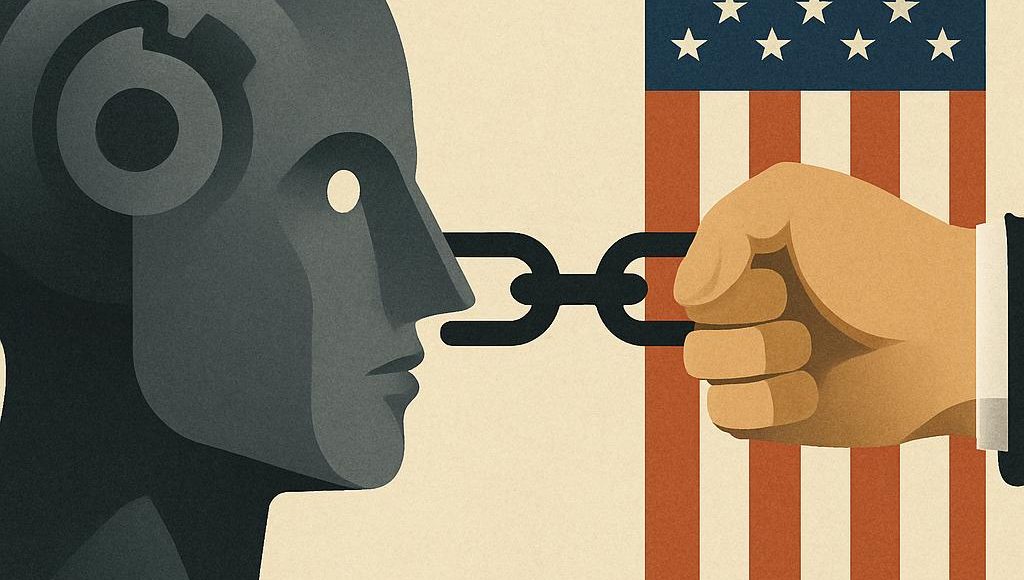

US Threats to Anthropic And The Future Of AI

The US government is shifting from enthusiastic AI booster to assertive rule setter, and Anthropic, the safety-focused lab behind Claude, now sits in the path of that turn. In 2023, the US accounted for about 60% of worldwide frontier AI model training runs, according to the Stanford AI Index, so decisions made in Washington can reshape which tools students, developers, and companies can rely on worldwide. As officials float tougher scrutiny of frontier labs and hint at “further action” against companies like Anthropic, the outcome will affect not just one company’s fate but also global norms on safety, openness, and innovation. If you use advanced AI for study, work, or research, understanding these US threats is no longer a niche policy concern; it is a practical question about whether your favorite tools will still be there next semester or next quarter.

Key Takeaways

- US policy decisions on national security, competition, and safety could materially restrict or reshape Anthropic’s products, partnerships, and growth.

- Anthropic’s safety-focused identity can be both a shield and a target, attracting cooperation with regulators while inviting deeper scrutiny and obligations.

- Export controls, licensing rules, and antitrust actions are the most realistic channels for serious US government pressure on frontier AI labs.

- How US institutions treat Anthropic will help set global precedents for AI governance, access, and the balance between innovation and control.

Your AI Future May Change Sooner Than You Expect

Imagine Losing Access to Claude Mid-Semester

Picture a student who relies on Anthropic’s Claude models for drafting essays, debugging code, and exploring complicated readings in policy or biology. These tools quietly weave themselves into daily routines, especially once instructors and employers start expecting AI-assisted output. Now imagine that overnight, a new US rule forces Anthropic to restrict certain regions or education programs from accessing its most capable models. The student logs in to Claude during midterms and sees a message explaining that access has been restricted under updated US compliance requirements. Deadlines remain, expectations remain, and yet the primary AI assistant has changed behavior or disappeared for reasons that feel distant and political. This scenario feels dramatic, yet it captures the downstream impact that national security and export control decisions can trigger when frontier AI sits within a small number of US-controlled labs.

Why One Policy Fight Matters For Everyone Using AI

Most people don’t follow US congressional hearings or executive orders until those decisions suddenly touch the tools they use every day. Anthropic operates in a category called frontier AI, meaning general-purpose models at the cutting edge of capability and potential risk, and US officials increasingly treat such systems as strategic assets. In 2023, the White House AI Executive Order directed the Department of Commerce to explore requirements for companies training large models above specific compute thresholds, a category that clearly includes Anthropic and other leading labs. When a US administration signals that it might pursue “further action” against a particular lab, investors, universities, startups, and foreign governments pay attention. This isn’t only about one company’s regulatory headaches, since decisions taken in Washington can influence which safety techniques become mandatory, which business models survive, and who gets to access the most powerful AI systems. For readers who simply want reliable tools, the stakes revolve around continued access, trust in safeguards, and the pace of improvement in models like Claude.

Anthropic, Ownership, And Why Washington Cares

What Is Anthropic?

Anthropic is a San Francisco-based artificial intelligence company that describes itself as an AI safety and research lab focused on building helpful, honest, and harmless systems. It was founded in 2021 by former OpenAI researchers, including Dario Amodei and Daniela Amodei, who left over disagreements about safety and direction. The company is best known for its Claude family of models, which compete with tools like OpenAI’s GPT series and Google’s Gemini for tasks such as writing, coding, analysis, and conversation. Anthropic’s researchers helped popularize the idea of “Constitutional AI,” a training method that guides models using a written set of principles rather than relying purely on reinforcement from human feedback. For readers new to this space, it can help to see Anthropic as both a product company and a policy experiment in how to embed safety values into frontier AI from the start, an approach that regulators watch very closely.

Who Owns Anthropic?

Anthropic is a privately held company with a complex cap table that blends founders, employees, venture backers, and major strategic investors like Amazon and Google. In 2023, Amazon announced up to 4 billion dollars in investment in Anthropic, including an initial 1.25 billion dollar commitment, in exchange for making Amazon Web Services a primary cloud provider for model training and deployment. Google’s parent company Alphabet has also invested hundreds of millions of dollars and hosts some Anthropic workloads on Google Cloud, so two US tech giants hold significant stakes and operational influence. Public reporting from The Information and other outlets has placed Anthropic’s valuation in the 15 to 20 billion dollar range after these funding rounds, although the company remains far from a public listing. No government owns Anthropic, yet the combination of US investor control, US-based infrastructure, and US staff makes the company deeply exposed to American law and policy. For regulators, this ownership mix raises questions about competition, concentration of AI power, and the leverage that large cloud providers might exercise over frontier research labs.

Why Is The US Government Concerned About Anthropic?

US concern about Anthropic forms part of a broader unease about all frontier AI labs, rather than a unique obsession with one firm. Officials worry that extremely capable models could be misused for cyber attacks, biological weapon design, or large-scale disinformation, themes that appear in the White House AI Executive Order and in Department of Defense discussions. Because Anthropic trains models at scale using advanced chips like NVIDIA’s A100 and H100, its work intersects directly with export controls and national security debates about China and other competitors. Lawmakers also see concentration of power among a handful of labs tied to big tech platforms and ask whether that structure threatens competition or public accountability. Agencies such as the Federal Trade Commission have warned AI companies against overstating safety claims or underplaying risks, which is particularly relevant for a firm that markets itself as safety first. When Anthropic executives testify before Congress, they are often treated both as partners in managing risk and as potential subjects of future oversight, a dual role that creates strategic uncertainty for product planning and partnerships.

How US Politics Turned Frontier Labs Into Strategic Assets

From AI Darlings To National Security Priorities

In the early wave of public excitement around tools like ChatGPT and Claude, much of the political conversation focused on innovation, productivity, and competitiveness. Within a year, that tone shifted as national security agencies, including the Department of Defense and the intelligence community, began describing advanced AI as critical infrastructure, comparable in sensitivity to satellites or cryptography. The Stanford AI Index reported that in 2023, more than 80% of large language models with publicly known training locations were trained in the United States, which concentrated capability and scrutiny in a single jurisdiction. Policy experts at organizations like the Center for Security and Emerging Technology and RAND began publishing detailed reports about how generative models could lower barriers for cybercrime or biological threats. This kind of analysis influenced White House thinking and gave political cover to moves like directing NIST to produce the AI Risk Management Framework, a voluntary but influential guidance document. As a result, companies like Anthropic now find themselves treated less like ordinary software startups and more like dual-use technology vendors whose work touches defense, intelligence, and global power balances, an evolution that affects everything from hiring to release cadence.

What “Further Action” Against Anthropic Could Actually Mean

When a US administration says it will not rule out “further action” against a specific AI company, that phrase can sound like a threat of shutdown, yet the realistic tools are more nuanced. In practice, Washington can open investigations through agencies like the FTC or Department of Justice, which might examine claims about safety, data use, or competition concerns involving partners such as Amazon and Google. Officials can also tighten export controls on advanced chips or on providing model access to certain foreign users, which could indirectly pressure Anthropic’s growth strategy without directly targeting the company by name. In extreme cases, national security reviews by bodies like the Committee on Foreign Investment in the United States, known as CFIUS, might examine particular investment arrangements or data flows if foreign investors are involved. Another plausible form of “further action” is to require licensing or registration for training models above a defined compute threshold, which the White House executive order already directed agencies to explore and which fits into broader AI governance trends. What many users underestimate is that these bureaucratic-sounding steps can translate into slower releases, regional restrictions, or more limited model capabilities in everyday tools.

The Main US Policy Tools That Could Pressure Anthropic

Regulatory Levers Washington Can Pull

Several branches of the US government already hold concrete tools that could change Anthropic’s trajectory without passing brand-new laws. The Federal Trade Commission can investigate whether marketing claims about safety, reliability, or training data privacy are deceptive or unfair, as FTC Chair Lina Khan has warned in blog posts about AI hype. The Department of Justice and state attorneys general can look at whether Anthropic’s partnerships with Amazon and Google reduce competition in cloud services or AI markets, drawing on recent antitrust cases against Google and Meta as templates. The Department of Commerce, through the Bureau of Industry and Security, controls export licenses for advanced chips and may extend certain export restrictions to cover access to model weights or highly capable hosted systems. National security agencies, such as the Department of Defense, can shape behavior by tying contract opportunities to specific safety, audit, and access control requirements, which can become de facto standards for the whole sector. Congress can also create liability rules for AI harms, and even without new statutes, plaintiffs can try to use existing product liability or consumer protection doctrines to sue labs over misuse, which could push regulators to clarify expectations. For a company like Anthropic, each of these levers adds a different kind of strategic risk, from compliance cost and delay to potential fines or structural changes.

Myth Versus Reality About A Potential Crackdown

A common mistake in public debate is the assumption that US officials want to shut down frontier AI labs entirely, which does not match current policy documents or public hearings. Lawmakers from both parties repeatedly state that they view advanced AI as essential to economic growth and national defense, and they worry that too much restriction would hand an advantage to rivals like China and accelerate the AI arms race. The more realistic scenario involves gradually tighter obligations such as mandatory red teaming, third-party safety audits, and incident reporting, similar to patterns seen in cybersecurity regulation. Another misconception is that if Anthropic were severely constrained, alternative tools would immediately fill the gap at the same level of capability and trustworthiness. In reality, most frontier labs depend on similar US-based compute and supply chains, and open source models, while powerful, may not yet match closed systems like Claude on safety alignment for the most hazardous tasks. That means heavy-handed or poorly tailored actions could narrow the frontier model ecosystem rather than simply shifting usage from one company to another, a risk that policymakers and users often overlook.

Why Policy Hits Anthropic, OpenAI, And Google Differently

Key Differences Between Anthropic And OpenAI

Anthropic and OpenAI are often grouped together as frontier labs, yet their governance and positioning create different policy exposures. OpenAI operates under a capped-profit structure anchored by a nonprofit, with Microsoft as a major investor and its principal cloud partner. Anthropic, by contrast, is organized as a for-profit public benefit corporation, and it deliberately split major investments between Amazon and Google rather than relying on a single big partner. OpenAI leans heavily on mass consumer deployment through ChatGPT, which raises content moderation, child safety, and misinformation questions for regulators worldwide. Anthropic, at least so far, has focused more on enterprise and developer APIs, which shifts attention toward security, reliability, and compliance in professional settings. In public testimony and blog posts, Anthropic leaders repeatedly foreground Constitutional AI and alignment research, while OpenAI tends to highlight a mix of openness, ubiquity, and safety, which creates different expectations when policymakers judge performance against promises. These distinctions matter when agencies choose targets for antitrust suits, consumer protection actions, or partnership contracts that may reshape market structure.

How US Policy Exposure Varies Across Major Labs

US regulatory and political risks do not fall evenly across Anthropic, OpenAI, Google DeepMind, and Meta, because each organization has a different mix of business lines and public narratives. Google and Meta already face long-running antitrust and privacy battles, so any AI investments can be seen as extensions of existing dominant platforms, a context that Anthropic partly avoids as a newer independent firm. OpenAI’s close relationship with Microsoft concentrates risk around a single corporate alliance, raising questions at the Department of Justice and European Commission about bundling AI into Windows and Office. Meta’s decision to release open-weight Llama models has attracted attention from security experts and policymakers worried that widely diffused powerful systems could be harder to control, even though supporters argue that openness improves oversight. Anthropic’s heavy reliance on external cloud providers makes it vulnerable to shifts in export controls and data residency rules, because it cannot fully reconfigure infrastructure without cooperation from partners. When the White House or Congress designs rules around frontier models, each lab’s architecture, funding, and branding influence how costly compliance will be and how credible its input appears during negotiations.

Comparison Table: Policy Exposure Across Major AI Labs

The following comparison highlights how different AI labs might experience US policy pressure when regulators focus on safety, competition, and national security.

| Factor / Risk | Anthropic | OpenAI | Google / DeepMind | Meta (Llama) |
|---|---|---|---|---|
| Main business model | API and enterprise access to Claude models | API, ChatGPT consumer apps, enterprise products | Integrated AI across search, cloud, and productivity tools | Open-weight models plus integration into social platforms |
| Safety branding | Strong AI safety and alignment emphasis | Safety plus rapid product launches | Responsible AI framed within big tech governance | Open innovation posture with guardrails messaging |
| Big tech dependence | Significant reliance on Amazon and Google cloud | Deep integration with Microsoft Azure | Primarily in-house data centers and infrastructure | In-house infrastructure with some external partners |
| Main US policy risks | Frontier model controls, export compliance, partnership scrutiny | Licensing, safety obligations, competition questions | Antitrust, content regulation, privacy enforcement | Content moderation, antitrust, open model misuse concerns |

Seeing Anthropic against this landscape clarifies that its biggest vulnerabilities lie where safety and national security concerns intersect with concentrated compute and cloud partnerships, rather than in the consumer-facing moderation fights that dominate debate about social networks.

Timeline: How US Scrutiny Of Frontier AI Has Escalated

Key Moments In Government Attention To Anthropic

Anthropic’s story unfolds in parallel with a rapid shift in US AI governance, and mapping that timeline helps explain why threats from Washington have grown sharper. In 2021 and 2022, Anthropic raised its first major funding rounds and published early research on Constitutional AI, while US debate centered mostly on algorithmic bias and social media recommendation systems. In October 2022, the Biden administration released the Blueprint for an AI Bill of Rights, followed in October 2023 by the AI Executive Order, which addressed frontier-scale models and directed agencies to work with labs, including Anthropic, on safety testing and reporting. In 2023, Anthropic also joined the Frontier Model Forum with OpenAI, Microsoft, and Google, committing to voluntary safety measures such as red teaming and vulnerability disclosure programs. In 2024, congressional hearings began drilling into the details of compute thresholds, licensing, and export rules, and Anthropic’s leaders testified about the need for careful but firm oversight of the most capable systems. As political control shifted and some officials began signaling that stronger measures against individual labs were on the table, analysts started treating Anthropic as a bellwether case for how the US might discipline frontier AI players and manage global AI competitiveness.

Election Cycles And Shifting AI Rhetoric

Election years tend to amplify concerns about technology, and AI now sits at the center of debates over jobs, national security, and information integrity. Candidates in both major US parties promise to be tough on big tech while also endorsing innovation, a balancing act that can create sudden rhetorical swings between praise for AI leadership and calls to crack down on perceived threats. Policy researchers at Brookings and the Carnegie Endowment note that emerging technology often becomes a symbolic issue in campaigns, where nuanced regulation gives way to headline-friendly proposals. This political volatility matters for Anthropic because new appointees at agencies like the FTC, DOJ, and Commerce can reinterpret existing authorities without waiting for Congress to pass detailed AI laws. Some election platforms emphasize protecting jobs from automation and limiting corporate control over AI, which could translate into stronger labor and competition oversight of labs that supply enterprise automation tools. Other platforms prioritize strategic rivalry with China and therefore favor heavy support for domestic leaders like Anthropic while demanding strict controls on model exports and access, a pattern that fits into narratives about whether China is advancing faster in AI. The outcome of these cycles will influence whether Anthropic faces a more cooperative or a more confrontational policy environment in the next few years.

Inside Anthropic’s Safety And Governance Methods

How Constitutional AI And Safety Evaluations Work

Understanding Anthropic’s technical approach helps explain why US regulators watch it closely and sometimes treat it as a model for safer practices. Constitutional AI, introduced in Anthropic research papers, trains models to follow a written set of principles derived from documents like the UN Universal Declaration of Human Rights and other normative sources. During training, these principles guide the model’s behavior through automated feedback and human review, teaching it to refuse harmful requests such as instructions for building weapons, while still assisting on benign tasks. Anthropic publicly describes extensive red teaming of Claude models, where internal teams and external partners probe for vulnerabilities in areas like cybersecurity, biology, and persuasion. The company has participated in government-organized evaluations, including red teaming events hosted at the UK AI Safety Summit, which align with NIST’s AI Risk Management Framework that encourages systematic testing, transparency, and documentation. These technical and procedural steps show up in Anthropic’s model cards and system reports, which regulators and enterprise buyers increasingly use as reference points for what “responsible AI” looks like in practice. Many observers underestimate how resource-intensive this evaluation pipeline is, since it requires specialized domain experts and continuous iteration as models evolve and as regulatory expectations expand.

Operational Security, Export Controls, And Compute Management

The US government’s concern about model weights leaking or being stolen by adversaries has pushed Anthropic and its peers to adopt stricter operational security practices. That includes segregated training environments on cloud platforms, fine-grained access control for sensitive model artifacts, and logging systems that track who interacts with internal tools, in line with guidance from agencies like CISA on protecting critical software infrastructure. Anthropic must also navigate US export control rules that restrict the sale of advanced chips and, increasingly, limit certain AI services to regions of concern, especially where there is perceived risk of military or surveillance use. Reports from CSET estimate that a very high share of top-tier AI accelerators originate from US companies such as NVIDIA, which means denial of export licenses or new rules on remote access can ripple directly into Anthropic’s training schedules. To manage costs and compliance, the lab has to plan compute usage years ahead, negotiate capacity with Amazon and Google, and design model architectures that can scale under constrained hardware budgets. These operational realities shape everything from release timing to which research directions are feasible, since some explorations of larger or more specialized models might be delayed if they threaten to exceed regulatory thresholds or cloud capacity commitments.

Three Overlooked Realities About US Threats To Anthropic

Hidden Infrastructure And Compliance Costs

One insight that many public discussions miss is the sheer cost of complying with evolving US AI rules, particularly for a company that markets safety as a core product feature. Building internal policy teams, maintaining audit trails that meet possible NIST or FTC expectations, and integrating red teaming results into product workflows all require significant staff and tooling. As regulators request more detailed reporting on training runs, safety incidents, and mitigation plans, Anthropic must divert engineering talent from pure research into documentation and governance systems. This burden might be manageable for a well-funded lab, yet it creates a competitive moat that disadvantages smaller entrants and open source projects that cannot match compliance expenditure. When policy commentators describe safety requirements as light-touch or voluntary, they often ignore these opportunity costs that shape which organizations can realistically participate at the frontier. For Anthropic, accepting this overhead is partly a strategic choice, since it reinforces its reputation with policymakers, but it also locks the company into a path where failure to meet rising standards could be judged more harshly than for less vocal competitors.

Dependence On A Narrow Chip And Cloud Supply Chain

Another underexplored issue lies in Anthropic’s dependence on a narrow set of hardware and cloud providers that are themselves targets of US regulatory and geopolitical pressure. Training Claude-level models typically requires tens of thousands of advanced GPUs, and right now that means processors designed by US firms and manufactured through complex global supply chains monitored by export control agencies. If Washington broadens restrictions on selling or remotely providing access to specific chip generations for models above certain sizes, Anthropic would have limited immediate options for alternative hardware. Cloud partners like Amazon and Google also must comply with sanctions, intelligence community guidelines, and potential requests for government access under laws such as the CLOUD Act, all of which can influence Anthropic’s architecture decisions. This stack of dependencies means that even if US regulators never name Anthropic directly, policy changes aimed at chips, cloud, or data flows can affect the company’s capabilities and costs. Many practitioners inside large AI organizations now devote as much effort to anticipating these supply chain constraints as they do to designing new model architectures, which increases planning complexity and risk.

Reputational Risk From Being The “Safety Lab”

A third gap in common analysis concerns the reputational dynamics that come with branding Anthropic as the safety-centered alternative to other frontier labs. This identity opens doors in Washington and at international summits, because officials want to showcase partners who appear aligned with responsible AI principles. That same image, however, creates expectations that Anthropic will push for and comply with the strictest possible norms, which can turn into pressure if incidents occur or if internal research reveals worrying capabilities. Civil society groups and academic researchers may hold Anthropic to a higher standard than they apply to firms that do not emphasize safety, scrutinizing deployment decisions for signs of compromise under investor or partner pressure. Investors, for their part, might question how far the company is willing to slow or limit product rollouts in response to policy concerns, particularly if rivals move faster. This combination of political access and heightened scrutiny makes Anthropic uniquely sensitive to US threats that touch its safety narrative, such as inquiries into whether Claude’s guardrails genuinely prevent high-risk misuse in areas like biosecurity or advanced cyber operations.

Real World Case Studies: Policy, Safety, And Frontier Labs

Microsoft And OpenAI Under US Antitrust And Safety Spotlight

One instructive case involves the growing antitrust and safety scrutiny of Microsoft’s partnership with OpenAI, which signals how US regulators might approach Anthropic’s alliances. The Federal Trade Commission opened an inquiry in 2024 into whether Microsoft’s relationship with OpenAI should be treated as a de facto acquisition, despite not following traditional merger processes. At the same time, members of Congress questioned whether integrating GPT models deeply into Windows and Office centralizes too much AI power under a single corporate umbrella. Microsoft responded by emphasizing safety investments and oversight structures, including internal Responsible AI offices, as evidence that powerful models can be monitored and constrained. This experience suggests that similar questions could arise around Amazon’s and Google’s influence over Anthropic, especially if Claude becomes embedded widely in cloud and productivity suites. It also demonstrates that safety narratives, while helpful, do not prevent regulators from probing market structure and control over frontier AI capabilities.

Google DeepMind’s Engagement With UK And US Safety Initiatives

Another relevant example comes from Google DeepMind’s participation in the UK AI Safety Summit and related transatlantic policy work, which foreshadows how Anthropic might be integrated into formal governance efforts. At the 2023 summit, DeepMind presented technical papers and joined other labs, including Anthropic and OpenAI, in committing to model evaluations by independent experts from academia and government. The UK government established an AI Safety Institute that now collaborates with NIST and US agencies on shared evaluation methods, creating cross-border norms for how to test frontier systems for dangerous capabilities. For DeepMind, this involvement strengthens its reputation as a responsible actor, but it also binds the company to emerging standards that may later inform binding regulation. Anthropic, which took part in similar discussions and demonstrated Claude’s safety features at the summit, faces a comparable path where voluntary commitments become reference points for US policymakers. This case illustrates how international safety diplomacy can turn into de facto regulatory baselines that frontier labs must internalize in their research and product cycles.

Meta’s Open Llama Models And Policy Backlash Concerns

A third case involves Meta’s release of open-weight Llama models, which sparked significant debate in the policy community about open versus closed approaches to powerful AI. When Llama 2 and Llama 3 appeared, open source advocates praised Meta for enabling broad research and innovation, while some security experts warned that easily downloadable weights could be adapted for harmful uses without oversight. Reports from think tanks like RAND and CSET explored scenarios in which open models might assist in developing malware or designing harmful biological sequences, although empirical evidence of such misuse remains limited and context-dependent. Policymakers took notice, and some proposed rules that would treat open and closed frontier models differently in terms of reporting obligations or export controls. For Anthropic, which has chosen not to release Claude weights and instead focuses on tightly controlled APIs, this episode demonstrates both the perceived safety advantages and the strategic risks of its approach. If regulators decide that controlled access is safer, Anthropic’s position strengthens, yet if open source ecosystems gain political favor as tools for transparency and resilience, the company might face new forms of criticism.

Frequently Asked Questions

What are the main US threats to Anthropic right now?

The most significant US threats to Anthropic come from regulatory and policy channels rather than immediate bans. Agencies like the Federal Trade Commission could investigate claims about safety, data practices, or competition, especially given Anthropic’s close ties to Amazon and Google. Export controls administered by the Department of Commerce might limit access to advanced chips or restrict providing Claude’s capabilities to certain countries. Congress could create licensing or reporting requirements for training large models that increase compliance costs and slow releases. Together, these pressures create uncertainty about Anthropic’s growth, product roadmap, and international reach, a pattern that matches recent moves discussed in analysis of the White House AI regulation rules.

Could the US government actually shut down Anthropic’s AI research?

A full shutdown of Anthropic’s research operations is unlikely under current law and political conditions, but more targeted actions are plausible. Officials are more focused on shaping behavior through oversight, audits, and partnership conditions than on eliminating frontier labs entirely. National security tools like CFIUS or export controls could constrain specific investment arrangements or overseas collaborations if they raise red flags. Safety regulations might require pauses or redesigns of certain model training runs until evaluation criteria are met. For most users, the risk is more about slowed progress and restricted access than complete disappearance of Claude.

How does the White House AI Executive Order affect Anthropic?

The 2023 White House AI Executive Order directly affects Anthropic because it targets frontier model developers above certain compute thresholds. It requires labs training very large models to notify the government and share safety test results, which means Anthropic must build robust evaluation and reporting systems. The order also tasks NIST with developing technical standards that Anthropic is expected to follow if it wants to be seen as a responsible leader. Commerce and other agencies must consider export controls and critical infrastructure safeguards that touch Anthropic’s chip supply and cloud usage. Over time, these provisions will likely turn into more detailed guidance and possibly binding rules that influence every major product Anthropic ships.

Why is Anthropic treated as a national security concern?

Anthropic is treated as a national security concern because its Claude models could, in principle, be misused for high-impact threats if not properly aligned and controlled. Policymakers worry about capabilities like helping design sophisticated cyber attacks, aiding biological weapons research, or producing convincing disinformation at scale. Frontier labs also consume large amounts of advanced computing hardware that is central to US economic and military advantage. When a single company controls such powerful systems and tightens partnerships with major cloud providers, officials see both opportunity and risk. As a result, national security agencies watch Anthropic’s research and deployment choices with the same seriousness they apply to other dual-use technologies.

How do US export controls on chips impact Anthropic?

US export controls on advanced chips like the NVIDIA A100 and H100 affect Anthropic by limiting where and how it can train or serve its most capable models. If certain regions or entities cannot legally access high-end compute, Anthropic cannot deploy Claude at full power there without running afoul of Commerce Department rules. Potential future controls on providing remote model access for sensitive tasks could further restrict Anthropic’s international customer base. These constraints also influence long-term infrastructure planning, since the company must secure compliant capacity on US-aligned cloud platforms. In practice, export rules act as both a security measure and a strategic bottleneck for scaling Anthropic’s offerings globally.

Is Anthropic more vulnerable to US regulation than OpenAI or Google?

Anthropic’s vulnerability to US regulation differs from that of OpenAI or Google rather than simply being greater or smaller. As a younger company heavily dependent on external cloud partners, it may have less leverage in negotiations over compliance and infrastructure than a tech giant like Google. Its strong safety branding invites both trust and scrutiny, which can lead regulators to expect especially rigorous safeguards and transparency. OpenAI faces more attention around consumer products and its tight integration with Microsoft, while Google deals with long-standing antitrust and privacy investigations. Anthropic occupies a middle position where frontier safety and national security concerns are central, and this focus may intensify under future policy regimes.

What role do Amazon and Google play in Anthropic’s US policy risks?

Amazon and Google play a significant role in Anthropic’s exposure because they provide critical cloud infrastructure and hold major equity stakes. Their existing regulatory challenges, including antitrust suits and data protection questions, can spill over into how authorities view their AI partnerships. If regulators worry that Amazon or Google are using Anthropic to entrench dominance in cloud or AI services, they might scrutinize contract terms and market impacts. These partners also must comply with export controls and surveillance-related laws, shaping how Anthropic can architect its systems. In short, the same relationships that give Anthropic scale and resources also tie its fate more closely to big tech regulatory battles.

How could new US AI licensing rules affect access to Claude?

New AI licensing rules in the United States could require Anthropic to register certain training runs, obtain approvals, or demonstrate safety measures before deploying powerful Claude versions widely. That process might slow down how quickly the latest capabilities reach general users or specific high-risk sectors such as bio research or cyber operations. Licensing conditions might also mandate regional restrictions, logging requirements, or identity verification for sensitive use cases. For enterprises, this could increase onboarding steps and compliance checks when integrating Claude into workflows. For individual users, the impact would likely appear as more gradual feature rollouts and clearer explanations of why some actions are blocked.

What does NIST’s AI Risk Management Framework mean for Anthropic?

NIST’s AI Risk Management Framework provides detailed guidance on identifying, assessing, and mitigating risks across an AI system’s lifecycle, which closely aligns with Anthropic’s safety narrative. While the framework is formally voluntary, federal agencies and many enterprises use it to shape procurement and evaluation, effectively turning it into a soft standard. Anthropic must show that its development processes, red teaming, and monitoring align with the framework’s four core functions: Govern, Map, Measure, and Manage. Doing so supports government and enterprise contracts but requires ongoing investment in documentation and process engineering. Over time, parts of the framework may be codified into sector-specific rules, making early adoption a strategic hedge for Anthropic.

How does US public opinion about AI influence government threats?

US public opinion influences how aggressive or cautious lawmakers feel they can be when regulating companies like Anthropic. Surveys from Pew Research Center show that a significant share of Americans feel more concerned than excited about AI, and many support stronger government oversight. Politicians respond to these sentiments by promising to “rein in” AI risks and protect jobs, which can translate into tougher hearings and stricter proposals targeting frontier labs. At the same time, businesses and universities lobby for access to advanced tools, reminding officials that overly restrictive rules could hurt competitiveness. This push and pull shapes the tone and content of threats directed at Anthropic, especially during election seasons.

What is the difference between voluntary safety commitments and binding regulation for Anthropic?

Voluntary safety commitments, such as those Anthropic signed at the White House and international summits, signal good faith and allow experimentation with best practices without immediate legal consequences. These commitments often include pledges to conduct red teaming, publish system cards, and coordinate with authorities on major incidents. Binding regulation, in contrast, would attach legal penalties to failing specific standards or ignoring reporting requirements. For Anthropic, voluntary steps help build credibility but can evolve into expectations that regulators later formalize into rules. The transition from voluntary norms to binding obligations is a central dynamic in the current policy environment and a key source of uncertainty.

How might US elections change the trajectory of Anthropic and AI?

US elections can change the trajectory of Anthropic and AI by reshaping leadership at agencies that control enforcement priorities and interpret existing laws. An administration that emphasizes national security might push for stricter export controls and licensing, benefiting labs considered cooperative partners but constraining international growth. One focused on competition and labor issues could prioritize antitrust actions and worker protection rules that affect how quickly companies adopt automation tools. Administrations might also differ in how much they trust industry self-regulation versus detailed statutory frameworks for AI. For Anthropic, this means long-term planning must account for multiple scenarios, ranging from collaborative public-private governance to more adversarial regulatory stances.

Can users or organizations influence how the US treats Anthropic?

Users and organizations can influence US treatment of Anthropic indirectly by participating in public comment processes, industry coalitions, and civil society advocacy. When agencies propose new AI rules or guidance, they often invite feedback from companies, researchers, and the public through official comment portals. Universities, enterprises, and advocacy groups that depend on Claude can submit evidence about benefits, risks, and practical needs, which can shape final regulations. Professional associations and standards bodies, such as IEEE or sector-specific groups, also lobby for balanced approaches that protect safety while preserving innovation. While individual users have less direct impact, their experiences and concerns often feed into surveys and media coverage that policymakers read when evaluating AI labs.

Conclusion

US threats to Anthropic sit at the intersection of national security, competition, and a genuine effort to prevent harm from increasingly powerful AI systems. The same technical advances that make Claude useful for students, researchers, and businesses also create pressure for governments to understand and control how such models are trained, deployed, and accessed. Anthropic’s safety-centered identity gives it a strong voice in these debates, but it also means that lapses or misalignments could invite especially sharp responses from regulators who view the company as a test case.

For anyone relying on advanced AI, the practical takeaway is to recognize that policy and infrastructure shape which tools remain available and how they behave as much as model architecture does. Organizations should track developments from agencies like NIST, the FTC, and the Department of Commerce, and design their own AI adoption plans with flexibility for changing access rules or licensing conditions. Individuals should expect more transparency, clearer safety explanations, and sometimes slower rollouts as Anthropic and its peers adapt to evolving US requirements. The future of AI will not be decided solely in research labs or market competition. It will emerge from the ongoing dialogue between companies like Anthropic and the governments that increasingly treat their systems as strategic infrastructure, and those who follow that dialogue closely will be better prepared to protect their own AI strategies.

References

- Stanford Institute for Human-Centered Artificial Intelligence, “Artificial Intelligence Index Report 2024.” https://aiindex.stanford.edu/report/

- The White House, “Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence,” October 30, 2023. https://www.whitehouse.gov/briefing-room/presidential-actions/2023/10/30/executive-order-on-the-safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence/

- National Institute of Standards and Technology, “Artificial Intelligence Risk Management Framework (AI RMF 1.0),” 2023. https://www.nist.gov/itl/ai-risk-management-framework

- Federal Trade Commission, “Generative AI: A Consumer Protection and Competition Enforcement Challenge,” FTC Business Blog, 2023. https://www.ftc.gov/business-guidance/blog

- Center for Security and Emerging Technology, “AI and Compute: How Much Is Enough,” CSET Reports. https://cset.georgetown.edu

- Anthropic, “Constitutional AI: Harmlessness from AI Feedback,” Anthropic research paper and blog. https://www.anthropic.com

- Amazon, “Amazon and Anthropic announce strategic collaboration,” company press release, 2023. https://press.aboutamazon.com

- Alphabet Inc., quarterly and annual reports referencing AI investments and risks, SEC EDGAR database. https://www.sec.gov/edgar/search/

- UK Government, “AI Safety Summit 2023: Outcomes and Proceedings.” https://www.gov.uk/government/topical-events/ai-safety-summit-2023

- Pew Research Center, “Public Awareness of AI and Views on Its Impact on Daily Life,” 2023. https://www.pewresearch.org

- RAND Corporation, “Risks of Large-Scale Misuse of Generative AI,” research reports. https://www.rand.org