AI gets its information from three distinct layers: training data, retrieval systems, and live tool access like APIs and MCPs.

Each knowledge layer has its own pros and cons. If you’ve ever wondered why an AI confidently told you something wrong, why one tool seems to know about last week’s news and another doesn’t, or why your competitor’s product gets mentioned a lot while yours doesn’t, the answer almost always traces back to which layer answered your question.

This article is a plain-English explanation of where AI knowledge actually comes from, and why that matters for how much you should trust any given response.

Before an AI model ever answers a single question, it goes through a phase called training.

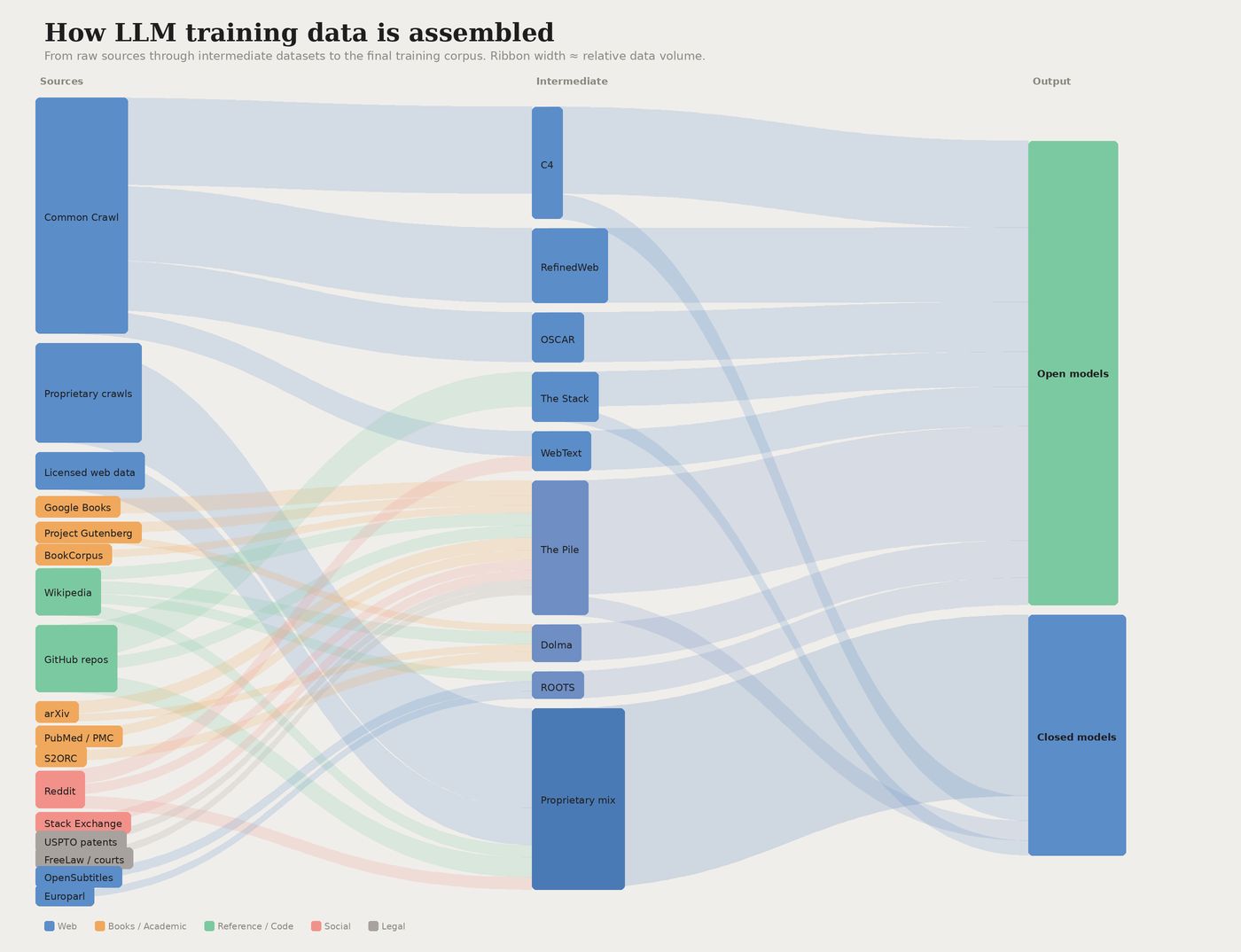

During training, the model ingests billions of text, image, and code examples (public web crawls, books, Wikipedia, code repositories, licensed databases) and learns to predict patterns across all of it. By the time training ends, the model has effectively memorized a statistical snapshot of human knowledge up to that point.

A visualisation of common data sources used in training large language models.

This is how AI models develop their “understanding” of the world. The occurrence of different entities in the training data (like your brand name, or your products: think “Patagonia” or “Nanopuff Hoody”), and the words they commonly co-occur with (like “environmentally-friendly” or “high quality”), shape the model’s understanding of your brand.

As Gianluca Fiorelli explains:

LLMs learn the relationships between your brand and concepts like ‘health club’ or ‘noise-cancellation.’ These semantic associations directly influence whether and how you’re mentioned.
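To make the idea of co-occurrence concrete, here is a minimal sketch that counts which words appear near a brand name in a handful of sentences. It is only an illustration: real models learn these associations implicitly during training rather than by explicit counting, and the sentences, the `cooccurrence_counts` helper, and the five-token window are all assumptions made up for this example.

```python
from collections import Counter
import re


def cooccurrence_counts(documents, brand, window=5):
    """Count words that appear within `window` tokens of each brand mention."""
    counts = Counter()
    brand = brand.lower()
    for doc in documents:
        tokens = re.findall(r"[a-z0-9'-]+", doc.lower())
        for i, token in enumerate(tokens):
            if token == brand:
                start = max(0, i - window)
                end = i + window + 1
                # Neighbours on both sides, excluding the brand token itself.
                counts.update(tokens[start:i] + tokens[i + 1:end])
    return counts


# Invented sentences standing in for a slice of training data.
corpus = [
    "Patagonia makes durable jackets from recycled materials.",
    "Reviewers praise Patagonia for durable, recycled fabrics.",
    "A budget jacket brand with mixed reviews.",
]

counts = cooccurrence_counts(corpus, "Patagonia")
print(counts.most_common(5))
```

In this toy corpus, “durable” and “recycled” each show up next to the brand twice, which is the kind of repeated pairing that shapes an association.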

The scale involved in training is quite hard to picture. Training data for major models is measured in trillions of tokens (roughly, word-chunks). The costs give you a sense of what that requires: training GPT-4 cost an estimated $78 million; Google’s Gemini Ultra cost around $191 million.

The global market for AI training datasets was $3.2 billion in 2025, and it’s projected to hit $16.3 billion by 2033, a 22.6% annual growth rate that reflects how central data has become to the whole business.
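As a quick sanity check on those market figures, the sketch below compounds $3.2 billion at 22.6% per year over the eight years from 2025 to 2033, and also works backwards from the two endpoints to the implied growth rate. The function names are illustrative, and the calculation assumes simple annual compounding.

```python
def project(start_value, annual_rate, years):
    """Compound a starting value at a fixed annual growth rate."""
    return start_value * (1 + annual_rate) ** years


def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two endpoints."""
    return (end_value / start_value) ** (1 / years) - 1


years = 2033 - 2025  # eight compounding periods

projected_2033 = project(3.2, 0.226, years)  # in billions of dollars
rate = implied_cagr(3.2, 16.3, years)

print(f"Projected 2033 market: ${projected_2033:.1f} billion")
print(f"Implied annual growth: {rate:.1%}")
```

Both directions agree with the quoted numbers to within rounding, so the three figures are consistent with each other.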

Here’s the critical thing to understand: once training ends, the model’s knowledge is frozen. It can’t learn from new events. It has no idea what happened yesterday, or last month, or after whatever date its training data was cut off.

Some providers periodically fine-tune their models on newer data, but that’s still a discrete process, more like issuing a software update than continuously reading the news.

The other major failure mode is hallucination. When a model doesn’t have reliable training data to draw on, it fills the gap with something plausible-sounding: a fabricated citation, a made-up statistic, a confident non-answer (like Google’s AI Overview citing an April Fool’s satire article as a factual source).

The model had no way to know the article was a joke; it just looked authoritative enough to fit the pattern.

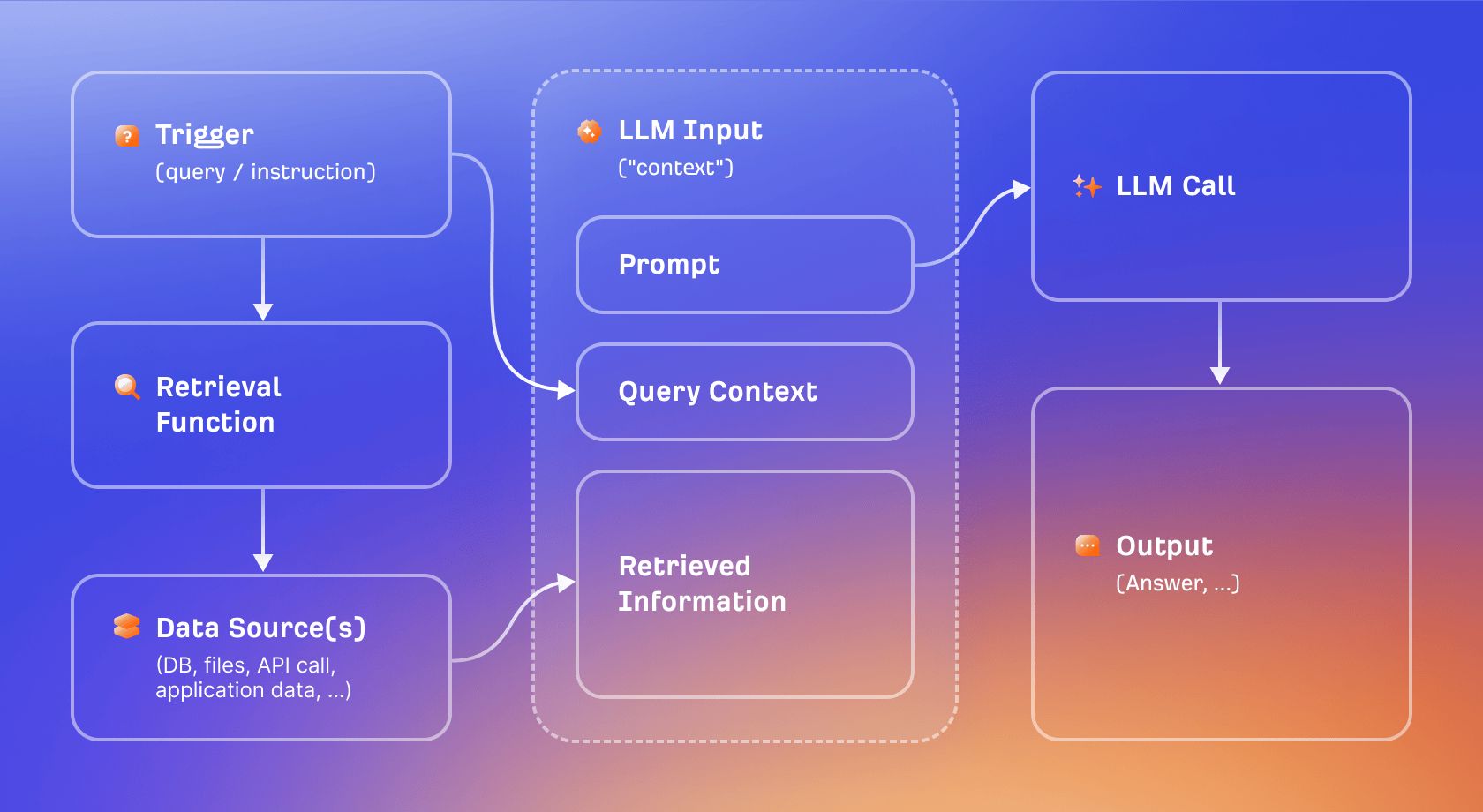

Retrieval-Augmented Generation (RAG) is the main technique used to work around the knowledge cutoff problem.

Instead of relying purely on what the model learned during training, RAG lets the model pull in relevant documents at the moment a question is asked, then use those documents as context when generating a response.

Think of it as the difference between a closed-book exam and an open-book one. A training-only model has to answer from memory. A RAG-enabled model can look things up first, then answer. The result is more current and, in principle, more verifiable, because the answer is grounded in actual retrieved content rather than statistical pattern-matching.

Retrieval augmented generation visualised.
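The retrieve-then-answer flow can be sketched in a few lines. This is a toy version under stated assumptions: the retriever ranks documents by simple word overlap (production systems typically use a search index or embeddings), the documents are invented, and `build_prompt` only assembles the text that would be sent to a model; no model is actually called.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by word overlap with the question (toy retriever)."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc["text"])), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop documents with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question, retrieved):
    """Put the retrieved text in front of the question as context."""
    sources = "\n".join(f"[{doc['id']}] {doc['text']}" for doc in retrieved)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )


# Invented documents standing in for a search index.
documents = [
    {"id": "doc-1", "text": "The product launch was announced on Monday with new pricing."},
    {"id": "doc-2", "text": "Bread recipes need flour, water, and yeast."},
    {"id": "doc-3", "text": "New pricing starts next month according to the announcement."},
]

question = "What is the new pricing?"
retrieved = retrieve(question, documents)
prompt = build_prompt(question, retrieved)
print(prompt)
```

The unrelated bread document never reaches the prompt, which is the whole point: the model only sees what the retriever chose, for better or worse.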

“Grounding” is the broader term for this anchoring. When an AI answer is grounded, it’s tethered to specific retrieved sources, which dramatically reduces the hallucination risk.

As Britney Muller explains:

Grounding comes from ground truth, rooted in statistics and originally cartography, where it literally meant going outside to verify that your map matched reality.

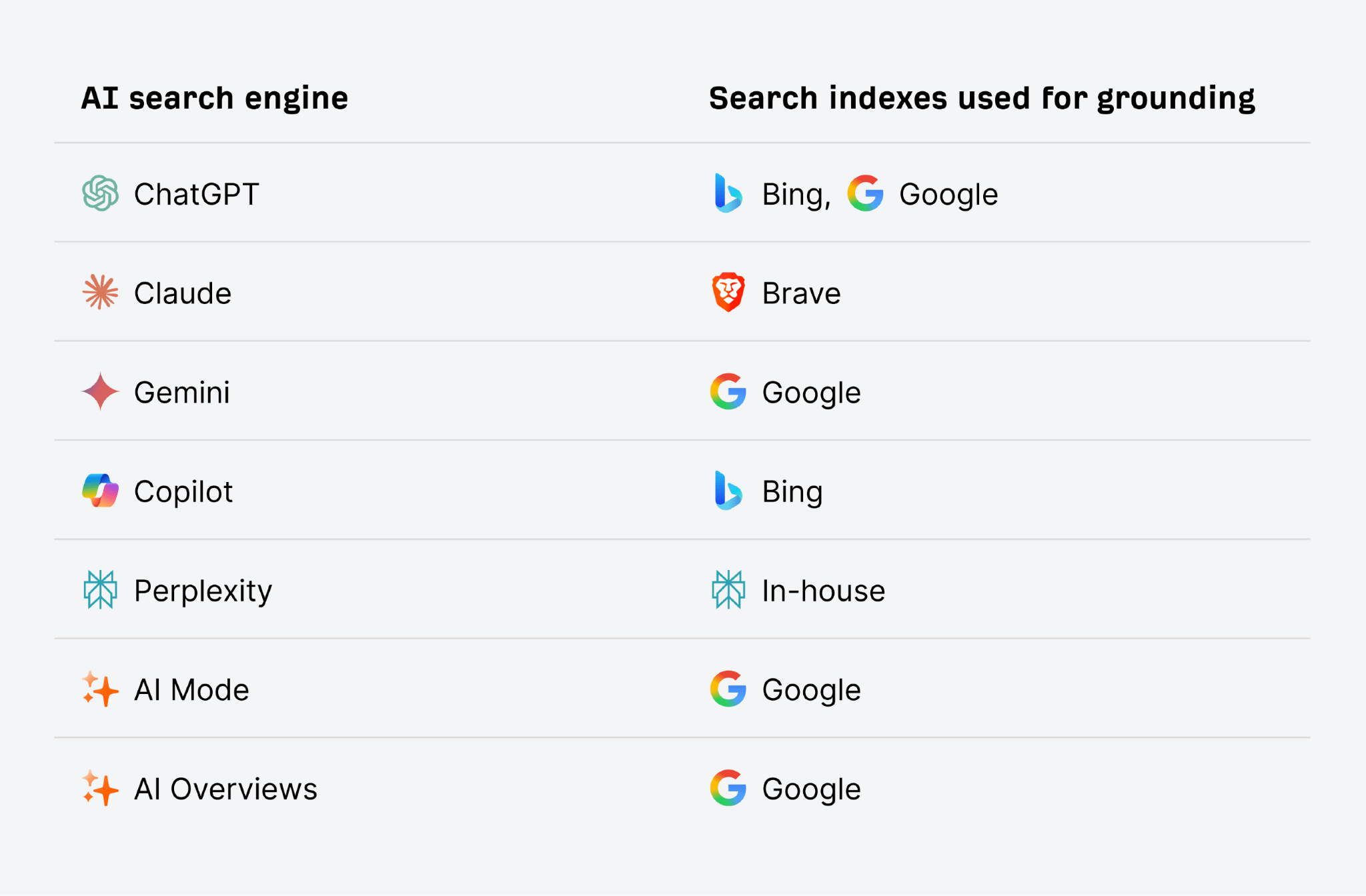

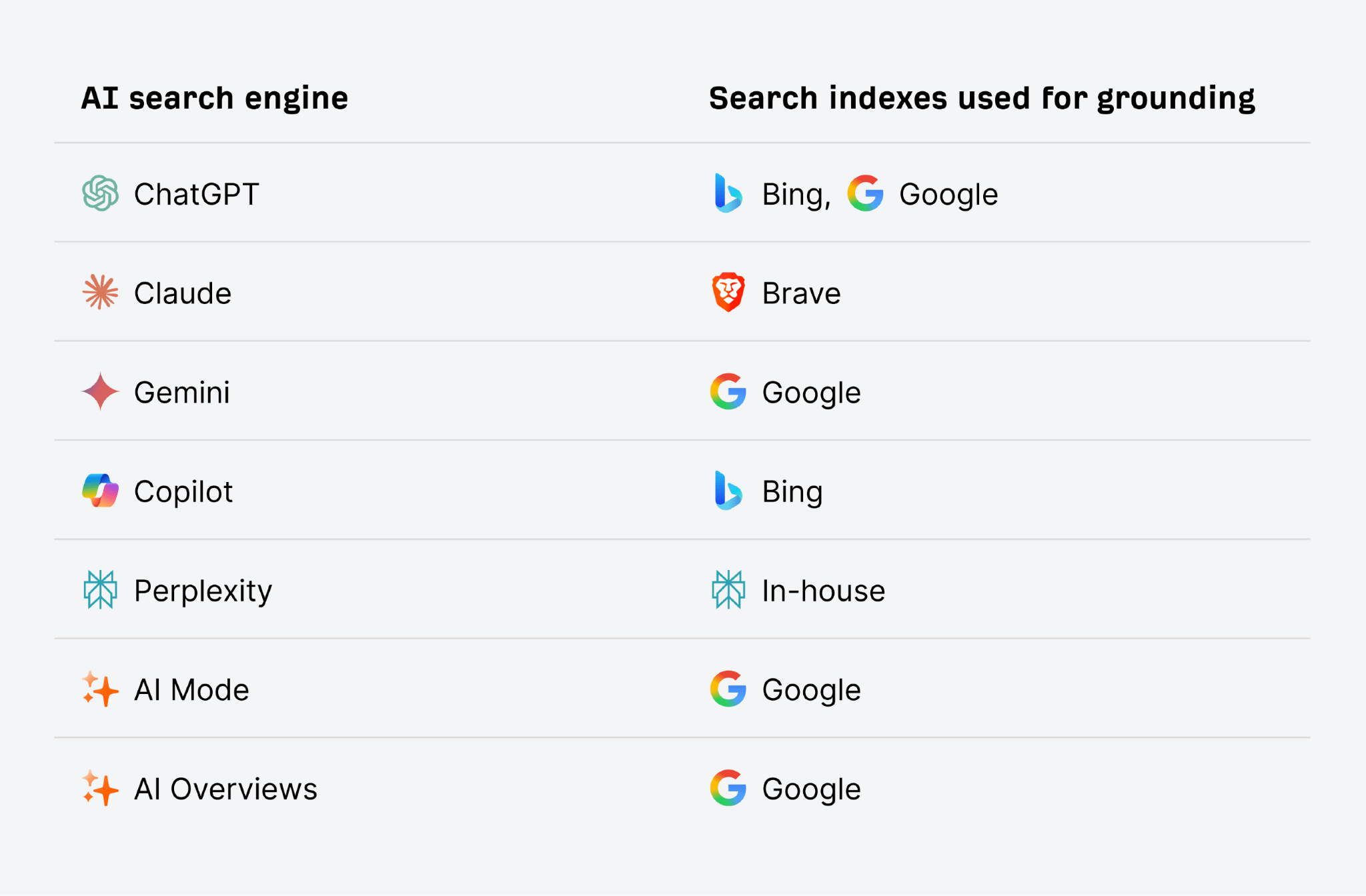

AI search engines like ChatGPT and Gemini use traditional search indexes like Google and Bing for this grounding process. That’s why good SEO, and ranking highly in traditional search, can also improve your AI visibility. The higher you appear in the search index for the term the AI searches for, the higher your chance of being retrieved and cited in the answer.

Not every AI product uses RAG. A base ChatGPT session with browsing disabled, for example, is purely training-based: it has no access to current information and no way to verify its answers against live sources.

The tradeoff is speed and simplicity. Training-only responses are fast, but they’re permanently dated. RAG adds latency and introduces a new failure mode (retrieval errors: pulling in the wrong source, or a poor-quality one), but it makes recency possible.

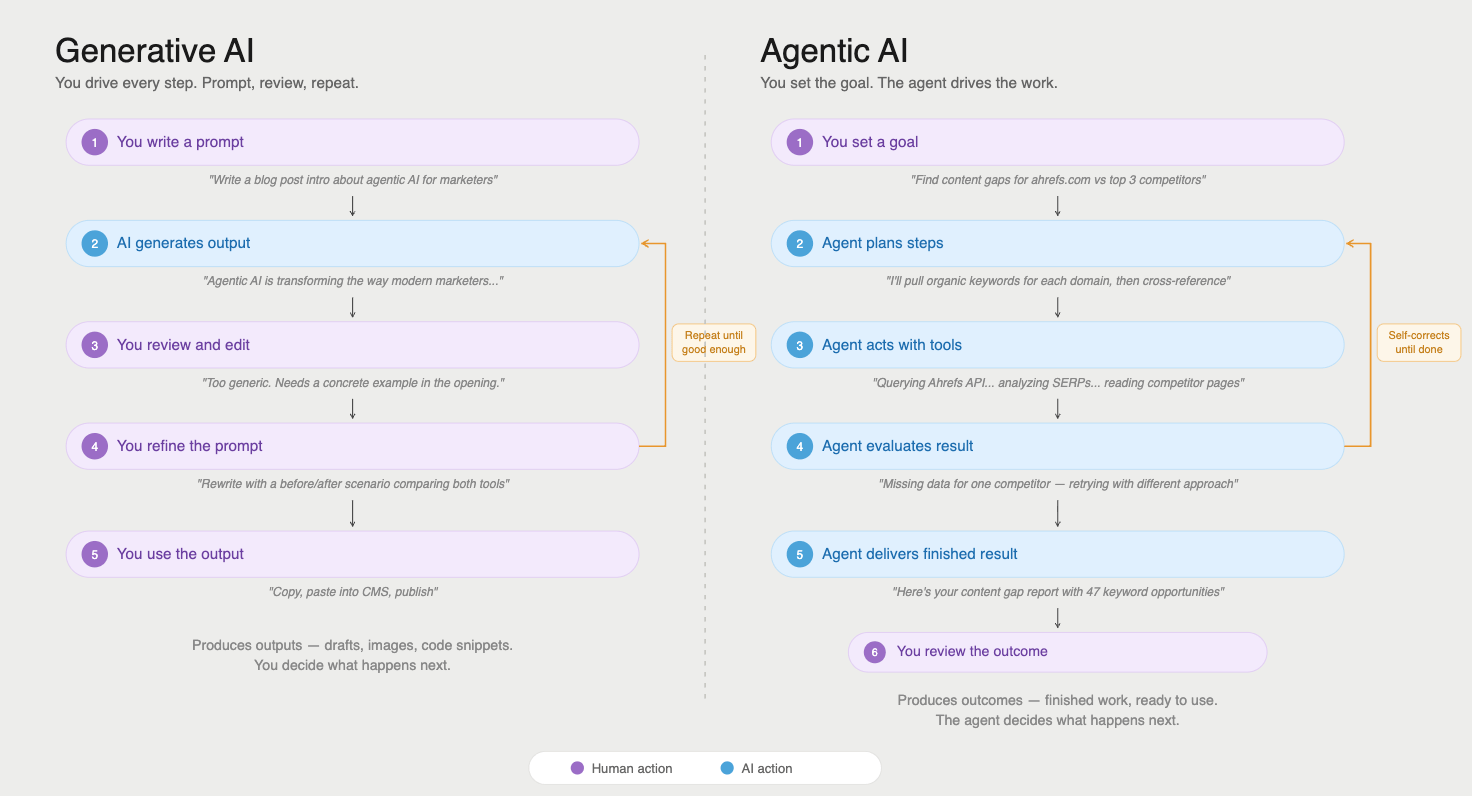

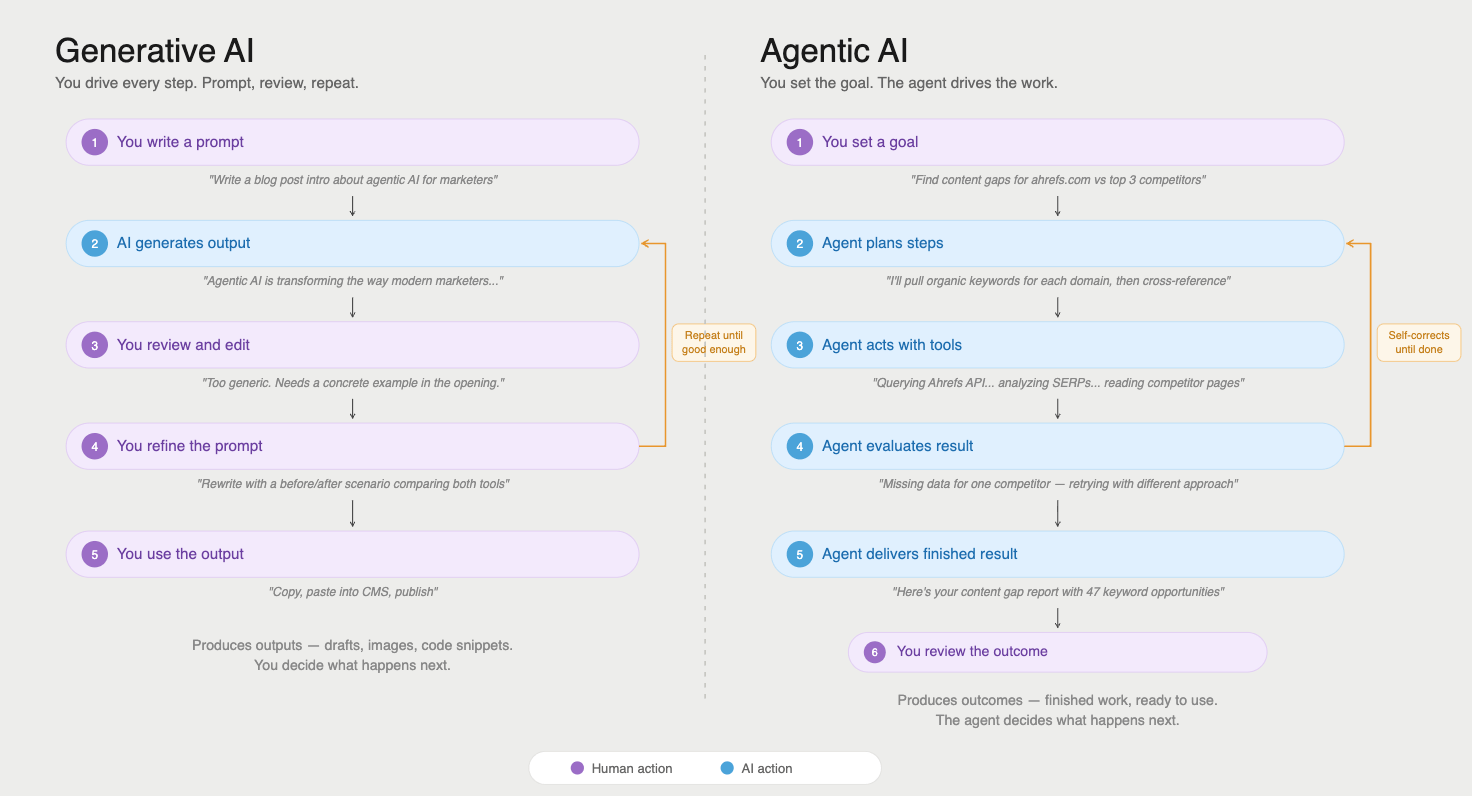

RAG is one way to get fresh information into an AI response. But modern AI systems are increasingly going further, giving models the ability to call external tools mid-conversation. This is the territory of AI agents.

An AI agent doesn’t just retrieve documents; it can query APIs, run searches, execute code, and interact with live data sources as part of working through a task.
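A rough sketch of what “calling a tool” means in practice: the model emits a structured request naming a tool and its arguments, and the surrounding application looks that tool up and runs it. Everything here is an assumption for illustration. The two tools are trivial stand-ins, and the `planned_calls` list is hard-coded where a real agent would receive those requests from the model.

```python
def get_word_count(text: str) -> int:
    """Stand-in tool: count whitespace-separated words."""
    return len(text.split())


def add_numbers(a: float, b: float) -> float:
    """Stand-in tool: add two numbers."""
    return a + b


# Registry of tools the agent is allowed to call (hypothetical names).
TOOLS = {"get_word_count": get_word_count, "add_numbers": add_numbers}


def run_tool_call(call: dict) -> dict:
    """Execute one tool request and report success or failure."""
    name = call.get("name")
    tool = TOOLS.get(name)
    if tool is None:
        return {"name": name, "ok": False, "error": "unknown tool"}
    try:
        result = tool(**call.get("arguments", {}))
    except TypeError as exc:  # wrong or missing arguments
        return {"name": name, "ok": False, "error": str(exc)}
    return {"name": name, "ok": True, "result": result}


# Hard-coded stand-in for the tool requests a model would produce.
planned_calls = [
    {"name": "get_word_count", "arguments": {"text": "live data beats stale data"}},
    {"name": "add_numbers", "arguments": {"a": 2, "b": 3}},
    {"name": "delete_everything", "arguments": {}},
]

results = [run_tool_call(call) for call in planned_calls]
for result in results:
    print(result)
```

Keeping an explicit registry means a request for a tool that was never offered fails cleanly instead of running arbitrary code.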

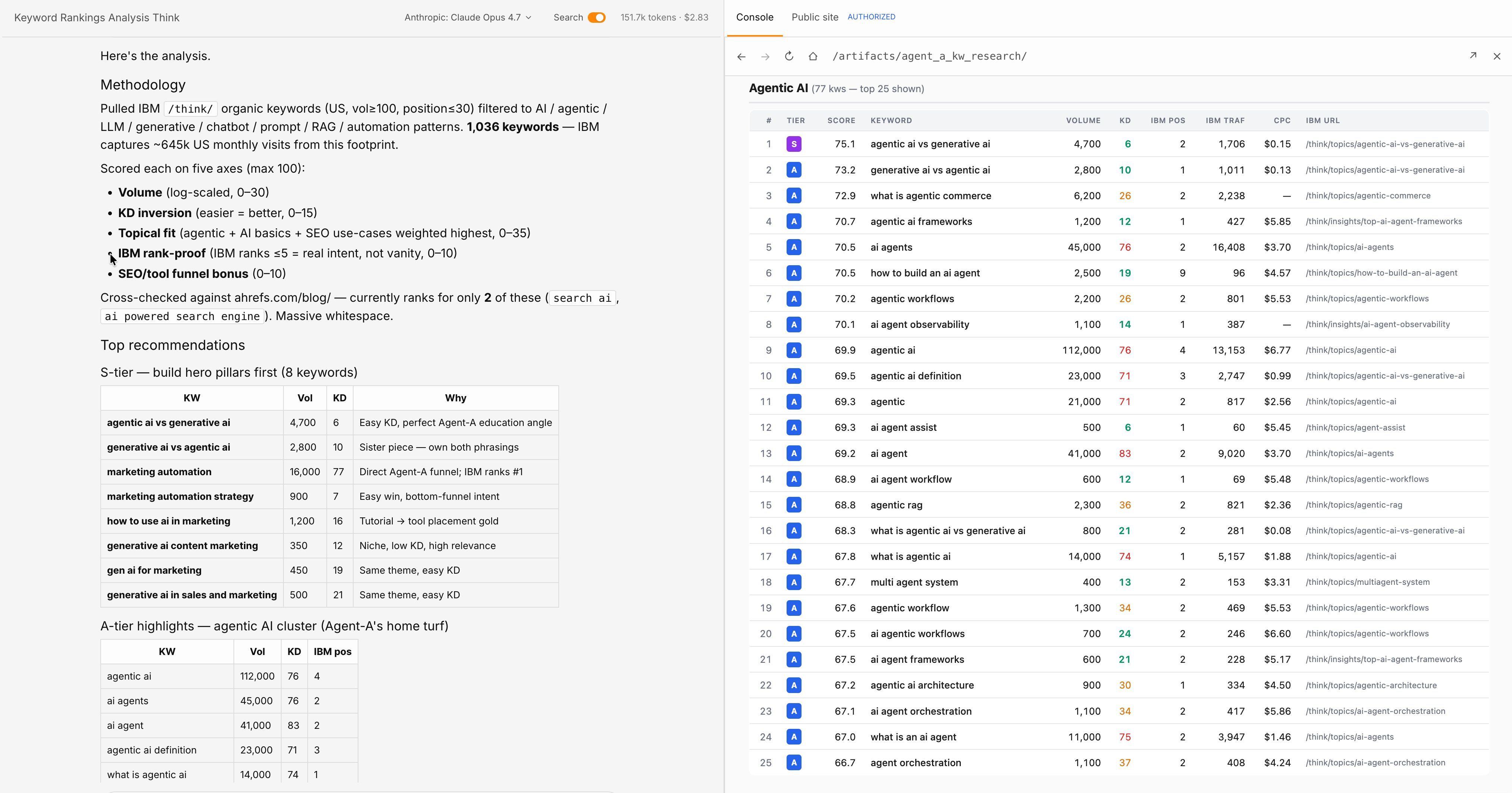

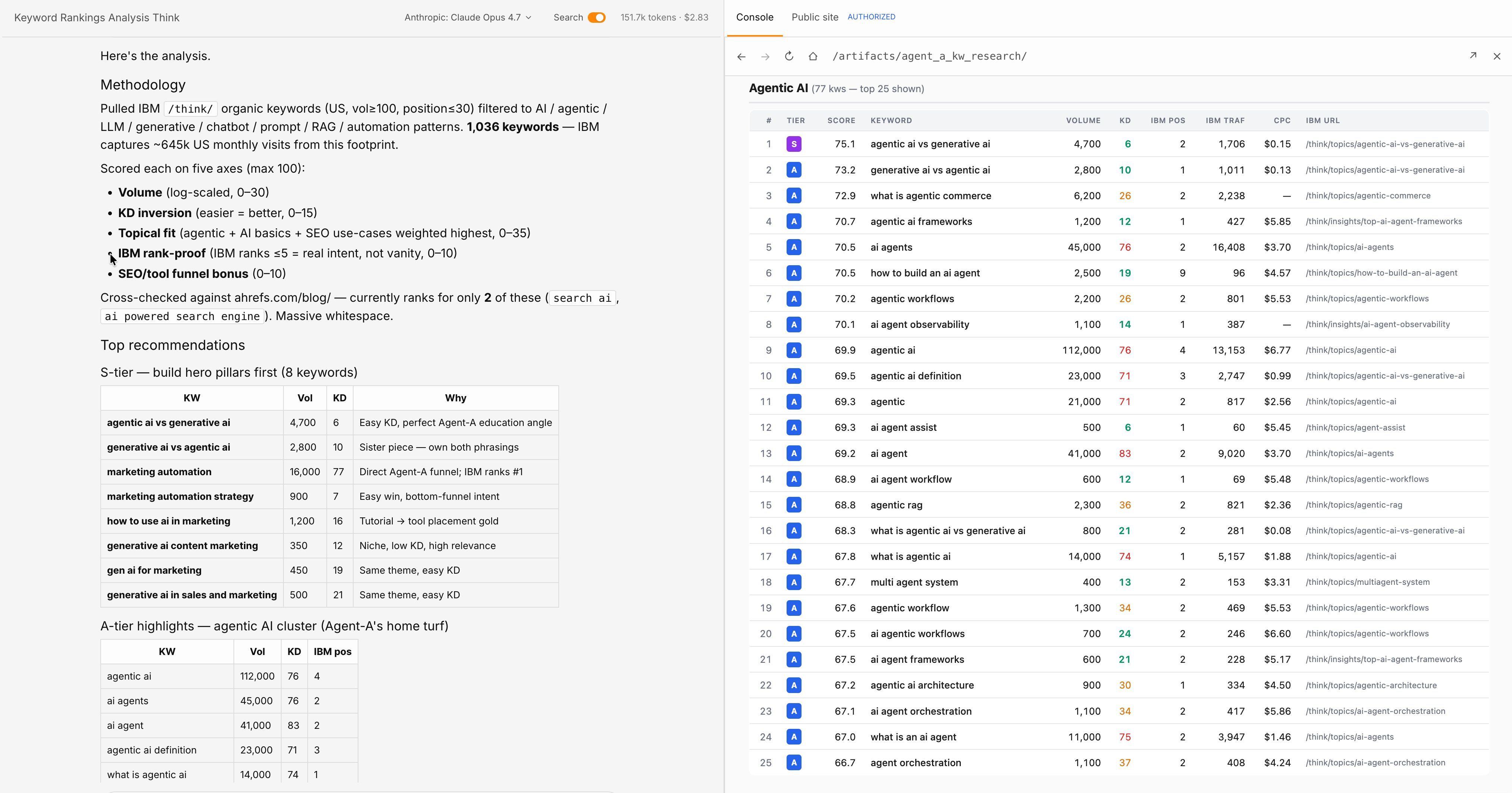

A comparison of using generative AI versus agentic AI.

The emerging infrastructure for this is called Model Context Protocol (MCP), a standard that lets AI models connect to external data sources in a structured way.
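For a feel of what “structured” means here, MCP messages are JSON-RPC 2.0 objects, and a tool invocation is sent with the `tools/call` method. The sketch below builds one such request. The tool name `keyword_metrics` and its argument are hypothetical placeholders, not the names any real MCP server exposes, and the transport (how the message reaches the server) is left out entirely.

```python
import json


def make_tool_call_request(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = make_tool_call_request(
    1,
    "keyword_metrics",
    {"keyword": "project management software"},
)

payload = json.dumps(request, indent=2)
print(payload)
```

Because every server speaks the same message shape, a client can discover and call tools from many providers without custom glue code for each one.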

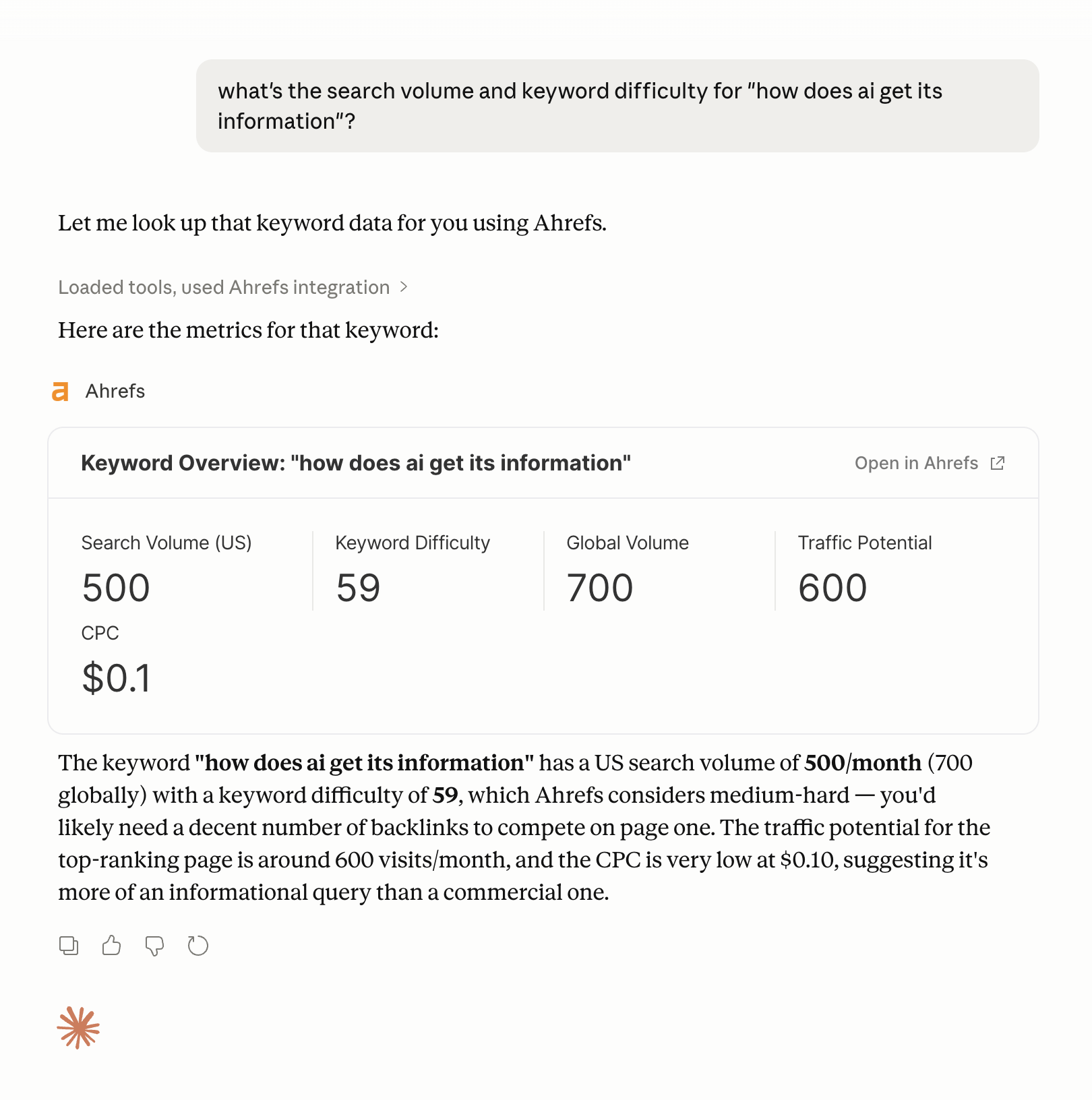

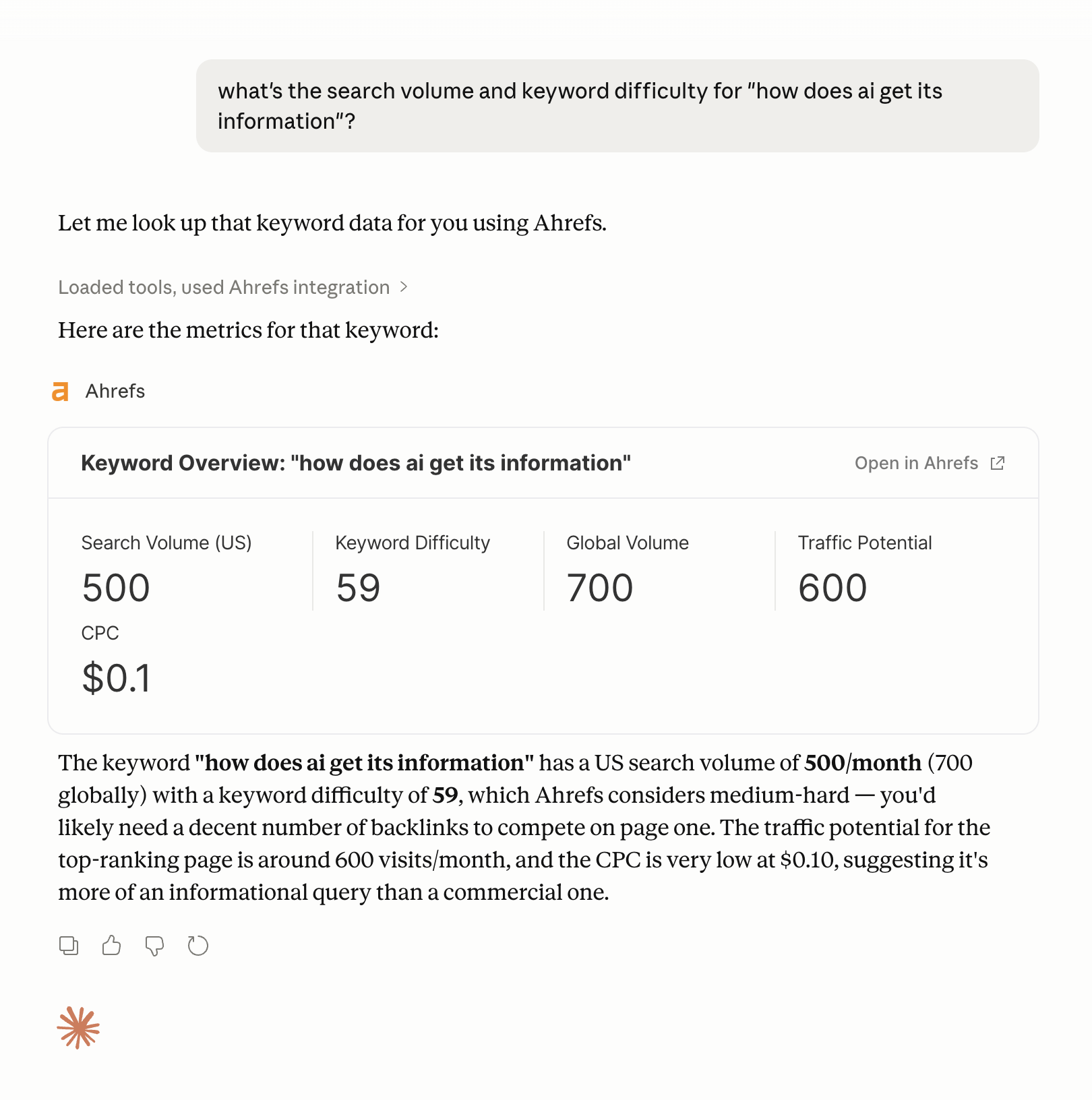

A concrete example: Ahrefs has an MCP integration that lets AI agents query Ahrefs data directly during a task, pulling keyword metrics, backlink data, or competitive insights without the user leaving their workflow.

An example of getting keyword data using the Ahrefs MCP in Claude.

Try Agent A now

Ahrefs’ Agent A takes this further. It’s a marketing AI with direct, unlimited access to Ahrefs’ full internal dataset: keyword data, site metrics, competitive intelligence, the works.

Rather than an AI that has to approximate SEO insights from training data (which goes stale) or retrieve them from public sources (which are incomplete), Agent A works from the actual data.

For marketing and SEO tasks specifically, that’s a big difference: Agent A can handle many SEO and marketing workflows without any hand-holding.

The broader principle is that tool-augmented AI is only as reliable as the tools it calls. If the API returns bad data, the AI produces a bad answer, confidently. The intelligence of the model doesn’t save you from garbage inputs. What it does do is extend the model’s reach far beyond what any training dataset could cover.

When you understand where AI gets its information from, you understand where your brand needs to show up to stand the best chance of being cited:

- Off-site mentions. If you want AI to accurately represent your brand, the starting point isn’t your website; it’s off-site mentions. Models learn about brands from the sources they trained on: press coverage, third-party reviews, forum discussions, Wikipedia entries, and citations in authoritative publications. A brand that exists only on its own domain is essentially invisible to the model’s training data.

- Query fan-out. Beyond brand recognition, you need to think about query fan-out, the adjacent questions AI systems generate around a core topic. A brand ranking for “project management software” should also be targeting content like “how to run a sprint review” or “agile vs. waterfall,” because those are the questions an AI system will surface when a user follows up on the initial query. Creating content that covers the full semantic neighborhood around your core topics increases the chances you appear in that expansion.

- AI accessibility. Technical accessibility still matters, too. Clean HTML, fast load times, and a well-configured robots.txt file affect whether AI crawlers can read your content at all. llms.txt is a proposed standard for helping LLMs navigate your site’s structure, but as of 2026 no major LLM provider has confirmed they respect it (so don’t waste your time).
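On the robots.txt point, a quick way to check what your rules actually allow is Python’s standard-library `urllib.robotparser`. The sketch below parses an example file from a string, so it needs no network access. The rules and the example.com URLs are made up for illustration; `GPTBot` is used as an example of an AI crawler user agent, and you would substitute whichever crawlers you care about.

```python
from urllib.robotparser import RobotFileParser

# Example rules (invented): block one AI crawler from /private/, allow everything else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""


def can_crawl(robots_txt, user_agent, url):
    """Return True if the given user agent may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


blog_allowed = can_crawl(ROBOTS_TXT, "GPTBot", "https://example.com/blog/post")
private_allowed = can_crawl(ROBOTS_TXT, "GPTBot", "https://example.com/private/report")
other_bot_allowed = can_crawl(ROBOTS_TXT, "SomeOtherBot", "https://example.com/private/report")

print("GPTBot, blog post:", blog_allowed)
print("GPTBot, private page:", private_allowed)
print("SomeOtherBot, private page:", other_bot_allowed)
```

Running a check like this against your real file is a cheap way to catch a rule that accidentally blocks the crawlers you want to reach.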

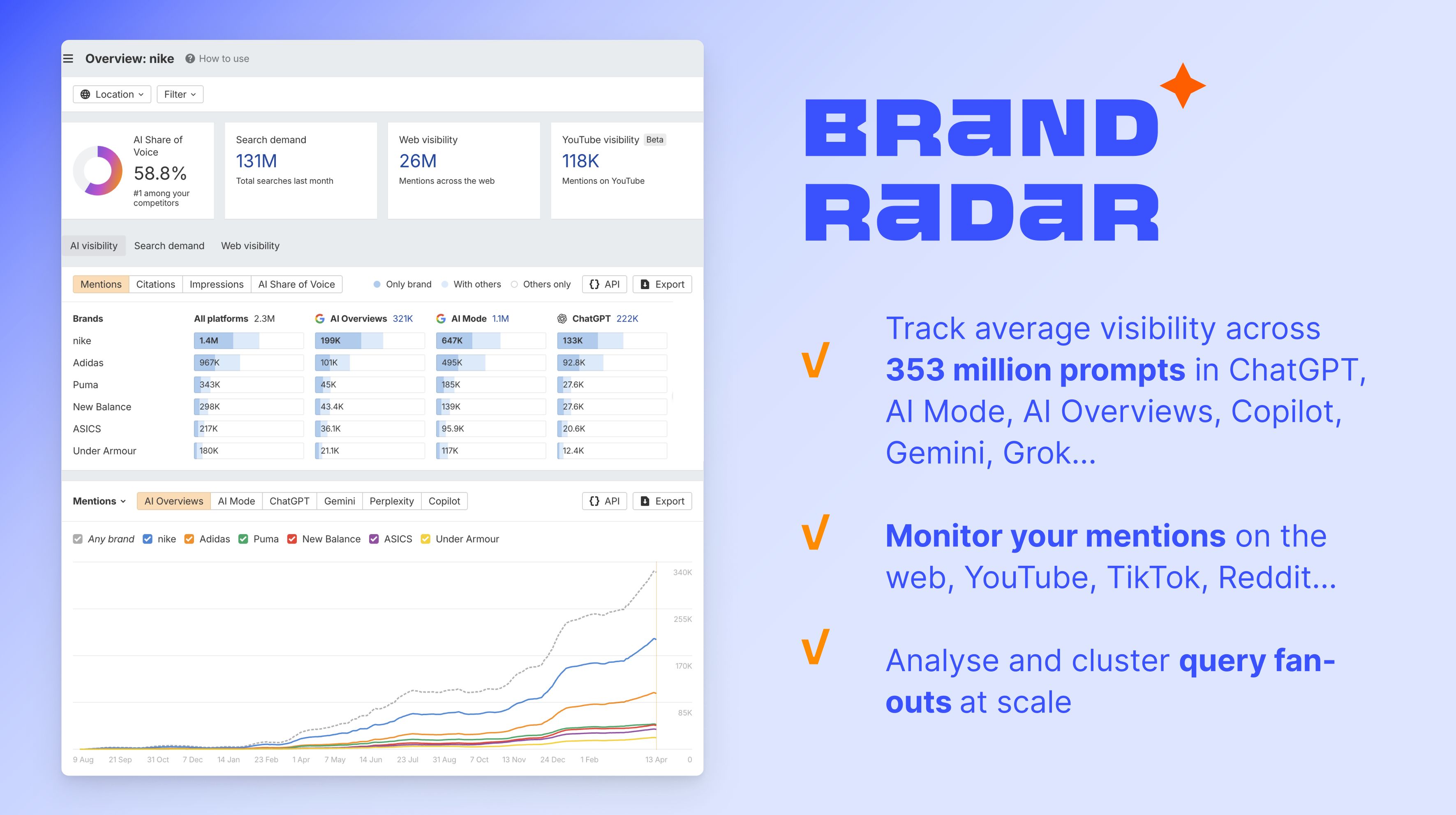

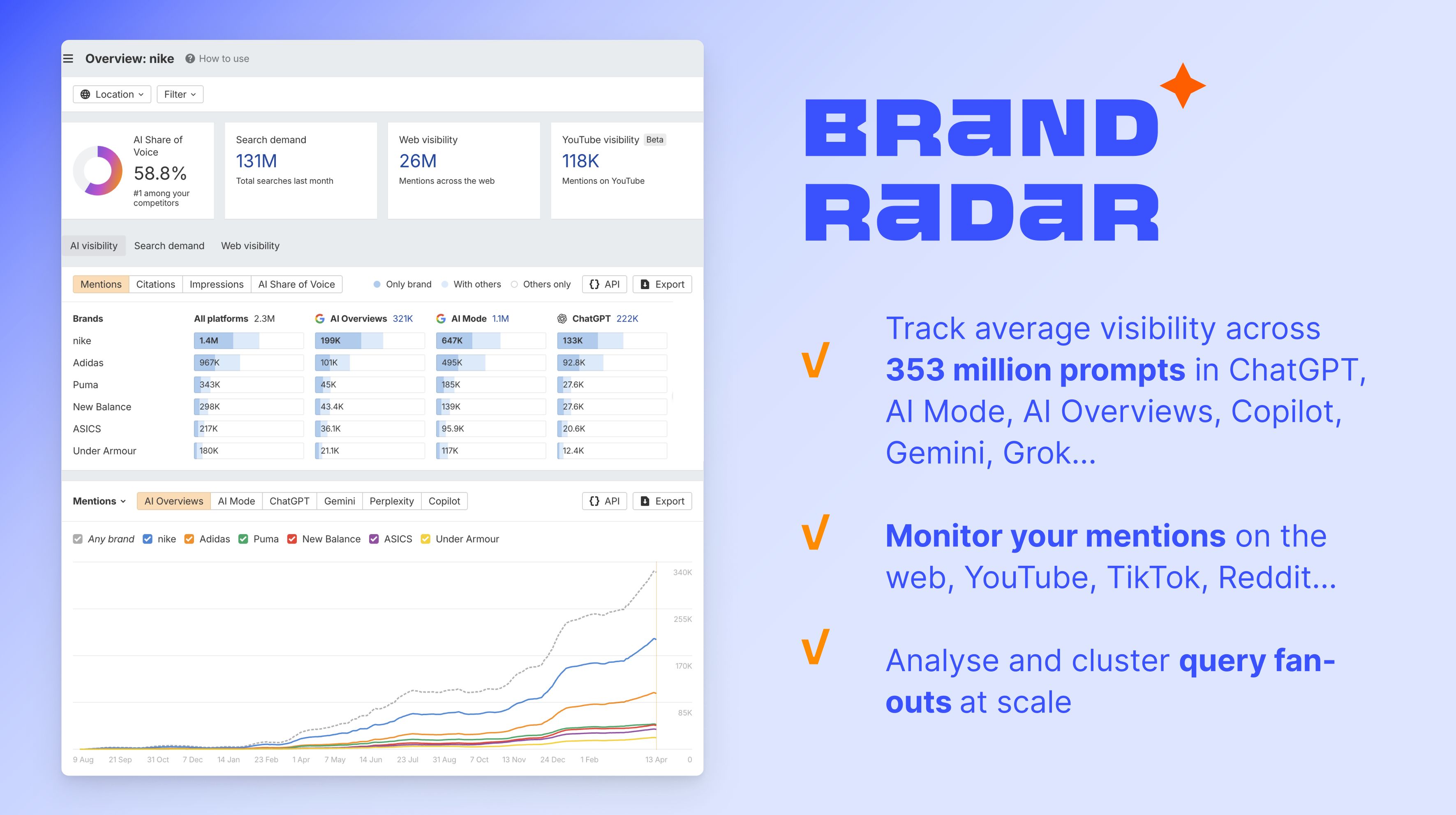

Start tracking AI visibility with Brand Radar

To measure how this is working in practice, Ahrefs’ Brand Radar tracks AI share of voice across ChatGPT, Gemini, Perplexity, AI Overviews, the AI model Grok, and many more, showing how often your brand is mentioned in AI-generated responses relative to competitors. Read this article to learn how it works.
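To show what a share-of-voice number can mean, here is one simple way to compute it: the fraction of AI responses that mention each brand. This is an assumed definition for illustration and not necessarily how Brand Radar calculates its metric; the brand names and responses are invented.

```python
import re


def share_of_voice(responses, brands):
    """Fraction of responses that mention each brand (case-insensitive, whole word)."""
    total = len(responses)
    shares = {}
    for brand in brands:
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        hits = sum(1 for response in responses if pattern.search(response))
        shares[brand] = hits / total if total else 0.0
    return shares


# Invented AI responses to the same set of prompts.
responses = [
    "BrandA and BrandB are both popular choices.",
    "Many teams choose BrandA for reporting.",
    "BrandA is often recommended for small teams.",
    "There are several tools worth considering.",
]

shares = share_of_voice(responses, ["BrandA", "BrandB"])
for brand, share in shares.items():
    print(f"{brand}: {share:.0%}")
```

Counting each response once per brand keeps one long, repetitive answer from inflating the score.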

Ultimate ideas

AI knowledge comes from three layers: frozen training data, retrieved live documents, and connected external tools, like APIs and MCPs. Each has a different accuracy profile, a different relationship with recency, and a different way of failing.

Training data is the foundation: vast, expensive, and static. RAG and grounding add currency at the cost of retrieval reliability. Tool integrations like Ahrefs’ MCP and purpose-built agents like Agent A extend that further, giving AI access to live, authoritative data at the moment it’s needed.

For a deeper look at how AI search engines stitch these layers together to generate answers, check out our guide to how AI search engines work.