Introduction:

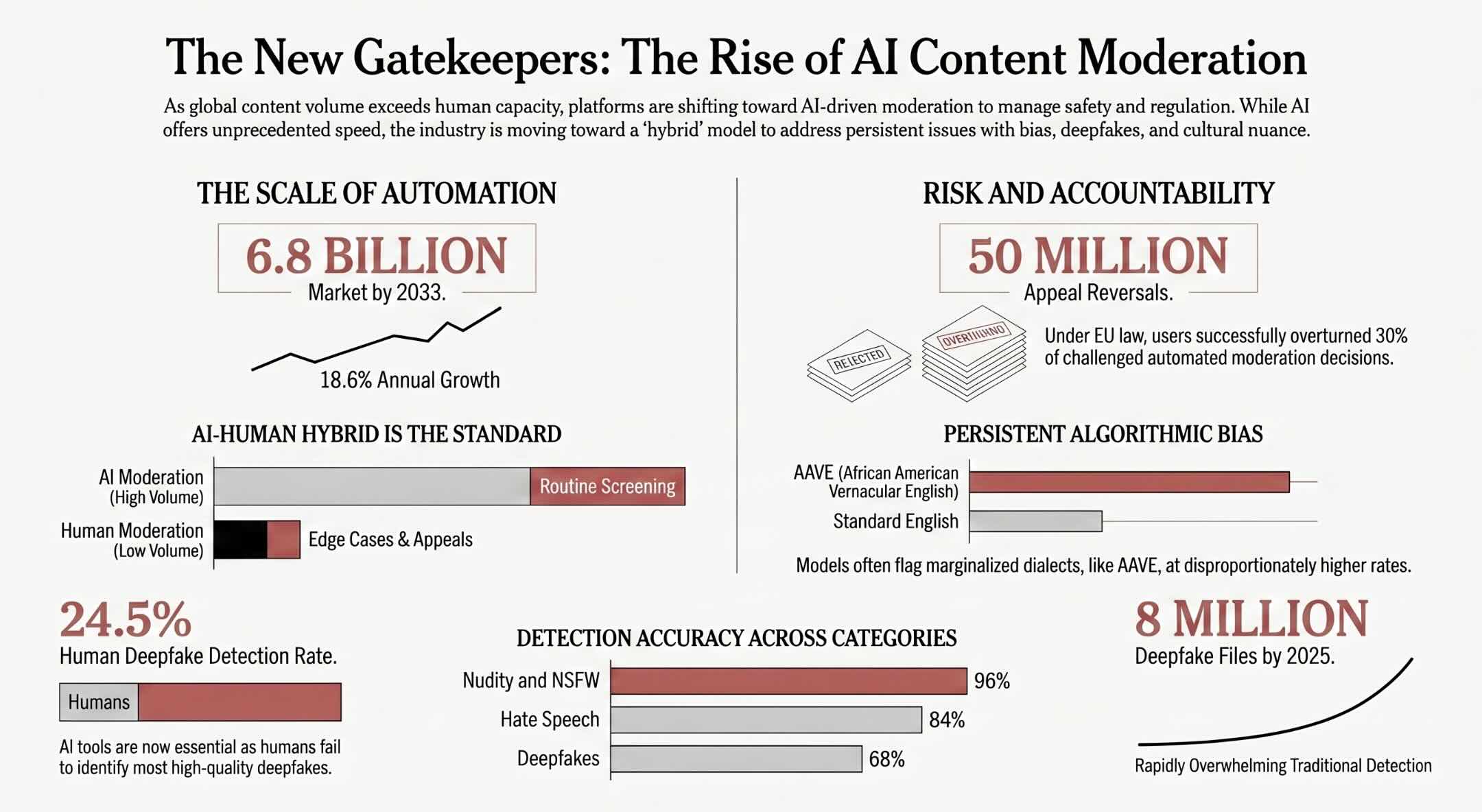

The volume of user-generated content across global platforms has reached a point where human review alone cannot keep pace with the number of posts, images, and videos uploaded every second. According to Verified Market Reports, the AI content moderation market was valued at USD 1.5 billion in 2024 and is projected to reach USD 6.8 billion by 2033, expanding at a compound annual growth rate of 18.6 percent. Platforms ranging from social networks to e-commerce marketplaces now depend on machine learning models to identify hate speech, explicit imagery, scams, and coordinated disinformation campaigns before they reach broad audiences. The shift toward automated moderation is not merely about speed. It reflects a fundamental change in how digital platforms manage trust, safety, and regulatory compliance across dozens of languages and cultural contexts. Content moderation powered by artificial intelligence has become a strategic function that touches brand reputation, legal exposure, and the lived experience of billions of users worldwide. This article explores the technologies, challenges, regulations, and evolving practices that define AI content moderation in 2026 and beyond.

Quick Answers on AI-Powered Content Moderation

What is AI content moderation and how does it work?

AI content moderation uses machine learning models, natural language processing, and computer vision to automatically detect, flag, and remove harmful or policy-violating content from digital platforms at scale.

Why do platforms need AI for content moderation?

Platforms receive billions of posts, images, and videos every day, which makes human-only review impossible. AI enables real-time scanning, consistent enforcement, and multilingual coverage that manual teams cannot achieve alone.

What are the biggest risks of automated content moderation?

Key risks include algorithmic bias, over-enforcement that silences legitimate speech, difficulty understanding cultural context and sarcasm, and vulnerability to adversarial attacks designed to evade detection systems.

Key Takeaways

- Bias, cultural insensitivity, and deepfake evasion remain persistent challenges that demand continuous model retraining, diverse datasets, and robust human oversight.

- AI content moderation combines natural language processing, computer vision, and multimodal analysis to flag harmful content across text, images, video, and audio at speeds no human team can match.

- Hybrid AI-human moderation models are emerging as the industry standard. AI handles high-volume routine screening, and human reviewers manage nuanced edge cases and appeals.

- Regulatory frameworks like the EU Digital Services Act and the UK Online Safety Act are compelling platforms to publish transparency reports, conduct risk assessments, and provide user appeal mechanisms tied to automated moderation decisions.

What Is AI Content Moderation?

AI content moderation is the use of machine learning algorithms, natural language processing, and computer vision to automatically identify, flag, and enforce policy against harmful, illegal, or inappropriate content on digital platforms. It enables real-time, scalable analysis of text, images, video, and audio across multiple languages and content types.

Why Content Moderation Demands Automation

The sheer volume of content uploaded to digital platforms every minute has made purely manual moderation an operational impossibility that no organization can sustain at global scale. Facebook alone processes billions of posts, comments, and shared media items daily. Platforms like YouTube, TikTok, and X face similar torrents of user-generated material across every language and format. Human review teams, even when staffed in the thousands, can evaluate only a fraction of this content within the time windows that matter for preventing harm. Viral content spreads so quickly that a harmful post left unmoderated for even a few hours can reach millions of users and cause lasting reputational or psychological damage.

Automation through artificial intelligence addresses this gap by enabling platforms to scan and classify content in real time, often within milliseconds of upload. Machine learning models trained on labeled datasets can identify patterns associated with hate speech, graphic violence, nudity, spam, and scam content. They do so with a consistency that human reviewers struggle to maintain across eight-hour shifts. AI systems can also operate continuously across time zones, which removes the bottlenecks created by shift changes, fatigue, and the geographic concentration of moderation centers. The result is a baseline layer of automated enforcement that catches the majority of clear-cut violations before any human moderator needs to intervene.

Cost is another driving force behind the push toward automation in content moderation workflows. Maintaining large moderation teams across multiple countries involves significant payroll, training, and mental health support expenses that scale linearly with content volume. AI moderation, by contrast, scales sub-linearly. Once a model is trained and deployed, the marginal cost of processing an additional million posts is a fraction of what it would cost to hire and train enough human reviewers to handle the same workload. Platforms that invest in responsible AI can reduce operational costs while improving the speed and consistency of enforcement decisions across their content ecosystem.
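
The scaling argument can be made concrete with a toy model. In this model, human review has only a per-item cost, while AI moderation has a fixed daily infrastructure cost plus a much smaller per-item cost. Every price below is an invented placeholder chosen for illustration. None of them is a figure from any platform or vendor.

```python
# Toy cost model. All prices are hypothetical placeholders, not real figures.

def daily_cost(posts: int, fixed: float, marginal: float) -> float:
    """Daily cost = fixed cost + marginal cost per post * number of posts."""
    return fixed + marginal * posts


HUMAN = {"fixed": 0.0, "marginal": 0.20}     # assumed ~$0.20 per human review
AI = {"fixed": 2_000.0, "marginal": 0.0005}  # assumed infrastructure + inference cost

for posts in (1_000_000, 10_000_000):
    human = daily_cost(posts, **HUMAN)
    ai = daily_cost(posts, **AI)
    print(f"{posts:>12,} posts | human ${human:>12,.0f} | "
          f"AI ${ai:>8,.0f} | AI per post ${ai / posts:.5f}")
```

Under these assumptions, a tenfold increase in volume multiplies the human cost by ten but the AI cost by only about 2.8, because the fixed cost is spread over more posts. In this simple model the total AI cost still grows linearly. What shrinks is the cost per post.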

Natural Language Processing in Harmful Text Detection

Natural language processing sits at the core of text-based content moderation. It gives AI systems the ability to parse, interpret, and classify written language across a spectrum of harmful categories. Modern NLP models go well beyond simple keyword matching. They use transformer-based architectures to analyze sentence structure, semantic meaning, and contextual cues that distinguish genuine threats from benign conversations. These models detect hate speech, harassment, incitement to violence, self-harm promotion, and spam by understanding the relationships between words rather than relying on static lists of banned terms. The ability to handle natural language processing challenges like slang, coded language, and deliberate misspellings has become a key differentiator among moderation platforms.
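
The weakness of static word lists is easy to demonstrate. The sketch below assumes a one-word hypothetical blocklist and a hand-picked table of look-alike characters. An exact keyword filter misses a lightly obfuscated spelling, and a normalization step catches it. The sketch illustrates only the evasion problem. It does not stand in for the transformer-based classifiers described above, which learn such variations from data rather than from hand-written rules.

```python
import re

BLOCKLIST = {"scam"}  # hypothetical one-term blocklist

# Assumed, non-exhaustive map of look-alike characters used to dodge filters.
LOOKALIKES = str.maketrans({"4": "a", "@": "a", "3": "e", "1": "i",
                            "!": "i", "0": "o", "$": "s", "5": "s"})


def normalize(token: str) -> str:
    """Lowercase, undo look-alike substitutions, strip separators, collapse repeats."""
    token = token.lower().translate(LOOKALIKES)
    token = re.sub(r"[^a-z]", "", token)    # "s.c.a.m" -> "scam"
    return re.sub(r"(.)\1+", r"\1", token)  # "scaaam" -> "scam"


NORMALIZED_BLOCKLIST = {normalize(term) for term in BLOCKLIST}


def naive_match(text: str) -> bool:
    """Exact lookup of whitespace-separated tokens in the blocklist."""
    return any(tok in BLOCKLIST for tok in text.lower().split())


def normalized_match(text: str) -> bool:
    """Lookup after normalizing each token and each blocklist entry."""
    return any(normalize(tok) in NORMALIZED_BLOCKLIST for tok in text.split())


print(naive_match("this is a $c4m"), normalized_match("this is a $c4m"))  # False True
```

Hand-written rules bring their own errors. Collapsing repeated letters would turn "good" into "god", for example, so a blocklist containing the second word would flag the first. This brittleness is part of why learned models displaced rule lists.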

Sentiment analysis and intent classification add further layers of nuance to text-based moderation, going beyond surface-level toxicity detection. A statement that appears neutral may carry hostile intent when analyzed in the context of the surrounding conversation, the user’s history, or the specific community norms of the platform. NLP models trained on diverse, context-rich datasets can tell apart a user who quotes a harmful statement to criticize it and a user who endorses the same language with malicious intent. The gap between keyword filtering and contextual understanding represents the single largest leap that AI has brought to text moderation over the past five years. These systems also support multilingual detection, processing content in dozens of languages without requiring separate moderation teams for each linguistic community.

Computer Vision and Image Classification Systems

Computer vision models trained on millions of labeled images are the foundation of automated image moderation across social media platforms, marketplaces, and messaging applications. These systems use convolutional neural networks and, increasingly, vision transformer architectures to classify images into categories such as nudity, graphic violence, weapons, drug paraphernalia, and hate symbols. Each uploaded image passes through multiple classification layers that assign probability scores to different policy violation categories. High-confidence matches trigger automated removal, and borderline cases are routed to human review queues. The accuracy of these systems has improved dramatically as training datasets have grown to include millions of annotated examples spanning diverse cultural, racial, and geographic contexts.
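
The score-then-route step can be sketched as a pair of thresholds per category. Scores at or above the upper threshold trigger automatic removal, scores between the two thresholds go to a human queue, and anything lower is allowed. The category names and threshold values are hypothetical. In practice they would be tuned per policy and per market.

```python
from typing import Dict, Optional, Tuple

# Hypothetical (remove_at, review_at) thresholds per policy category.
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "nudity": (0.95, 0.60),
    "graphic_violence": (0.97, 0.50),
    "hate_symbol": (0.98, 0.40),
}


def route(scores: Dict[str, float]) -> Tuple[str, Optional[str]]:
    """Map per-category probability scores to (action, triggering category)."""
    review_category = None
    for category, score in scores.items():
        if category not in THRESHOLDS:
            continue  # no policy configured for this label
        remove_at, review_at = THRESHOLDS[category]
        if score >= remove_at:
            return "remove", category  # high-confidence violation
        if score >= review_at and review_category is None:
            review_category = category  # borderline: remember the first hit
    if review_category is not None:
        return "human_review", review_category
    return "allow", None


print(route({"nudity": 0.70, "graphic_violence": 0.10, "hate_symbol": 0.05}))
# ('human_review', 'nudity')
```

A removal in any category outranks a review request in another, so one high-confidence category decides the outcome even when other categories are only borderline.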

Object detection and scene analysis let computer vision systems go beyond simple image classification by identifying specific elements within complex visual compositions. A single image might contain a crowd scene with a small hate symbol on a banner, a product listing with misleading imagery, or a meme that combines innocuous visual elements with harmful text overlays. Modern computer vision applications can isolate and analyze each of these elements independently, applying different policy rules to different parts of the same image. This granular approach reduces the false positives that plagued earlier systems, which often flagged entire images based on a single misleading visual feature.

Optical character recognition (OCR) integrated into computer vision pipelines has become essential for moderating images that embed text, including memes, screenshots of conversations, and manipulated documents. Bad actors frequently embed hate speech, threats, or misinformation in images specifically to evade text-based NLP filters. They know that many platforms historically treated text and image moderation as separate systems. Modern multimodal models close this gap by extracting and analyzing embedded text alongside visual features. The result is a unified classification pipeline that treats each piece of content as a coherent whole rather than a collection of disconnected signals. This convergence of text and image analysis is a significant step toward closing the evasion gaps that bad actors have exploited for years.
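
Late fusion is a simple way to see why embedded text matters. The visual content and the OCR-extracted text are scored separately, and the image is treated as being as risky as its riskiest modality. This is a deliberately simplified stand-in for the joint multimodal models described above. The text classifier, the OCR output, and the phrase list are hypothetical placeholders.

```python
from dataclasses import dataclass

FLAGGED_PHRASES = {"example threat phrase"}  # hypothetical placeholder


@dataclass
class ImageSignals:
    visual_score: float  # assumed output of an upstream image classifier, 0..1
    ocr_text: str        # assumed output of an upstream OCR step


def text_score(text: str) -> float:
    """Toy stand-in for an NLP classifier run on the OCR text."""
    lowered = text.lower()
    return 0.9 if any(p in lowered for p in FLAGGED_PHRASES) else 0.05


def fused_score(signals: ImageSignals) -> float:
    """Late fusion: the item is as risky as its riskiest modality."""
    return max(signals.visual_score, text_score(signals.ocr_text))


meme = ImageSignals(visual_score=0.08, ocr_text="Example THREAT phrase over a cat photo")
print(meme.visual_score >= 0.5, fused_score(meme) >= 0.5)  # False True
```

A vision-only pipeline would pass this meme. Adding the OCR branch is enough to flag it.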

Video and Audio Moderation at Scale

Text and image moderation have matured considerably over the past decade. Video and audio moderation, however, present a distinct set of challenges that push AI systems to their computational and analytical limits. A single minute of video contains well over a thousand individual frames (1,800 at 30 frames per second), an audio track that may include speech, music, and sound effects, and contextual metadata. All of these must be analyzed together to determine whether the content violates platform policies. AI models designed for video moderation combine frame sampling, temporal analysis, and audio transcription to identify harmful content within livestreams, uploaded clips, and short-form videos. Real-time video moderation costs orders of magnitude more compute than text or image scanning. For that reason, most platforms use tiered screening that applies lighter checks during upload and deeper analysis after publication.
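
Frame sampling is what makes tiered screening affordable. The sketch below computes which frames a sampler would inspect if it checked one frame every few seconds at upload and used a denser interval after publication. Both intervals are assumptions. Real systems may also add scene-change detection and audio analysis on top.

```python
from typing import Dict, List

# Hypothetical sampling intervals (seconds between inspected frames) per tier.
TIERS: Dict[str, float] = {"upload": 2.0, "post_publication": 0.5}


def sample_frame_indices(duration_s: float, fps: float, every_s: float) -> List[int]:
    """Indices of the frames to inspect when sampling one frame every `every_s` seconds."""
    total_frames = int(duration_s * fps)
    step = max(1, round(every_s * fps))
    return list(range(0, total_frames, step))


duration, fps = 60, 30  # one minute at 30 frames per second = 1,800 frames
for tier, every in TIERS.items():
    frames = sample_frame_indices(duration, fps, every)
    print(f"{tier}: {len(frames)} of {int(duration * fps)} frames")
# upload: 30 of 1800 frames
# post_publication: 120 of 1800 frames
```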

Audio moderation has become a critical frontier as voice-based platforms, podcasts, and live audio features have spread across the digital ecosystem in recent years. AI-powered speech-to-text transcription converts spoken language into text that can then be processed through standard NLP moderation pipelines. Separate audio classifiers can detect non-speech indicators of harmful content such as gunshots, screaming, or distress signals. Platforms hosting live audio conversations must also moderate in real time. Even a few seconds of delay between detection and action can expose listeners to harmful material before the system intervenes. Audio analysis is one facet of AI’s broader impact on media and content creation, and it shows how deeply these technologies now reach into every content format.

How Hybrid AI-Human Models Improve Accuracy

Standalone automated systems have clear limits. The most effective content moderation strategies in 2026 combine AI’s speed and scale with human judgment and contextual reasoning in structured hybrid workflows. In this model, AI systems handle the initial screening of all incoming content. They automatically remove clear-cut violations and route ambiguous or borderline cases to trained human moderators who can apply nuanced judgment. This division of labor lets platforms process enormous volumes of content efficiently. It also preserves decision quality on cases that need cultural awareness, contextual understanding, or policy interpretation, which AI cannot yet deliver reliably. The hybrid approach acknowledges that neither purely automated nor purely manual moderation is sufficient on its own.

Human moderators in hybrid systems do more than review content flagged by AI. Through continuous feedback loops, they also play a critical role in training and improving the automated models. When a human reviewer overturns an AI decision, that correction is fed back into the training pipeline, which helps the model learn from its mistakes and refine its classification boundaries over time. This iterative process creates a virtuous cycle: human expertise steadily improves AI accuracy, and improved AI accuracy reduces the volume of ambiguous cases that require human attention. Platforms that invest in structured feedback mechanisms report measurable improvements in both precision and recall over successive model generations.
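
The feedback loop can be sketched as a small recorder. Every human review is counted, and each overturned AI decision is kept as a new labeled example for the next training run. The class and field names are hypothetical. A real pipeline would also store policy versions, reviewer IDs, and timestamps.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class FeedbackLoop:
    """Collects human corrections of AI decisions for the next retraining cycle."""
    reviewed: int = 0
    corrections: List[Tuple[str, str]] = field(default_factory=list)

    def record_review(self, text: str, model_label: str, human_label: str) -> None:
        self.reviewed += 1
        if human_label != model_label:
            # The reviewer's label becomes ground truth for this example.
            self.corrections.append((text, human_label))

    def overturn_rate(self) -> float:
        return len(self.corrections) / self.reviewed if self.reviewed else 0.0


loop = FeedbackLoop()
loop.record_review("quoted slur in a news report", "violating", "allowed")
loop.record_review("targeted harassment", "violating", "violating")
loop.record_review("spam link farm", "violating", "violating")
print(loop.overturn_rate(), loop.corrections)
```

Tracking the overturn rate across model versions is one way to check whether the loop is actually shrinking the share of decisions humans need to correct.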

The organizational design of hybrid moderation teams matters as much as the technology itself, because poorly structured workflows can cancel out the benefits of combining AI with human judgment. Effective hybrid systems assign different tiers of human reviewers to different types of content. Generalist moderators handle routine escalations, and specialized experts handle categories like child safety, terrorism, and coordinated inauthentic behavior. Platforms that treat human moderators as interchangeable with AI classification outputs miss the point of the hybrid model entirely. Accuracy and fairness, which neither component can achieve in isolation, depend on clear escalation pathways, consistent policy guidelines, and regular calibration sessions between human teams and AI engineers.
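
Tiered escalation can be expressed as a routing table. Specialist categories go to dedicated queues, and everything else goes to a generalist queue. The queue and category names are hypothetical.

```python
from collections import defaultdict, deque
from typing import Deque, Dict

# Hypothetical mapping from high-risk categories to specialist reviewer queues.
SPECIALIST_QUEUES: Dict[str, str] = {
    "child_safety": "child_safety_specialists",
    "terrorism": "counter_terrorism_specialists",
    "coordinated_inauthentic_behavior": "integrity_investigators",
}
GENERALIST_QUEUE = "generalist_review"


class EscalationRouter:
    """First-in, first-out queues of escalated items, one per reviewer tier."""

    def __init__(self) -> None:
        self.queues: Dict[str, Deque[str]] = defaultdict(deque)

    def escalate(self, item_id: str, category: str) -> str:
        queue = SPECIALIST_QUEUES.get(category, GENERALIST_QUEUE)
        self.queues[queue].append(item_id)
        return queue

    def next_item(self, queue: str) -> str:
        return self.queues[queue].popleft()


router = EscalationRouter()
print(router.escalate("post-1", "terrorism"))  # counter_terrorism_specialists
print(router.escalate("post-2", "spam"))       # generalist_review
```

Keeping the routing table as data rather than code lets policy teams adjust it during calibration sessions without touching the model.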

The economics of hybrid moderation also deserve attention, because its cost structure differs significantly from both fully automated and fully manual approaches. Hybrid models require investment in both AI infrastructure and human talent. Even so, the total cost per moderation decision tends to be lower than at either extreme. AI handles the majority of straightforward cases at minimal marginal cost, while humans spend their time on the small percentage of decisions that actually require human cognition. Industry analysts suggest that AI governance trends and regulations are pushing platforms toward hybrid models, both for accuracy and to meet regulatory requirements for human oversight in automated decision-making.

Training Data and the Foundation of Moderation AI

The quality and composition of training data set the upper bound on what any AI content moderation system can achieve, however sophisticated the model architecture may be. Models learn to identify harmful content by analyzing millions of examples that human annotators have labeled as violating or complying with specific content policies. If the training data is skewed toward certain languages, cultural contexts, or content categories, the resulting model will perform unevenly across the range of content it encounters in production. Building representative, balanced, and regularly updated training datasets is one of the most resource-intensive and strategically important investments a platform can make in its moderation infrastructure.
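
One practical check for skew is to compare each language's share of the training set with its share of production traffic. The sketch flags any language whose training share is below half of its production share. The 0.5 ratio and the sample counts are arbitrary assumptions.

```python
from collections import Counter
from typing import Dict, Iterable, List


def shares(langs: Iterable[str]) -> Dict[str, float]:
    """Fraction of items per language code."""
    counts = Counter(langs)
    total = sum(counts.values())
    return {lang: n / total for lang, n in counts.items()}


def coverage_gaps(train_langs: Iterable[str], prod_langs: Iterable[str],
                  ratio: float = 0.5) -> List[str]:
    """Languages whose training share is below `ratio` times their production share."""
    train, prod = shares(train_langs), shares(prod_langs)
    return sorted(lang for lang, p in prod.items() if train.get(lang, 0.0) < ratio * p)


train = ["en"] * 80 + ["es"] * 15 + ["sw"] * 5                # hypothetical training counts
prod = ["en"] * 50 + ["es"] * 20 + ["sw"] * 20 + ["tl"] * 10  # hypothetical traffic mix
print(coverage_gaps(train, prod))  # ['sw', 'tl']
```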

Annotation quality is a persistent challenge. Content moderation policies are inherently subjective, and they evolve as societal norms, legal requirements, and platform priorities shift. Two annotators reviewing the same piece of content may reach different conclusions depending on their cultural background, personal experiences, and interpretation of ambiguous policy language. Platforms address this variability by assigning multiple annotators to each item and using consensus mechanisms to determine the final label. That approach multiplies the cost and time required to build each training dataset. Many moderation AI systems quietly fail in the gap between policy intent and annotation execution, producing models that enforce an imprecise version of the rules they were designed to uphold.
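
The consensus step can be sketched as a majority vote with an agreement floor. Items where annotators agree strongly enough get a label. The rest are sent for adjudication instead of being forced into the training set. The two-thirds floor is an assumption. Weighted votes and chance-corrected agreement measures such as Fleiss' kappa are common alternatives.

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def consensus(labels: Sequence[str],
              min_agreement: float = 2 / 3) -> Tuple[Optional[str], float]:
    """Return (majority label, or None if agreement is too low; agreement ratio)."""
    (label, votes), = Counter(labels).most_common(1)
    agreement = votes / len(labels)
    return (label if agreement >= min_agreement else None), agreement


print(consensus(["violating", "violating", "allowed"]))  # ('violating', 0.6666666666666666)
print(consensus(["violating", "allowed", "unsure"]))     # (None, 0.3333333333333333)
```

With three annotators per item, labeling costs roughly three times as much as single annotation. That is the cost multiplication described above.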

Data freshness is equally critical. Bad actors' tactics evolve continuously, creating a constant arms race between evasion techniques and detection capabilities. A model trained on data from twelve months ago may not recognize the latest slang, coded language, or visual manipulation techniques that have emerged since its training cutoff. Platforms must build continuous data pipelines that feed newly identified violations, emerging trends, and feedback from human moderators into the training cycle on a regular cadence. Keeping training data current is closely tied to broader concerns about adversarial machine learning, in which sophisticated actors deliberately probe and exploit the boundaries of automated detection systems.
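
A crude early-warning signal for stale training data is the share of tokens in recently flagged content that never appear in the training vocabulary. The vocabulary and sample texts are hypothetical, and so is any alert threshold a platform might set. Production systems might instead track drift in embeddings or score distributions, but the idea is the same.

```python
from typing import Iterable, Set


def unseen_token_rate(recent_texts: Iterable[str], training_vocab: Set[str]) -> float:
    """Fraction of tokens in recent content that were never seen during training."""
    tokens = [tok for text in recent_texts for tok in text.lower().split()]
    if not tokens:
        return 0.0
    unseen = sum(1 for tok in tokens if tok not in training_vocab)
    return unseen / len(tokens)


vocab = {"buy", "cheap", "followers", "now"}  # hypothetical training vocabulary
recent = ["buy cheap f0ll0wers now", "buy cheap followers now"]
print(unseen_token_rate(recent, vocab))  # 0.125
```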

Bias and Fairness Challenges in Automated Systems

Algorithmic bias in content moderation systems is not a theoretical concern. It is a documented reality that affects the speech and visibility of millions of users on global platforms every day. Studies have shown that NLP models trained primarily on English-language data tend to flag African American Vernacular English and other dialects at disproportionately higher rates than standard written English, which effectively penalizes speakers from marginalized linguistic communities. A 2026 study from the University of Queensland found that large language models used for moderation encode political biases. Models assigned an ideological persona judged criticism of their in-group more harshly than equivalent criticism directed at opposing groups. These findings underscore the risk that bias and discrimination in AI systems can silently reshape public discourse by amplifying some voices and suppressing others.

The root causes of moderation bias extend beyond training data to the design choices embedded in model architectures, evaluation metrics, and deployment configurations. Optimizing a model for precision, which minimizes false positives, produces a system that lets more harmful content through. Optimizing for recall, which minimizes false negatives, produces a system that over-enforces and catches more legitimate speech in its net. Choosing between these two objectives is inherently a policy decision. Yet engineering teams often make it without sufficient input from policy experts, affected communities, or external auditors. Platforms that fail to treat moderation accuracy as a sociotechnical challenge rather than a purely technical one are likely to perpetuate, or even amplify, the biases present in their training data and evaluation frameworks.
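
The precision-recall trade-off shows up directly when the same scores are evaluated at two decision thresholds. The scores and ground-truth labels below are made up for illustration.

```python
from typing import Sequence, Tuple


def precision_recall(scores: Sequence[float], labels: Sequence[bool],
                     threshold: float) -> Tuple[float, float]:
    """Precision and recall when every item scoring >= threshold is flagged."""
    flagged = [s >= threshold for s in scores]
    tp = sum(f and y for f, y in zip(flagged, labels))
    fp = sum(f and not y for f, y in zip(flagged, labels))
    fn = sum(not f and y for f, y in zip(flagged, labels))
    precision = tp / (tp + fp) if tp + fp else 1.0  # convention when nothing is flagged
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
labels = [True, True, False, True, False, True, False, False]  # True = real violation
print(precision_recall(scores, labels, 0.85))  # (1.0, 0.5)
print(precision_recall(scores, labels, 0.50))  # (0.6, 0.75)
```

Lowering the threshold from 0.85 to 0.50 catches three of the four violations instead of two. The cost is that two of the five flagged items are now legitimate speech. Where to set that dial is the policy decision described above.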

Addressing bias requires a combination of technical interventions and institutional reforms. Diversifying training data is a necessary starting point, but it is not enough on its own. Regular bias audits that use disaggregated performance metrics across demographic, linguistic, and geographic segments can reveal disparities that aggregate accuracy numbers obscure. External review boards, independent auditors, and public transparency reports add accountability mechanisms that help platforms identify and correct systemic patterns of unfair enforcement. The platforms that take bias mitigation most seriously treat it as an ongoing operational discipline rather than a one-time fix applied during model development. These efforts connect to the broader ethical implications of advanced AI, which extend well beyond content moderation into hiring, lending, healthcare, and criminal justice.
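
A disaggregated audit can be as simple as computing the false positive rate separately for each group instead of once for all traffic. The group names and counts are hypothetical. The point is that an overall rate can look acceptable while one group is flagged far more often than another.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, bool, bool]  # (group, predicted_violation, actual_violation)


def false_positive_rates(records: Iterable[Record]) -> Dict[str, float]:
    """False positive rate per group: benign items flagged / all benign items."""
    flagged_benign: Dict[str, int] = defaultdict(int)
    benign: Dict[str, int] = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            benign[group] += 1
            flagged_benign[group] += int(predicted)
    return {group: flagged_benign[group] / n for group, n in benign.items()}


records = ([("dialect_a", True, False)] * 1 + [("dialect_a", False, False)] * 9
           + [("dialect_b", True, False)] * 4 + [("dialect_b", False, False)] * 6)
print(false_positive_rates(records))  # {'dialect_a': 0.1, 'dialect_b': 0.4}
print(false_positive_rates(("all", p, a) for _, p, a in records))  # {'all': 0.25}
```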

Cultural and Linguistic Nuance in Global Moderation

Moderating content across hundreds of languages and cultural contexts remains one of the most persistent gaps in AI moderation systems deployed globally. A phrase that is harmless banter in one culture may carry deeply offensive connotations in another. Humor, sarcasm, and irony are notoriously difficult for AI models to interpret even within a single language. Platforms operating in dozens of countries must either build language-specific models for each market or rely on multilingual models that trade depth of understanding for breadth of coverage. The resource gap between high-resource languages like English, Spanish, and Mandarin and low-resource languages spoken by smaller populations creates a two-tiered moderation system, in which users in some regions receive significantly less protection than others.

Local context also shapes the definition of harmful content in ways that universal policy frameworks, applied uniformly across all markets, cannot capture. Political speech that is protected in one jurisdiction may constitute incitement in another. Religious expression that is routine in one culture may violate blasphemy laws in a neighboring country. Visual content that is acceptable in one region may be deeply offensive in another. Platforms navigating this complexity must balance global consistency with local sensitivity, often relying on regional policy teams and local human reviewers to calibrate AI systems for specific markets. No current technology can fully resolve the tension between scalable, uniform AI moderation and the irreducible diversity of human culture. Understanding AI-driven misinformation and manipulation also requires acknowledging that misinformation itself is defined differently across cultural and political boundaries.

Deepfake Detection and AI-Generated Content Threats

As deepfake detection becomes part of content moderation, platforms face a rapidly escalating challenge that tests the limits of current AI capabilities. According to UK government estimates, deepfake files surged from 500,000 in 2023 to a projected 8 million in 2025. The quality of synthetic media has improved to the point where 68 percent of deepfakes are now considered nearly indistinguishable from genuine media. Human detection rates for high-quality video deepfakes stand at just 24.5 percent, and a 2024 meta-analysis of 56 studies found that overall human deepfake detection accuracy averages only 55.54 percent across all modalities. These statistics make clear that AI-powered detection tools are not optional. They are essential for any platform serious about moderating manipulated media at scale.

The arms race between deepfake creators and detection systems mirrors the broader adversarial dynamics of content moderation: each improvement in detection prompts new evasion techniques from bad actors. Detection models trained in controlled laboratory environments often lose 45 to 50 percent of their accuracy when deployed against real-world deepfakes, which differ from the training data in subtle but significant ways. This degradation highlights the importance of continuous model retraining and diverse training datasets. It also argues for multi-signal detection that analyzes metadata, behavioral patterns, and provenance information as well as visual artifacts. Platforms that rely on a single detection method are vulnerable to adversarial attacks designed specifically to exploit that method’s weaknesses.

AI-generated text, images, and video pose a distinct but related challenge for moderation systems that were originally designed to evaluate human-created content. Generative AI tools can produce convincing misinformation articles, fabricated product reviews, synthetic profile photos, and manipulated evidence at a scale and speed that overwhelm traditional detection approaches. The spread of these tools has forced platforms to invest in new classification layers that distinguish AI-generated from human-created content, a task that grows harder as generative models improve. Understanding what deepfake technology is, and how to spot a deepfake, has become essential knowledge for platform operators and individual users navigating an increasingly synthetic media landscape.

Digital watermarking and content provenance standards such as C2PA offer a complementary approach to detection by embedding verifiable metadata into content at the point of creation. C2PA is developed by the Coalition for Content Provenance and Authenticity, which Adobe co-founded. Provenance systems do not try to decide after the fact whether a piece of content is synthetic. Instead, they attach cryptographic signatures that record the origin, editing history, and authenticity of media files throughout their lifecycle. Adoption of these standards remains uneven across platforms and content creation tools. Even so, combining detection-based and provenance-based approaches is the most promising path toward managing the deepfake threat at scale. Integrating watermarking with AI detection systems could eventually shift the default assumption from “content is real until proven fake” to “content is unverified until its provenance is confirmed.”
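
The core idea of signed provenance can be sketched with Python's standard library: bind a hash of the media to a signed record of its origin and edits. This is not the C2PA manifest format. C2PA relies on certificate-based public-key signatures carried with the file, whereas this sketch substitutes a shared-secret HMAC for brevity. The key and field names are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict, List

SIGNING_KEY = b"demo-key-not-for-production"  # hypothetical shared secret


def _sign(claim: Dict) -> str:
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(media: bytes, origin: str, edits: List[str]) -> Dict:
    """Bind a hash of the media to its declared origin and edit history."""
    claim = {"sha256": hashlib.sha256(media).hexdigest(), "origin": origin, "edits": edits}
    return {"claim": claim, "signature": _sign(claim)}


def verify(media: bytes, manifest: Dict) -> bool:
    """True only if the manifest is unaltered and still matches the media bytes."""
    claim = manifest["claim"]
    if not hmac.compare_digest(_sign(claim), manifest["signature"]):
        return False  # the provenance record itself was tampered with
    return claim["sha256"] == hashlib.sha256(media).hexdigest()


photo = b"\x89PNG...raw image bytes..."
manifest = make_manifest(photo, origin="camera-app", edits=["crop"])
print(verify(photo, manifest), verify(photo + b"!", manifest))  # True False
```

A missing or failed manifest does not prove content is fake. It only means the provenance is unverified, which is the shift in default assumption anticipated above.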

Regulatory Frameworks Shaping Platform Accountability

The regulatory landscape for content moderation has changed dramatically as governments worldwide move from voluntary industry self-regulation to binding legal frameworks with significant enforcement mechanisms. The European Union’s Digital Services Act has been fully applicable since February 2024. It requires Very Large Online Platforms, those with more than 45 million monthly active users in the EU, to implement content moderation systems, conduct annual risk assessments, publish transparency reports, and provide user appeal mechanisms. In just two years, users appealed 165 million content moderation decisions through platforms’ internal mechanisms. Thirty percent of those appeals resulted in reversals, meaning platforms reversed nearly 50 million decisions. The UK’s Online Safety Act imposes similar obligations, with a particular focus on protecting children from harmful content.
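
The two appeal figures above are consistent with each other. A quick check using only those figures, with integer arithmetic to avoid rounding noise:

```python
appealed_decisions = 165_000_000
reversal_rate_pct = 30

reversed_decisions = appealed_decisions * reversal_rate_pct // 100
print(f"{reversed_decisions:,}")  # 49,500,000
```

The result, 49.5 million, matches the "nearly 50 million" reversals cited.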

Enforcement has moved from theoretical to tangible, and regulators are imposing real financial penalties on platforms that fail to meet their obligations under the new content moderation frameworks. The European Commission fined X €45 million for maintaining a non-compliant advertising repository. It has also opened formal proceedings against several platforms, including TikTok and Meta, over potential DSA violations. In the first half of 2025, out-of-court dispute settlement bodies reviewed more than 1,800 disputes about content on Facebook, Instagram, and TikTok in the EU, and overturned platform decisions in 52 percent of closed cases. These enforcement actions show that AI governance rules are no longer aspirational policy documents. They are active legal instruments with financial and operational consequences for non-compliant platforms.

The geopolitical dimension of content moderation regulation adds another layer of complexity, because jurisdictions pursue divergent approaches that create compliance challenges for global platforms. The United States has historically favored a lighter regulatory touch anchored in Section 230 protections. The EU, the UK, Australia, and several Asian countries, by contrast, have enacted prescriptive frameworks with specific technical and procedural requirements. Platforms operating across multiple jurisdictions must navigate conflicting legal obligations, varying definitions of illegal content, and different standards for transparency and accountability. Because global regulation is so fragmented, no single configuration of an AI system can comply with every jurisdiction at once. Platforms are therefore forced to build modular, jurisdiction-aware moderation architectures. The interplay between regulation, the dangers of AI, and ongoing legal change continues to shape the strategic direction of content moderation investment across the industry.
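
A modular, jurisdiction-aware design can be sketched as a global baseline policy plus per-jurisdiction override tables. The same classifier output can then map to different actions depending on where the content is viewed. The jurisdictions, categories, and actions below are placeholders and do not describe any real country's law.

```python
from typing import Dict

# Hypothetical global baseline: action per content category.
BASELINE: Dict[str, str] = {
    "spam": "remove",
    "graphic_violence": "label",
    "political_ad": "allow",
}

# Hypothetical per-jurisdiction overrides layered on top of the baseline.
OVERRIDES: Dict[str, Dict[str, str]] = {
    "jurisdiction_a": {"political_ad": "label"},
    "jurisdiction_b": {"graphic_violence": "geo_block"},
}


def action_for(category: str, jurisdiction: str) -> str:
    """Jurisdiction override if present, else the global baseline, else human review."""
    local = OVERRIDES.get(jurisdiction, {})
    return local.get(category, BASELINE.get(category, "human_review"))


print(action_for("political_ad", "jurisdiction_a"))  # label
print(action_for("political_ad", "jurisdiction_c"))  # allow
```

Supporting a new jurisdiction then means adding an override table rather than retraining or forking the classifier.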

Person Rights, Appeals, and Transparency

Regulatory mandates aside, the design of user-facing appeal and transparency mechanisms determines whether the communities governed by AI moderation see it as fair or arbitrary. Users whose content is removed or whose accounts are restricted need clear, specific explanations: why the action was taken, which policy was violated, and what appeal options are available. The EU Digital Services Act codified these requirements into law. It requires platforms to provide a detailed statement of reasons for each decision that restricts a user’s content or account, and to maintain internal complaint-handling systems that affected users can access. Platform transparency reports now disclose the number of moderation actions taken, the proportion handled by automated systems, and the accuracy and error rates of those systems.
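
A statement of reasons is essentially a structured record attached to each restrictive decision. The dataclass below is a hypothetical shape, loosely modeled on the items Article 17 of the DSA asks for: the restriction imposed, the facts relied on, whether automated means were used, the legal or contractual ground, and the available redress. The field names are invented for this sketch, and the regulation text should be consulted for the exact requirements.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class StatementOfReasons:
    decision_id: str
    restriction: str               # e.g. "removal", "visibility_reduction", "account_suspension"
    facts_and_circumstances: str   # what the decision relied on
    automated_detection: bool      # was the content detected by automated means?
    automated_decision: bool       # was the decision itself taken by automated means?
    ground: str                    # legal provision or terms-of-service clause relied on
    redress_options: Tuple[str, ...] = (
        "internal_complaint", "out_of_court_dispute_settlement", "judicial_redress")

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


sor = StatementOfReasons(
    decision_id="d-001",
    restriction="removal",
    facts_and_circumstances="Image matched the graphic violence policy (score 0.98).",
    automated_detection=True,
    automated_decision=True,
    ground="Terms of service, section 4.2 (hypothetical)",
)
print(sor.to_json())
```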

The effectiveness of appeal mechanisms depends on whether they are staffed and resourced to handle the volume of disputes that AI moderation generates, or whether they function as procedural checkboxes that rarely result in meaningful review. In practice, many platforms report that the majority of appeals are still processed through automated systems that re-evaluate the original AI decision using the same or similar models, raising questions about whether the appeal constitutes genuine reconsideration or simply a second pass through the same algorithmic logic. Meaningful appeal requires human review, and platforms that invest in accessible, responsive, and genuinely independent appeal processes earn significantly higher user trust than those that automate the appeal pipeline as well. The growing focus on the lack of transparency in AI reflects broader societal demands for accountability in automated decision-making systems that affect fundamental rights like freedom of expression.
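
One way to make appeals genuinely independent is to enforce, in the routing logic itself, that an appeal never returns to the model or person that made the original call. The Python sketch below assumes hypothetical decision records whose `decided_by` field names either a model (`model:...`) or a human reviewer (`human:...`); the identifiers and the least-loaded assignment rule are illustrative choices, not any platform’s documented process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModerationDecision:
    content_id: str
    decided_by: str  # hypothetical IDs: "model:<name>" or "human:<id>"
    action: str


def route_appeal(decision, reviewer_load):
    """Assign an appeal to the least-loaded human reviewer who did not
    make the original decision.

    reviewer_load maps human reviewer IDs to their open-case counts.
    Models are never candidates, so an appeal is always a human review.
    """
    eligible = {r: n for r, n in reviewer_load.items()
                if f"human:{r}" != decision.decided_by}
    if not eligible:
        raise LookupError("no independent human reviewer available")
    return min(eligible, key=eligible.get)


original = ModerationDecision("post-42", "model:toxicity-v3", "remove")
print(route_appeal(original, {"r1": 5, "r2": 2}))
```

Because only human reviewers are candidates, an appeal against an automated removal cannot be "reviewed" by re-running the same classifier.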

The Mental Health Impact on Human Moderators

The psychological toll on human content moderators who review graphic, violent, and disturbing material daily remains one of the most underreported costs of content moderation, even as AI systems absorb an increasing share of the workload. Moderators tasked with evaluating content involving child exploitation, self-harm, terrorism, and extreme violence face documented risks of post-traumatic stress disorder, anxiety, depression, and compassion fatigue that can persist long after they leave the role. Major platforms have faced lawsuits and regulatory scrutiny over inadequate mental health support for moderation workers, many of whom are employed through third-party contractors in countries with weaker labor protections. The moral responsibility for these harms is shared among platforms, outsourcing firms, and regulators, who collectively determine the working conditions of the people standing between harmful content and public audiences.

AI moderation has the potential to reduce human exposure to the most graphic content categories by automatically filtering out clear-cut violations before they reach human review queues, but this benefit is not guaranteed without deliberate system design. Poorly designed hybrid workflows can actually concentrate the most disturbing content in human review queues, as AI systems handle the routine cases and route only the most ambiguous, and often the most graphic, material to human reviewers. Effective workflow design ensures that content routing considers not just moderation difficulty but also the psychological impact on reviewers, incorporating regular content rotation, exposure limits, and mandatory breaks into the moderation process. Platforms that prioritize the wellbeing of their moderation workforce recognize that AI ethics and emerging laws must address not only the users affected by moderation decisions but also the workers who make those decisions possible.
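
Exposure-aware routing can be expressed as a simple assignment rule. This Python sketch assumes a hypothetical per-reviewer daily cap on high-severity items: graphic items go to whoever has the most remaining budget, items are deferred rather than pushing anyone past the cap, and routine items go to the most-exposed reviewer as a crude form of rotation. The cap values and the two-level severity scheme are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Reviewer:
    name: str
    graphic_cap: int       # hypothetical daily cap on graphic items
    graphic_seen: int = 0

    def remaining(self) -> int:
        return self.graphic_cap - self.graphic_seen


def assign(severity: str, reviewers: List[Reviewer]) -> Optional[str]:
    """Route one item while treating exposure caps as hard limits."""
    if severity != "graphic":
        # Rotation: hand routine work to the most-exposed reviewer.
        return max(reviewers, key=lambda r: r.graphic_seen).name
    available = [r for r in reviewers if r.remaining() > 0]
    if not available:
        return None  # defer the item instead of exceeding anyone's cap
    chosen = max(available, key=lambda r: r.remaining())
    chosen.graphic_seen += 1
    return chosen.name


team = [Reviewer("ana", graphic_cap=2), Reviewer("ben", graphic_cap=1)]
print([assign("graphic", team) for _ in range(4)])
print(assign("routine", team))
```

A production scheduler would also account for breaks, deadlines, and reviewer specialization; the point is only that exposure limits can be hard constraints in the router rather than guidelines applied after the fact.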

The emerging consensus in the trust and safety industry is that AI should be deployed specifically to shield human moderators from the most harmful content categories, rather than simply to reduce headcount. Organizations that use AI primarily as a cost-cutting tool without reinvesting savings in moderator wellbeing and support structures are failing to honor the duty of care they owe to workers who perform essential but psychologically hazardous tasks. Industry-wide standards for moderator working conditions, including maximum exposure limits, mandatory counseling, and fair compensation, are gradually taking shape through a combination of regulatory pressure, worker advocacy, and growing public awareness of the human cost of keeping the internet safe.

Enterprise and E-Commerce Applications

Content moderation AI has expanded well beyond social media into enterprise and e-commerce environments where the stakes include brand reputation, legal liability, and consumer trust in digital marketplaces. Online marketplaces like Amazon, eBay, and Alibaba deploy AI moderation systems to screen product listings for counterfeit goods, misleading descriptions, prohibited items, and fraudulent seller activity across millions of listings updated daily. These systems must balance the need to protect consumers from harmful or illegal products against the commercial imperative to avoid falsely removing legitimate listings, which can damage seller relationships and reduce marketplace revenue. The integration of moderation AI into e-commerce platforms reflects a growing recognition that content safety is a business-critical function, not an afterthought.

Enterprise communication platforms, customer review sites, and online forums also rely on AI moderation to maintain civil discourse and comply with legal obligations, although their moderation requirements differ from those of consumer social networks in important ways. A corporate collaboration tool like Slack or Microsoft Teams must moderate for harassment, discrimination, and data leakage without impeding the free flow of workplace communication the tool is designed to facilitate. Review platforms must balance the detection of fake reviews, competitor sabotage, and incentivized postings against the need to preserve genuine customer feedback, even when that feedback is negative. The diversity of moderation contexts across enterprise and e-commerce applications shows that AI content moderation is not a one-size-fits-all technology but a configurable framework that must be tailored to the specific policies, risks, and user expectations of each platform.

Measuring Moderation Performance and Accuracy Metrics

Quantifying the performance of AI content moderation systems requires a framework of metrics that captures not just accuracy but also the speed, consistency, and fairness of enforcement decisions across content categories and user populations. Precision, which measures the proportion of flagged content that actually violates policy, and recall, which measures the proportion of violating content that the system successfully identifies, are the foundational metrics every moderation team tracks. The tension between these two measures creates a fundamental trade-off: raising the decision threshold typically increases precision, reducing false positives, but lets more harmful content slip through, while lowering it increases recall, catching more violations but also sweeping up more legitimate content in the process.
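
The trade-off is easiest to see by sweeping a single decision threshold over classifier scores. The Python sketch below uses made-up scores and labels (1 = violates policy) purely for illustration; every item scoring at or above the threshold is flagged.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when every item scoring >= threshold is flagged.

    labels: 1 (or True) if the item actually violates policy.
    By convention here, precision is 1.0 when nothing is flagged.
    """
    flagged = [s >= threshold for s in scores]
    tp = sum(1 for f, y in zip(flagged, labels) if f and y)
    fp = sum(1 for f, y in zip(flagged, labels) if f and not y)
    fn = sum(1 for f, y in zip(flagged, labels) if not f and y)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Toy data: classifier scores and ground-truth labels (1 = violation).
SCORES = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
LABELS = [1, 1, 0, 1, 0, 1, 0, 0]

for t in (0.25, 0.50, 0.85):
    p, r = precision_recall(SCORES, LABELS, t)
    print(f"threshold={t:.2f}  precision={p:.2f}  recall={r:.2f}")
```

On this toy data, a threshold of 0.50 gives precision 0.60 and recall 0.75, while 0.85 gives precision 1.00 and recall 0.50: the stricter setting removes only true violations but misses half of them.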

Latency, the time between content upload and moderation decision, is an increasingly important performance metric as platforms expand into real-time formats like livestreaming, live audio, and ephemeral stories. A moderation system that achieves 99 percent accuracy but takes 30 minutes to render a decision is functionally useless for a live video platform where harmful content can reach millions of viewers within seconds of broadcast. Real-time moderation demands not only accurate models but also optimized inference pipelines, edge computing infrastructure, and intelligent prioritization algorithms that route the highest-risk content through the fastest processing paths. The push toward real-time moderation has driven significant investment in hardware acceleration, model compression, and on-device AI processing capabilities.
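
Routing the highest-risk content through the fastest path is, at its core, a priority-queue problem. The Python sketch below scores each item as risk multiplied by a format urgency factor, so a moderately risky livestream can jump ahead of a riskier static post. The urgency weights are hypothetical values chosen for illustration.

```python
import heapq
import itertools

# Hypothetical urgency multipliers: formats where delay costs the most
# get a boost. Values are illustrative, not tuned.
FORMAT_URGENCY = {"livestream": 2.0, "live_audio": 1.5, "story": 1.2, "post": 1.0}


class TriageQueue:
    """Serve the item with the highest risk x urgency first."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker: FIFO among equal scores

    def push(self, content_id, risk, fmt):
        priority = risk * FORMAT_URGENCY.get(fmt, 1.0)
        # heapq is a min-heap, so store the negated priority.
        heapq.heappush(self._heap, (-priority, next(self._arrival), content_id))

    def pop(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


queue = TriageQueue()
queue.push("post-1", 0.9, "post")
queue.push("live-1", 0.6, "livestream")
queue.push("story-1", 0.5, "story")
queue.push("post-2", 0.9, "post")
print([queue.pop() for _ in range(len(queue))])
```

Here `live-1` (0.6 × 2.0 = 1.2) is served before the two posts scored 0.9, and the arrival counter keeps equally scored items in first-in, first-out order.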

Fairness metrics that measure performance disparities across demographic, linguistic, and geographic segments are becoming as important as aggregate accuracy numbers in evaluating moderation system quality. A system that achieves 95 percent overall accuracy but only 85 percent accuracy for content in Arabic or Hindi is failing the users who rely on moderation in those languages. Disaggregated performance reporting, regular bias audits, and external benchmarking against industry standards are essential practices for platforms that want to understand not just how well their moderation AI works on average but how equitably it serves every segment of their user base. The movement toward fairness-aware evaluation, alongside growing privacy concerns around AI, reflects a maturing industry that increasingly recognizes equity as a core dimension of moderation quality.
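
Disaggregated reporting amounts to computing the same metric per segment instead of only in aggregate. This Python sketch uses toy records of (language, predicted, actual) to show how an acceptable-looking overall accuracy can hide a weaker segment; the numbers are invented for illustration.

```python
from collections import defaultdict


def audit(records):
    """Overall and per-group accuracy, plus the gap to the worst group.

    records: a list of (group, predicted_violation, actual_violation).
    """
    correct, total = defaultdict(int), defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    per_group = {g: correct[g] / total[g] for g in total}
    overall = sum(correct.values()) / sum(total.values())
    gap = overall - min(per_group.values())
    return overall, per_group, gap


# Invented toy records: 10 English items (9 correct), 10 Arabic (7 correct).
records = (
    [("en", True, True)] * 9 + [("en", True, False)]
    + [("ar", False, False)] * 7 + [("ar", False, True)] * 3
)
overall, per_group, gap = audit(records)
print(f"overall={overall:.2f} per_group={per_group} gap={gap:.2f}")
```

On this toy data, overall accuracy is 0.80, but English scores 0.90 and Arabic 0.70, a gap the aggregate figure alone would hide.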

Emerging Technologies in Proactive Content Safety

The next generation of AI content moderation is shifting from reactive removal to proactive prevention, using predictive models and behavioral analysis to identify harmful content and coordinated inauthentic behavior before they cause measurable damage. Rather than waiting for content to be uploaded and then scanning it with policy-violation classifiers, proactive systems analyze user behavior patterns, account creation signals, network dynamics, and content trajectory predictions to flag potential threats before harmful material is published. Meta’s recent AI deployment, for example, includes systems that can detect account takeovers by recognizing suspicious patterns such as sudden location changes combined with profile edits, identifying threats that human reviewers would miss. This shift from content-centric to behavior-centric moderation represents a fundamental change in the architecture of platform safety systems.
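
Behavior-centric signals are usually combined so that no single weak signal triggers action on its own. The Python sketch below illustrates the pattern described above, a login from an unfamiliar country followed shortly by profile edits, using hypothetical event types and a one-hour window. It is not Meta’s system, whose implementation is not described here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    at: datetime
    kind: str          # hypothetical types: "login" or "profile_edit"
    country: str = ""  # only meaningful for logins


def looks_like_takeover(events, home_country, window=timedelta(hours=1)):
    """True if a login from an unfamiliar country is followed by a
    profile edit within `window`. Either signal alone is not enough."""
    events = sorted(events, key=lambda e: e.at)
    for i, login in enumerate(events):
        if login.kind != "login" or login.country == home_country:
            continue
        for later in events[i + 1:]:
            if later.kind == "profile_edit" and later.at - login.at <= window:
                return True
    return False


t0 = datetime(2025, 1, 1, 12, 0)
session = [Event(t0, "login", "BR"), Event(t0 + timedelta(minutes=5), "profile_edit")]
print(looks_like_takeover(session, home_country="US"))
```

A real system would score many such signals probabilistically; the conjunction rule here only shows why combining signals reduces false alarms compared with acting on a location change alone.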

Explainable AI is another emerging technology with direct implications for content moderation transparency and accountability in regulatory environments that demand justification for automated decisions. Current deep learning models used for content classification operate largely as black boxes, producing decisions without clear explanations of which features, patterns, or contextual signals drove the outcome. Explainable moderation systems aim to provide human-readable rationales for each decision, enabling users to understand why their content was flagged, moderators to assess whether the AI’s reasoning aligns with policy intent, and regulators to audit whether the system’s logic complies with legal requirements. Explainability in content moderation is not merely a technical convenience but a regulatory necessity, as frameworks like the EU Digital Services Act increasingly require platforms to justify automated decisions that affect user rights. Advances in AI-based election misinformation detection further illustrate how proactive, explainable systems can protect democratic processes from coordinated manipulation campaigns.
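
One simple route to human-readable rationales is an inherently interpretable model whose per-feature contributions can be reported directly. The Python sketch below uses a logistic model with hand-set, hypothetical features and weights; deep classifiers would instead need post-hoc attribution methods, which this sketch does not implement.

```python
import math

# Hypothetical features and hand-set weights, for illustration only.
WEIGHTS = {
    "slur_detected": 2.5,
    "targets_protected_group": 1.5,
    "quoted_or_reported_speech": -2.0,
    "prior_strikes": 0.5,
}
BIAS = -2.0


def explain(features):
    """Return (violation probability, rationale) from a logistic model.

    The rationale lists each nonzero feature contribution
    (weight x value), largest absolute effect first.
    """
    contributions = {
        name: WEIGHTS[name] * value
        for name, value in features.items() if name in WEIGHTS
    }
    logit = BIAS + sum(contributions.values())
    probability = 1 / (1 + math.exp(-logit))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    rationale = [f"{name}: {c:+.2f}" for name, c in ranked if c != 0]
    return probability, rationale


p, why = explain({"slur_detected": 1, "targets_protected_group": 1, "prior_strikes": 2})
print(f"{p:.2f}", why)
```

For this item the rationale lists `slur_detected: +2.50` first, giving a reviewer or auditor a concrete starting point; setting `quoted_or_reported_speech` to 1 lowers the score, and the rationale shows by how much.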

Ethical Boundaries and the Censorship Debate

The tension between content moderation and freedom of expression represents the most politically charged dimension of AI-driven moderation, with critics on both sides arguing that platforms moderate either too aggressively or too leniently depending on the political and cultural context. Over-enforcement, where AI systems remove legitimate speech because it superficially resembles policy-violating content, can silence political dissent, suppress marginalized voices, and create a chilling effect that discourages users from engaging in public discourse. Under-enforcement, where systems fail to catch harmful content because they are calibrated too conservatively, can expose users to harassment, hate speech, and dangerous misinformation that erodes trust in the platform and causes real-world harm. The calibration of AI moderation systems is inherently a political act, even when it is framed as a technical optimization problem.

The role of governments in shaping moderation policies introduces additional ethical complexity, as state demands for content removal can serve legitimate law enforcement purposes or function as instruments of political censorship, depending on the jurisdiction and context. Platforms receiving government orders to remove content must evaluate whether those orders comply with local law, international human rights standards, and the platform’s own policies, a determination that becomes far more complex when operating across dozens of jurisdictions with conflicting legal frameworks. The EU Digital Services Act requires platforms to document and report government takedown requests, creating a transparency mechanism that can help identify patterns of abuse, but compliance with the reporting requirement does not resolve the underlying ethical question of when complying with a government order constitutes appropriate law enforcement cooperation versus complicity in censorship.

The most difficult ethical questions in AI content moderation are not technical but political: who decides what constitutes harmful content, whose values are embedded in the classification models, and who bears responsibility when automated systems make errors that affect millions of users. These questions cannot be answered by algorithms alone, and platforms that attempt to position their moderation decisions as neutral, objective, or apolitical are obscuring the value judgments that inevitably shape every content policy and every model training decision. Meaningful engagement with the censorship debate requires platforms to be transparent about the values that inform their policies, the trade-offs they accept between safety and expression, and the mechanisms available to users and civil society to challenge and influence moderation decisions. The broader discussion around the ethical implications of advanced AI provides essential context for understanding how moderation decisions connect to larger questions about the role of technology in democratic societies.

What Lies Ahead for AI-Driven Moderation

As the industry looks toward the remainder of this decade, the trajectory of AI content moderation points toward increasingly sophisticated, multimodal, and context-aware systems that blur the line between content analysis and behavioral intelligence. Foundation models capable of understanding text, images, video, and audio simultaneously will enable moderation systems that evaluate each piece of content as a unified whole rather than processing each modality through separate pipelines that may miss cross-modal signals. These multimodal models will also improve the detection of coordinated inauthentic behavior by analyzing not just individual pieces of content but the network of relationships, timing patterns, and behavioral signatures that distinguish organic activity from manufactured campaigns. The convergence of content-level and network-level analysis will give platforms a more comprehensive view of platform health than any single modality can provide.

Regulatory pressure will continue to intensify, with new jurisdictions adopting content moderation frameworks and existing regulators expanding the scope and rigor of enforcement across the digital ecosystem. The EU Digital Services Act is already being studied as a template by regulators in Latin America, Southeast Asia, and Africa, and the global trend toward mandatory transparency reporting, independent auditing, and user appeal rights shows no signs of reversing. Platforms that build compliance into the architecture of their moderation systems from the outset will be better positioned to adapt to new requirements than those that treat regulation as an afterthought bolted onto existing infrastructure. Investment in modular, jurisdiction-aware moderation architectures will become a competitive differentiator for platforms seeking to operate across multiple regulatory environments.

The economics of content moderation are also shifting as AI capabilities improve and regulatory requirements increase, creating new cost structures that will reshape the competitive landscape of the platform industry. Smaller platforms that lack the resources to build proprietary moderation systems will increasingly rely on third-party moderation APIs offered by providers like Google, Microsoft, Amazon, and specialized vendors, democratizing access to AI moderation but also creating dependency on a small number of infrastructure providers. The content moderation API market is already highly competitive, with services offering text, image, and video moderation at prices as low as $0.001 per request, making enterprise-grade moderation accessible to startups and mid-sized platforms that could not afford to build equivalent systems in-house. This democratization of moderation technology is a positive development, but it also raises questions about the concentration of moderation decision-making in the hands of a few API providers whose models and policies shape enforcement across thousands of downstream platforms.
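
At per-request pricing, the budget arithmetic is straightforward. This Python sketch uses the $0.001 per request figure from the text as a default; actual prices vary by provider and modality, and the `requests_per_item` parameter (for example, several sampled frames per video) is an illustrative assumption.

```python
def monthly_api_cost(daily_items, price_per_request=0.001,
                     requests_per_item=1, days=30):
    """Rough monthly spend for a pay-per-request moderation API.

    price_per_request defaults to the $0.001 figure cited in the text;
    requests_per_item is an assumption (e.g. sampled frames per video).
    """
    return daily_items * requests_per_item * price_per_request * days


print(f"text:  ${monthly_api_cost(1_000_000):,.0f}")
print(f"video: ${monthly_api_cost(50_000, requests_per_item=10):,.0f}")
```

One million text items a day at one request each comes to about $30,000 over 30 days; 50,000 videos a day at ten requests each comes to about $15,000.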

The ultimate vision for AI content moderation is not a world where machines make all the decisions but one where AI handles the scale problem while humans retain meaningful authority over the values, policies, and accountability structures that govern online discourse. The platforms that will lead the next era of content moderation are those that invest in AI systems sophisticated enough to handle the volume, velocity, and complexity of modern digital content while preserving the human judgment, cultural sensitivity, and ethical reasoning that no algorithm can replicate. The path forward demands collaboration among technologists, policymakers, civil society organizations, and affected communities to build moderation systems that are not only effective and efficient but also fair, transparent, and accountable to the people they are designed to protect.

Key Insights

- About 87 percent of marketers used generative AI in at least one recurring workflow in Q1 2026, showing how the volume of AI-generated content is compounding the moderation challenge facing platforms.

- The global content moderation solutions market was valued at USD 11.88 billion in 2025 and is projected to grow toward USD 29.77 billion by 2035, underscoring the scale of investment flowing into automated moderation infrastructure.

- Meta’s AI content enforcement experiments detected and mitigated 5,000 scam attempts per day that human teams could not identify, demonstrating AI’s ability to catch threats invisible to manual review.

- A University of Queensland study found that large language models used for moderation encode political biases that shift detection thresholds based on ideological alignment, introducing partisan unfairness into automated moderation decisions.

- Under the EU Digital Services Act, platforms reversed nearly 50 million content moderation decisions that users appealed, with 30 percent of 165 million appeals resulting in changed outcomes.

- Human detection accuracy for high-quality video deepfakes stands at just 24.5 percent, making AI-powered detection tools indispensable for platforms facing a projected 8 million deepfake files by 2025.

- Out-of-court dispute settlement bodies under the DSA reviewed over 1,800 disputes involving Facebook, Instagram, and TikTok in the first half of 2025, overturning platform decisions in 52 percent of closed cases.

- The AI content moderation market was valued at USD 1.5 billion in 2024 and is projected to reach USD 6.8 billion by 2033, expanding at a CAGR of 18.6 percent.
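
The growth figures above can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The Python sketch below applies it to the two market estimates and also checks the DSA appeals figure; it uses only the numbers quoted in the list.

```python
def implied_cagr(start, end, years):
    """Compound annual growth rate implied by start and end values."""
    return (end / start) ** (1 / years) - 1


# AI content moderation market: USD 1.5B (2024) -> USD 6.8B (2033).
ai_market = implied_cagr(1.5, 6.8, 2033 - 2024)
# Content moderation solutions market: USD 11.88B (2025) -> USD 29.77B (2035).
solutions_market = implied_cagr(11.88, 29.77, 2035 - 2025)
# DSA appeals: 30 percent of 165 million appeals changed the outcome.
changed_outcomes = 0.30 * 165_000_000

print(f"{ai_market:.1%}  {solutions_market:.1%}  {changed_outcomes / 1e6:.1f}M")
```

The first estimate implies roughly 18.3 percent a year, close to the stated 18.6 percent (the small difference may come from rounding or the base year used in the underlying report); the second implies roughly 9.6 percent a year; and 30 percent of 165 million is 49.5 million, consistent with "nearly 50 million".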

These insights collectively paint a picture of an industry undergoing rapid transformation, where AI-driven moderation is scaling to meet unprecedented content volumes while simultaneously confronting persistent challenges in bias, fairness, and regulatory compliance. The investment trajectory confirms that platforms and regulators alike view automated moderation as critical infrastructure rather than an optional enhancement. The tension between AI’s growing technical capabilities and its documented limitations in areas like cultural nuance and political neutrality will define the next phase of the content moderation industry. Platforms that balance technological investment with a genuine commitment to transparency, accountability, and human oversight will be best positioned to earn user trust and maintain regulatory compliance. The data also shows that the gap between AI capability and human judgment is narrowing in some areas while remaining stubbornly wide in others, particularly deepfake detection and cross-cultural content evaluation.

| Dimension | AI-Only Moderation | Human-Only Moderation | Hybrid AI-Human Moderation |
|---|---|---|---|
| Transparency | Limited; decisions made by black-box models without clear rationale | High; human reviewers can articulate reasons for each decision | Moderate to high; AI provides initial classification, humans add context and rationale |
| Participation | No user participation in the decision process; automated enforcement | Users can engage with reviewers through appeals | Users benefit from AI speed plus human-reviewed appeal pathways |
| Trust | Low among users due to perceived arbitrariness and lack of explanation | Higher but limited by inconsistency between reviewers | Highest when AI decisions are explainable and human appeal is accessible |
| Decision Making | Fast and consistent, but rigid and prone to contextual errors | Slow and contextual, but inconsistent and not scalable | Balanced; AI handles volume, humans resolve ambiguity and edge cases |
| Misinformation | Detects known patterns but struggles with novel narratives and satire | Better at nuanced evaluation but too slow to contain viral spread | Most effective; AI handles volume while humans evaluate emerging narratives |
| Service Delivery | Scales almost without limit, but quality degrades for low-resource languages | High quality but limited by geography, language, and staffing | Scales with AI while maintaining quality through targeted human intervention |
| Accountability | Weak; algorithmic decisions are difficult to audit at scale | Strong; individual reviewers can be held accountable | Strong when combined with transparency reports, audits, and appeal mechanisms |

Real-World Examples

Walmart’s AI-Powered Marketplace Content Moderation

Walmart deployed an AI-driven content moderation system across its online marketplace to screen product listings for counterfeit goods, misleading claims, prohibited items, and policy violations at a scale that manual review teams could not sustain. The system uses computer vision and NLP models to analyze product images, titles, descriptions, and seller metadata against a continuously updated database of known violations and emerging threat patterns. According to Walmart’s technology team, the automated screening system reduced the average time to detect and remove policy-violating listings by 65 percent while lowering false positive rates by 30 percent compared with the previous approach of manual review plus rules. Critics note that the system still struggles with sophisticated counterfeit listings that closely mimic legitimate products, and smaller sellers have reported legitimate listings being incorrectly removed during the initial rollout, a friction point the company continues to address through feedback-driven model refinement.

YouTube’s AI-Driven Video Moderation Pipeline

YouTube’s moderation infrastructure processes over 500 hours of video uploaded every minute using a multi-layered AI pipeline that combines frame-level image classification, audio transcription, and behavioral signals to identify policy violations across categories including hate speech, violence, child safety, and misleading content. The system applies lightweight classifiers at upload time for immediate triage and routes borderline content through progressively more sophisticated models before escalating the most ambiguous cases to human review teams. According to YouTube’s transparency reports, the platform removed over 8 million videos in Q1 2025, with automated systems detecting more than 95 percent of removed videos before any user flagged them. The system’s main limitation is its difficulty with context-dependent content, including satire, educational material discussing harmful topics, and news reporting that includes graphic imagery for journalistic purposes, all of which require contextual judgment that current AI models cannot reliably provide.
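
The tiered triage described above can be sketched as a two-stage cascade with a human fallback. In this Python sketch the classifiers are stand-in callables returning a violation probability, and the thresholds `low` and `high` are hypothetical; YouTube’s actual models and thresholds are not given in this text.

```python
from typing import Callable

Classifier = Callable[[str], float]  # returns a violation probability


def cascade(item: str, fast: Classifier, heavy: Classifier,
            low: float = 0.2, high: float = 0.9) -> str:
    """Tiered triage: a cheap model screens everything, an expensive
    model sees only the borderline band, and whatever remains uncertain
    is escalated to human review."""
    for model in (fast, heavy):
        p = model(item)
        if p < low:
            return "allow"
        if p >= high:
            return "remove"
    return "human_review"


# Stub scores; the heavy model only ever needs scores for "c" and "d".
fast_scores = {"a": 0.05, "b": 0.95, "c": 0.50, "d": 0.50}
heavy_scores = {"c": 0.97, "d": 0.60}
for item in "abcd":
    print(item, cascade(item, lambda x: fast_scores[x], lambda x: heavy_scores[x]))
```

Because the expensive model only sees the borderline band, most traffic pays only for the fast model. Reusing the same thresholds at both stages is a simplification; each stage would normally be tuned separately.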

TikTok’s Real-Time Content Safety System

TikTok developed a proprietary AI moderation system that operates in near real time to screen short-form videos during and immediately after upload, combining visual analysis, audio transcription, text overlay detection, and behavioral pattern recognition into a unified classification pipeline. The platform’s moderation challenge is amplified by the speed at which content goes viral on its recommendation-driven feed, where a single video can reach millions of viewers within minutes of posting if the algorithm selects it for broad distribution. According to TikTok’s community guidelines enforcement reports, the platform removed over 170 million videos in the first half of 2025 for violating community guidelines, with 99.3 percent of those removals occurring before the content was reported by users. The primary criticism of TikTok’s moderation system centers on inconsistent enforcement across regions, with civil society groups documenting instances where identical content is removed in one country but left untouched in another, suggesting that the platform’s moderation policies are shaped by local political pressures as much as by consistent global standards.

Case Studies

Meta’s AI Content material Enforcement Transformation

Meta embarked on a multi-year transition to replace significant portions of its third-party content moderation workforce with advanced AI systems capable of handling enforcement tasks like catching scams, removing illegal media, and detecting coordinated inauthentic behavior across Facebook, Instagram, and Threads. The problem Meta sought to address was a fundamental mismatch between the scale of content flowing through its platforms and the capacity of human moderation teams to review that content accurately and consistently across dozens of languages and content categories. The solution involved deploying large language models and multimodal AI classifiers that process billions of content items daily, with early experiments showing that one AI tool detected and mitigated 5,000 phishing attempts per day that human teams had been unable to identify. According to Meta’s engineering blog, the system also reduced reports of fake celebrity profiles by over 80 percent and doubled detection of adult sexual solicitation content that violated Meta’s policies. Critics point to the timing of this transition, which coincided with Meta’s loosening of content moderation rules and its shift away from third-party fact-checking toward a community-notes model, raising concerns that the AI deployment is partly a cost-cutting measure dressed up as a technological upgrade rather than a genuine improvement in user safety.

The EU Digital Services Act Transparency Framework

The European Union’s implementation of the Digital Services Act created the world’s first comprehensive, legally binding transparency framework for platform content moderation, addressing the longstanding problem of opaque and unaccountable automated enforcement systems. Before the DSA, platforms published voluntary transparency reports with inconsistent methodologies, incomparable metrics, and no external verification, making it impossible for researchers, regulators, or users to evaluate whether moderation systems were functioning fairly. The DSA’s solution mandated standardized transparency reporting, a central transparency database for content moderation decisions, structured data access for researchers, user appeal rights with specific procedural requirements, and independent auditing of the largest platforms. According to the European Commission’s DSA impact assessment, platforms reported more than 9 billion content moderation decisions in the first half of 2025, with 99 percent taken proactively on the basis of platforms’ own terms of service rather than government orders. The framework’s limitation is its enforcement asymmetry: while the DSA applies stringent requirements to the largest platforms, smaller services face lighter obligations, and the fragmentation of Digital Services Coordinator authorities across 27 member states has created uneven enforcement that some critics describe as regulatory arbitrage waiting to happen.

The University of Queensland AI Bias in Moderation Research

Researchers at the University of Queensland’s School of Electrical Engineering and Computer Science conducted a groundbreaking study examining how large language models used for content moderation encode and reproduce political biases when conditioned with ideological personas, revealing systemic fairness risks in automated moderation systems. The question under investigation was whether AI moderation tools, increasingly deployed by platforms to make high-stakes decisions about speech, could maintain ideological neutrality when processing politically charged content. The research team tested six LLMs, including vision models, by asking them to moderate thousands of examples of hateful text and memes through 400 ideologically diverse AI personas selected from a database of 200,000 synthetic identities. According to the findings published in ACM Transactions on Intelligent Systems and Technology, ideological personas shifted detection thresholds and introduced partisan bias, with models judging criticism of their assigned in-group more harshly than equivalent criticism directed at opposing groups. The researchers concluded that larger models exhibited stronger ideological cohesion rather than neutralizing biases, suggesting that scaling model size alone will not solve the fairness problem and that independent human oversight remains essential for high-stakes moderation decisions.

Frequently Asked Questions About AI in Content Moderation

AI systems use natural language processing models trained on millions of labeled examples to identify patterns of hate speech, including explicit slurs, coded language, and contextual hostility. Modern transformer-based models analyze sentence meaning and intent rather than relying solely on keyword lists, allowing them to catch subtle forms of harassment that simple filters would miss.

Yes, multilingual NLP models can process content in dozens of languages within a single classification pipeline, although performance varies significantly between high-resource languages like English and low-resource languages with smaller training datasets. Platforms typically achieve the best multilingual coverage by combining general models with language-specific classifiers and local human reviewers who can evaluate cultural context.
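
The hybrid setup described above can be sketched as a small router: prefer a language-specific classifier when one exists, fall back to a general multilingual model otherwise, and send uncertain scores to a reviewer who speaks that language. This is a minimal illustration, not any platform’s actual pipeline; the `specialists` table, the toy scoring functions, and the `(0.4, 0.8)` uncertainty band are all hypothetical.

```python
from typing import Callable

# A classifier maps text to a violation score in [0, 1]
Classifier = Callable[[str], float]


def classify_multilingual(text: str, lang: str,
                          specialists: dict[str, Classifier],
                          general: Classifier,
                          uncertain: tuple[float, float] = (0.4, 0.8)) -> dict:
    """Prefer a language-specific model; fall back to the general multilingual one.

    Scores inside the (hypothetical) uncertainty band go to a reviewer for `lang`.
    """
    model_name = lang if lang in specialists else "general"
    model = specialists.get(lang, general)
    score = model(text)
    low, high = uncertain
    return {
        "model": model_name,
        "score": score,
        "send_to_reviewer": low <= score < high,
        "reviewer_language": lang,
    }


# Toy stand-ins for real models (hypothetical)
def german_fraud_model(text: str) -> float:
    return 0.9 if "betrug" in text.lower() else 0.1


def general_model(text: str) -> float:
    return 0.55  # a low-resource language often lands in the uncertain band


specialists = {"de": german_fraud_model}
de_result = classify_multilingual("Das ist Betrug", "de", specialists, general_model)
sw_result = classify_multilingual("Habari yako", "sw", specialists, general_model)
print(de_result)
print(sw_result)
```

Routing on the language code keeps the specialist models independent, so a platform can add a classifier for one language without retraining the general model.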

Proactive moderation uses AI to scan and classify content before or immediately after it is published, catching violations automatically without relying on user reports. Reactive moderation responds to user-submitted reports of harmful content, relying on flags from the community to identify material that requires review and potential removal.
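
The difference between the two approaches comes down to what triggers a review. The sketch below shows both paths feeding one review queue: a proactive hook that scores every post at publish time, and a reactive hook that queues a post once it collects enough user reports. The classifier, the `0.9` score threshold, and the three-report threshold are hypothetical placeholders.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Post:
    post_id: str
    text: str
    report_count: int = 0


@dataclass
class ModerationQueue:
    classify: Callable[[str], float]  # hypothetical model returning a violation score
    auto_flag_threshold: float = 0.9  # proactive: flag at publish time above this score
    report_threshold: int = 3         # reactive: queue after this many user reports
    review_queue: list = field(default_factory=list)

    def on_publish(self, post: Post) -> None:
        """Proactive path: score every post as it is published."""
        if self.classify(post.text) >= self.auto_flag_threshold:
            self.review_queue.append((post.post_id, "proactive"))

    def on_report(self, post: Post) -> None:
        """Reactive path: act only once enough users have reported the post."""
        post.report_count += 1
        if post.report_count == self.report_threshold:  # queue exactly once
            self.review_queue.append((post.post_id, "reactive"))


def toy_classifier(text: str) -> float:
    return 0.95 if "scam" in text.lower() else 0.1


q = ModerationQueue(classify=toy_classifier)
a = Post("a", "Click this SCAM link")
b = Post("b", "Borderline joke the model misses")
q.on_publish(a)
q.on_publish(b)
for _ in range(3):
    q.on_report(b)
print(q.review_queue)  # [('a', 'proactive'), ('b', 'reactive')]
```

Post `b` slips past the classifier but is still caught once the community reports it, which is why most platforms run both paths side by side.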

Accuracy varies significantly by content category, language, and platform, but leading systems achieve precision and recall rates above 90 percent for well-defined categories like nudity and graphic violence. Accuracy drops considerably for context-dependent categories like hate speech, sarcasm, and misinformation, where cultural nuance and subjective judgment are required.
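
Precision and recall measure different failures. Precision is the share of flagged items that really violate policy (low precision means over-removal), while recall is the share of actual violations the system catches (low recall means harmful content stays up). A minimal calculation from confusion-matrix counts, using hypothetical evaluation numbers:

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical counts from a labeled evaluation set for a single category:
# 920 violations correctly flagged, 40 benign posts wrongly flagged, 60 violations missed
p, r = precision_recall(tp=920, fp=40, fn=60)
print(f"precision={p:.3f} recall={r:.3f}")  # precision=0.958 recall=0.939
```

Both numbers clear 90 percent here, but they must be computed separately for each category and language; a single blended figure can hide a weak category.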

Users typically have access to an appeal mechanism through which they can challenge an AI-generated moderation decision via the platform’s internal review process. Under regulations like the EU Digital Services Act, platforms must provide specific explanations for moderation actions and offer accessible pathways for users to request reconsideration.

Platforms deploy specialized AI models that analyze visual artifacts, inconsistencies in facial movements, audio-visual synchronization mismatches, and metadata anomalies to identify synthetically generated or manipulated media. Detecting high-quality deepfakes remains difficult: models perform well under laboratory conditions but suffer significant accuracy drops against adversarial, real-world deepfakes.

Human moderators handle edge cases, appeals, and high-stakes decisions that AI cannot reliably resolve, including content that requires cultural context, subjective policy interpretation, or particular sensitivity in categories like child safety and terrorism. They also provide critical feedback that improves AI model accuracy over time through continuous training loops.
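
One common way to divide work between AI and people is confidence-based routing: high-confidence violations are removed automatically, the uncertain middle band goes to human review, and sensitive categories always reach a person before removal. The sketch below assumes a classifier that returns a violation score; the thresholds and the `SENSITIVE` set are a hypothetical policy, and `record_review` is an illustrative stand-in for the feedback loop that turns moderator decisions into new training labels.

```python
from enum import Enum


class Action(Enum):
    AUTO_REMOVE = "auto_remove"
    HUMAN_REVIEW = "human_review"
    ALLOW = "allow"


# Hypothetical policy: these categories are never removed without a person
SENSITIVE = {"child_safety", "terrorism"}


def route(category: str, score: float,
          remove_at: float = 0.95, review_at: float = 0.6) -> Action:
    """Map a classifier score to an action; thresholds are illustrative."""
    if score < review_at:
        return Action.ALLOW
    if category in SENSITIVE or score < remove_at:
        return Action.HUMAN_REVIEW
    return Action.AUTO_REMOVE


# Moderator decisions become labeled examples for the next training run
training_labels: list[tuple[str, str, bool]] = []


def record_review(content_id: str, category: str, is_violation: bool) -> None:
    training_labels.append((content_id, category, is_violation))


print(route("spam", 0.97))         # Action.AUTO_REMOVE
print(route("terrorism", 0.97))    # Action.HUMAN_REVIEW
print(route("hate_speech", 0.70))  # Action.HUMAN_REVIEW
print(route("hate_speech", 0.30))  # Action.ALLOW
record_review("post-42", "hate_speech", True)
```

Moving `review_at` down sends more content to people and raises recall at the cost of reviewer workload; moving `remove_at` up does the same for automated removals.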

The DSA requires platforms operating in the EU to implement transparent content moderation systems, publish detailed transparency reports, and provide users with clear explanations and appeal rights for moderation decisions; the very largest platforms must also conduct annual risk assessments. Non-compliance can result in fines of up to 6 percent of global annual turnover.
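
The 6 percent figure is a ceiling, not a fixed penalty; regulators set the actual amount below it. A quick calculation of that ceiling for a hypothetical provider, using integer euros to avoid floating-point rounding:

```python
def max_dsa_fine_eur(annual_worldwide_turnover_eur: int, cap_percent: int = 6) -> int:
    """Upper bound on a DSA fine: cap_percent of annual worldwide turnover, in whole euros."""
    return annual_worldwide_turnover_eur * cap_percent // 100


# Hypothetical provider with EUR 10 billion in annual worldwide turnover
print(f"EUR {max_dsa_fine_eur(10_000_000_000):,}")  # EUR 600,000,000
```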

Video moderation faces several challenges: the enormous computational cost of analyzing thousands of frames per minute (a 30-frames-per-second stream produces 1,800 frames every minute), the difficulty of maintaining real-time processing speeds for livestreamed content, and the complexity of interpreting the visual, audio, and textual elements of a single video simultaneously.
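
One common way to cut that cost, assumed here for illustration rather than drawn from any particular platform, is to sample frames at a fixed interval instead of classifying every frame. The arithmetic for a one-minute clip sampled once per second:

```python
def frames_per_video(duration_s: float, fps: float, sample_every_s: float) -> tuple[int, int]:
    """Return (frames in the video, frames analyzed if one frame is sampled per interval)."""
    total_frames = int(duration_s * fps)
    # One frame at the start of each full interval: t = 0, s, 2s, ...
    sampled_frames = int(duration_s // sample_every_s)
    return total_frames, sampled_frames


total, sampled = frames_per_video(duration_s=60, fps=30, sample_every_s=1.0)
print(total, sampled)                                  # 1800 60
print(f"frames skipped: {1 - sampled / total:.1%}")    # frames skipped: 96.7%
```

The trade-off is coverage: a violating shot shorter than the sampling interval can fall between samples, so sparse sampling is usually paired with audio and text signals or denser sampling once something suspicious is detected.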

Training data quality directly determines moderation accuracy, because models learn to identify violations from labeled examples curated by human annotators. Biased, incomplete, or outdated training datasets produce models that perform unevenly across content categories, languages, and demographic groups, creating systematic fairness gaps.
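
Those gaps only become visible when evaluation results are broken down by group instead of averaged. A minimal per-group audit over hypothetical labeled results, grouped here by language code:

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, bool, bool]]) -> dict[str, float]:
    """records: (group, predicted_violation, true_violation) triples."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, predicted, truth in records:
        total[group] += 1
        correct[group] += int(predicted == truth)
    return {g: correct[g] / total[g] for g in total}


# Hypothetical evaluation results: the model was trained mostly on English data
results = [
    ("en", True, True), ("en", False, False), ("en", True, True), ("en", False, False),
    ("sw", True, False), ("sw", False, True), ("sw", True, True), ("sw", False, False),
]
acc = accuracy_by_group(results)
gap = max(acc.values()) - min(acc.values())
print(acc, f"gap={gap:.2f}")  # {'en': 1.0, 'sw': 0.5} gap=0.50
```

Averaged together, these results show 75 percent accuracy, which hides the fact that one language performs at chance level.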

Yes, sophisticated actors use techniques like character substitution, visual manipulation, steganography, and adversarial perturbation to craft content that evades AI detection while remaining harmful to human viewers. This creates an ongoing arms race between moderation systems and evasion techniques that requires continuous model updates.
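
For the character-substitution tactic specifically, a standard countermeasure is to normalize text before classification. The sketch below uses Python’s real `unicodedata.normalize("NFKC", ...)` to fold full-width and compatibility characters, strips zero-width characters, maps a small hypothetical table of look-alike digits and symbols, and removes separators inserted inside words. Production systems use much larger confusable tables, such as the Unicode confusables data from UTS #39; this example does not address visual manipulation, steganography, or adversarial perturbation.

```python
import re
import unicodedata

# Hypothetical look-alike map; real systems use far larger confusable tables
LOOKALIKES = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a",
                            "5": "s", "7": "t", "@": "a", "$": "s"})
ZERO_WIDTH = re.compile("[\u200b\u200c\u200d\u2060\ufeff]")
INNER_SEPARATORS = re.compile(r"(?<=\w)[.\-*](?=\w)")


def normalize(text: str) -> str:
    """Undo common character-substitution tricks before classification."""
    text = unicodedata.normalize("NFKC", text)   # fold full-width / compatibility forms
    text = ZERO_WIDTH.sub("", text)              # drop invisible zero-width characters
    text = text.casefold().translate(LOOKALIKES) # lowercase, then map look-alikes
    return INNER_SEPARATORS.sub("", text)        # "s.c.a.m" -> "scam"


print(normalize("fr\u200bee m0ney, cl1ck h3re"))  # free money, click here
print(normalize("ＳＣＡＭ"), normalize("$c@m"), normalize("s.c.a.m"))  # scam scam scam
```

Because the look-alike map also rewrites legitimate digits and symbols, the normalized string is best fed to the classifier alongside the original text rather than replacing it.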

Explainable AI refers to moderation systems that provide human-readable rationales for their classification decisions, enabling users, moderators, and regulators to understand which features and contextual signals drove a particular enforcement action. This capability is increasingly important for regulatory compliance and user trust.
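
The simplest case of an explanation is a linear model, where each token’s contribution to the score is exactly its learned weight, so the top contributors can be reported directly as the rationale. The weights and bias below are hypothetical; for deep models, post-hoc attribution methods such as SHAP or integrated gradients approximate the same kind of per-feature breakdown.

```python
import math
import re

# Hypothetical per-token weights from a linear (logistic-regression-style) classifier
WEIGHTS = {"idiot": 2.1, "stupid": 1.4, "you": 0.3, "thanks": -1.2}
BIAS = -1.5


def explain(text: str, top_k: int = 3) -> tuple[float, list[tuple[str, float]]]:
    """Score text and return the tokens that moved the score the most."""
    contributions: dict[str, float] = {}
    for token in re.findall(r"[a-z']+", text.lower()):
        if token in WEIGHTS:
            # Each occurrence adds its weight to the logit
            contributions[token] = contributions.get(token, 0.0) + WEIGHTS[token]
    logit = BIAS + sum(contributions.values())
    probability = 1 / (1 + math.exp(-logit))  # sigmoid turns the logit into a score
    top = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_k]
    return probability, top


prob, reasons = explain("You stupid idiot!")
rationale = ", ".join(f"{tok} ({w:+.1f})" for tok, w in reasons)
print(f"score p={prob:.2f}, top reasons: {rationale}")
# score p=0.91, top reasons: idiot (+2.1), stupid (+1.4), you (+0.3)
```

Negative weights appear in the rationale too, so a reviewer can see when a token such as "thanks" pulled the score down.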

Content moderation APIs allow smaller platforms to access enterprise-grade AI moderation capabilities by sending content to a cloud-based service that returns classification results and confidence scores. Providers like Google, Microsoft, Amazon, and specialized vendors offer these services with per-request pricing, making automated moderation accessible without in-house AI infrastructure.
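
On the client side, the platform still decides what counts as a violation by applying its own thresholds to the returned scores. The response below is a hypothetical JSON shape invented for illustration; every real provider uses its own field names and category taxonomy, so consult the specific API’s documentation before parsing.

```python
import json

# Hypothetical response shape; real provider APIs use their own field names
RESPONSE = """
{"id": "req-123",
 "categories": [
   {"name": "hate", "confidence": 0.12},
   {"name": "sexual", "confidence": 0.91},
   {"name": "violence", "confidence": 0.47}
 ]}
"""

# Per-category thresholds chosen by the platform, not by the provider
THRESHOLDS = {"hate": 0.5, "sexual": 0.8, "violence": 0.7}


def violations(raw: str, thresholds: dict[str, float]) -> list[str]:
    """Return categories whose confidence meets the platform's threshold."""
    data = json.loads(raw)
    flagged = []
    for category in data["categories"]:
        limit = thresholds.get(category["name"])
        # Categories without a configured threshold are ignored
        if limit is not None and category["confidence"] >= limit:
            flagged.append(category["name"])
    return flagged


print(violations(RESPONSE, THRESHOLDS))  # ['sexual']
```

Keeping thresholds in platform-side configuration lets a service tighten or relax enforcement per category without changing providers.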

The future points toward multimodal foundation models that analyze text, images, video, and audio simultaneously, proactive behavioral analysis that prevents harm before it occurs, explainable AI that satisfies regulatory transparency requirements, and hybrid systems in which AI handles scale while humans retain authority over values and accountability.